Anthropic and LLM Memory Management

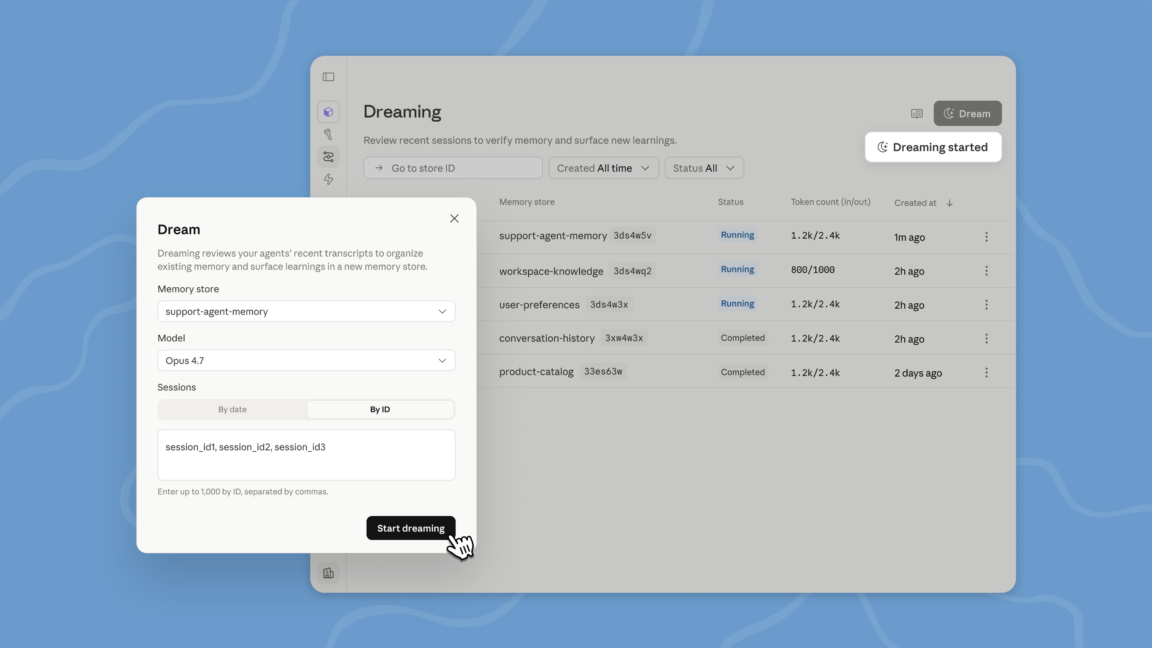

At its 'Code with Claude' developer conference, Anthropic announced a feature called 'dreaming' for Claude Managed Agents. The feature targets one of the most persistent challenges for Large Language Models (LLMs): managing memory and keeping information available across complex, long-running tasks. 'Dreaming' is currently in research preview and is limited to Managed Agents on the Claude platform.

Anthropic positions Managed Agents as a higher-level alternative to building directly on the Messages API. The company describes them as a 'pre-built, configurable agent harness that runs in managed infrastructure.' They are designed for scenarios in which multiple agents collaborate on a task or project over extended periods, from several minutes to hours, and need a consistency and continuity of information that LLMs working from a single context window struggle to maintain.

The 'Dreaming' Mechanism and Context Window Limitations

The 'dreaming' process is described as a scheduled activity that reviews recent sessions and their associated memory stores. The goal is to curate specific 'memories' worth storing to inform future tasks and interactions. This matters because LLM context windows, although they keep growing, remain finite. In prolonged projects, important information can fall out of the window and be 'forgotten' by the model, which compromises the coherence and usefulness of its responses.
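To make the idea concrete, here is a minimal sketch of a scheduled consolidation pass over recent sessions. It is not Anthropic's implementation: the `Memory` and `MemoryStore` types, the `consolidate` function, and the "notes that recur across sessions matter more" scoring rule are all hypothetical, chosen only to illustrate curation under a fixed capacity.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    text: str
    score: float  # hypothetical importance score; higher means more worth keeping


@dataclass
class MemoryStore:
    capacity: int  # maximum number of memories retained after a pass
    memories: list[Memory] = field(default_factory=list)


def consolidate(store: MemoryStore, recent_sessions: list[list[str]]) -> MemoryStore:
    """Review recent sessions and keep only the highest-scoring memories.

    Assumption: each session is already reduced to a list of short notes,
    and a note gains one point for every session it appears in.
    """
    counts: dict[str, int] = {}
    for session in recent_sessions:
        for note in set(session):  # count each note at most once per session
            counts[note] = counts.get(note, 0) + 1

    # Start from existing memories so earlier passes are not discarded outright.
    merged = {m.text: m.score for m in store.memories}
    for note, seen in counts.items():
        merged[note] = merged.get(note, 0.0) + float(seen)

    # Highest score first; ties broken alphabetically for determinism.
    ranked = sorted(merged.items(), key=lambda item: (-item[1], item[0]))
    kept = [Memory(text, score) for text, score in ranked[: store.capacity]]
    return MemoryStore(capacity=store.capacity, memories=kept)
```

In a real system the scoring step would presumably be done by a model rather than a counter, and the pass would run on a schedule. The capacity limit is what forces the curation decision the article describes.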

Anthropic emphasizes that memory management is critical to the reliability of LLMs in real-world applications. In chat-based interactions, many models use a related process called 'compaction,' which periodically analyzes a lengthy conversation and tries to remove irrelevant information from the context window, retaining only what matters for the ongoing conversation, project, or task. 'Dreaming' extends this idea to a more structured and proactive level for Anthropic's agents.
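Compaction can be sketched in a few lines. The version below is a simplified illustration, not any vendor's algorithm: it keeps the newest messages that fit a token budget and replaces everything older with a single summary line. The `estimate_tokens` word counter and the `summarize` placeholder are assumptions standing in for a real tokenizer and a model-generated summary.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def summarize(messages: list[str]) -> str:
    """Placeholder summarizer; a real system would likely call a model here."""
    return f"[summary of {len(messages)} earlier messages]"


def compact(messages: list[str], budget: int) -> list[str]:
    """Keep the newest messages that fit the budget; summarize the rest.

    Simplification: the summary line itself is not charged against the budget.
    """
    total = sum(estimate_tokens(m) for m in messages)
    if total <= budget:
        return list(messages)  # nothing to compact

    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order

    older = messages[: len(messages) - len(kept)]
    return [summarize(older)] + kept
```

This sketch decides what to drop purely by recency. The process the article describes judges relevance instead, which is a harder problem.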

Implications for LLM Deployments and Data Sovereignty

Managing long-term memory and maintaining context matters not only for how well LLMs perform but also for deployment decisions, especially in self-hosted or air-gapped environments. For organizations evaluating an on-premise deployment, memory management directly affects hardware requirements such as GPU VRAM, and with them the Total Cost of Ownership (TCO). A system that can 'remember' relevant information without continuously reloading it into the context window processes fewer tokens per request, which can lower the throughput it needs and make better use of compute.
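A back-of-the-envelope calculation shows why context length matters for VRAM. For a standard transformer, the key-value (KV) cache grows linearly with the number of tokens held in context. The model dimensions below (32 layers, 8 KV heads, head dimension 128, 16-bit values) are a hypothetical configuration chosen for round numbers, not the specification of any particular model. The sketch also ignores model weights, activations, and optimizations such as cache quantization.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2,
                   batch_size: int = 1) -> int:
    """Approximate KV cache size for a standard transformer decoder."""
    # The leading 2 accounts for one key tensor and one value tensor per layer.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


GIB = 1024 ** 3

# Hypothetical model: 32 layers, 8 KV heads, head_dim 128, fp16 cache.
short_context = kv_cache_bytes(32, 8, 128, seq_len=8_192)
long_context = kv_cache_bytes(32, 8, 128, seq_len=131_072)

print(short_context / GIB)  # 1.0
print(long_context / GIB)   # 16.0
```

Under these assumptions, carrying 131,072 tokens of history costs 16 GiB of cache per sequence, against 1 GiB for 8,192 tokens. A curated memory that keeps the working context short trims this cost directly.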

In contexts where data sovereignty and compliance are priorities, the ability to manage and curate memory becomes an enabling factor. Interactions can stay relevant without exposing the entire conversation history or sensitive data with every request, which improves security and privacy. For those evaluating analytical frameworks for on-premise deployment, AI-RADAR offers resources at /llm-onpremise that explore these trade-offs and their infrastructure implications.

Future Prospects for Intelligent Agents

Features like 'dreaming' point to the direction in which LLMs are evolving: from simple text generators into agents capable of reasoning and persistence. The ability to 'reflect' on past events and curate an internal memory opens up new possibilities for complex enterprise applications, from project management to problem-solving that requires deep, continuous contextual understanding.

However, implementing and scaling such memory systems presents significant challenges, including deciding what is 'important' enough to remember and how to manage selective forgetting. Research in this area is active, and progress here could make LLMs more capable and autonomous in scenarios that demand extended contextual understanding and reliable long-term memory.
