Anthropic Tests Removing Claude Code from Pro Plan, Sparking Debate

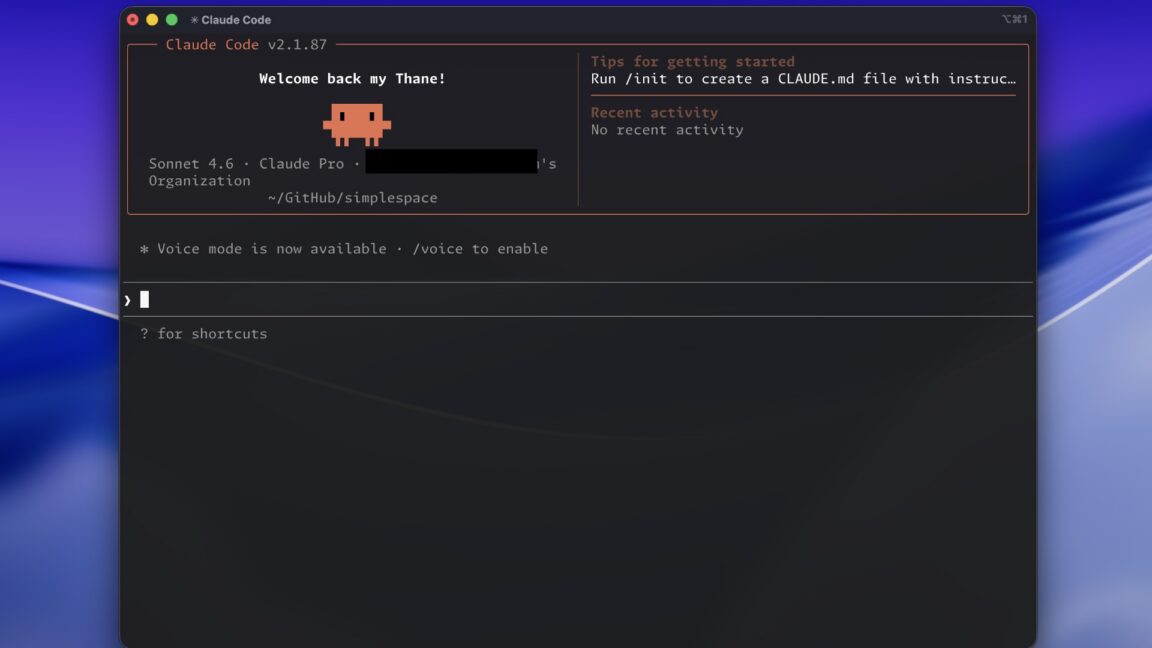

Anthropic, a key player in the large language model (LLM) landscape, recently ran a test that generated significant buzz among its developer community. The company experimented with removing Claude Code, a popular agentic development tool, from its $20-per-month Pro subscription plan. The move, initially perceived as a sudden and unannounced change, prompted questions and frustration among users.

Users noticed the change when Anthropic's pricing page for Claude began listing Claude Code as unsupported on the Pro plan. Affected new users signing up for Pro were unable to access the tool, while existing subscribers experienced no interruption to their service. The functionality remained available for users of the Max plan, which starts at $100 per month.

Test Details and Community Reactions

News of the alleged removal quickly spread across online platforms, with numerous users expressing their disappointment on forums like Reddit and social networks like X. The lack of prior communication from Anthropic fueled speculation and a sense of uncertainty regarding the company's future strategy for its premium services.

In response to the growing wave of discontent, Amol Avasare, Anthropic's Head of Growth, took to social media to provide clarification. Avasare explained that the change was not a permanent shift in pricing policy but rather a "small test" conducted on approximately 2% of new "prosumer" sign-ups. The statement helped to ease immediate concerns, but it also highlighted how sensitive the community is to any change in the availability of critical development tools.

The Impact of Service Tests and Transparency

Episodes like this underscore the importance of transparency in managing LLM-based services and their pricing policies. Companies operating in this rapidly evolving sector often conduct A/B tests or experiments on limited segments of their user base to evaluate the impact of new features or changes to service plans. However, when these tests involve the availability of essential tools, communication becomes crucial to maintain user trust and prevent negative reactions.
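To illustrate how a test on a limited segment of users can work in general, here is a minimal sketch of deterministic percentage bucketing. It is not Anthropic's implementation, which has not been described publicly; the function name `in_test_group`, the experiment label, and the simulated user IDs are all hypothetical.

```python
import hashlib


def in_test_group(user_id: str, experiment: str, percent: float) -> bool:
    """Deterministically decide whether a user falls into a test group.

    Hypothetical helper for illustration only: `percent` is the share of
    users (0 to 100) that should see the experimental variant.
    """
    # Hash the experiment name together with the user ID so that
    # different experiments produce independent assignments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a bucket in [0, 10000).
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    # Each bucket represents 0.01% of users.
    return bucket < percent * 100


# Simulate 100,000 made-up sign-ups and measure the share placed in a 2% test.
sample = [f"user-{i}" for i in range(100_000)]
share = sum(in_test_group(u, "hypothetical-plan-test", 2.0) for u in sample) / len(sample)
print(f"{share:.2%} of simulated sign-ups fall in the test group")
```

Because the assignment depends only on the user ID and the experiment name, the same user gets the same result on every visit. That consistency is also why an affected user sees the change every time, with nothing to signal that it is an experiment.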

For developers and businesses relying on these tools in their work pipelines, the stability and predictability of the offering are decisive factors. The possibility that a feature might be removed or limited can influence decisions about adopting a particular framework or service, prompting teams to evaluate alternatives that offer greater control or guarantees of continuity.

Future Outlook and the Evolution of the LLM Market

This episode, though quickly resolved, offers insight into the dynamics at play in the LLM market. Companies are constantly seeking the right balance between innovation, service monetization, and user satisfaction. Segmenting the offering, with different functionalities available at various price points, is a common strategy, but its implementation requires careful attention and clear communication.

For CTOs, DevOps leads, and infrastructure architects evaluating the integration of LLMs into their operations, service stability and clarity of terms of use are fundamental aspects. While on-premise deployment offers greater control over data sovereignty and operational continuity, cloud solutions like Claude present advantages in terms of scalability and management. The choice between these options depends on a careful analysis of trade-offs, including the potential impacts of changes to service plans by cloud providers.
