A Targeted Attack and Serious Charges

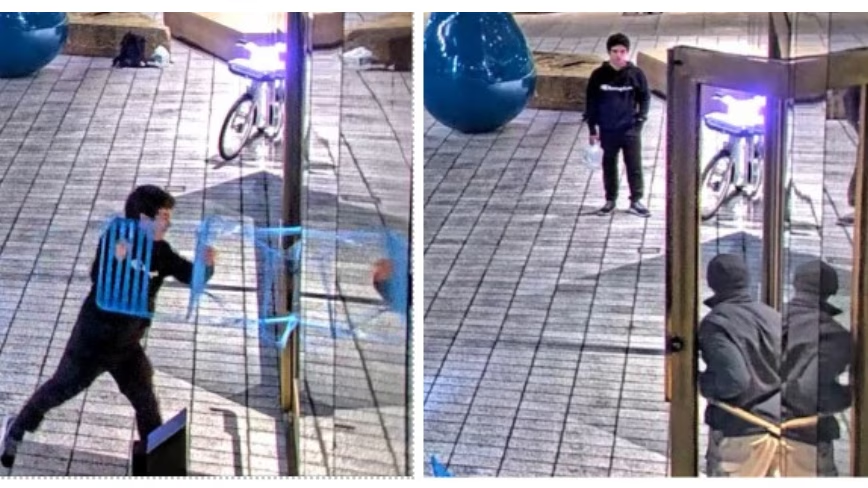

Daniel Moreno-Gama, 20, has pleaded not guilty to two counts of attempted murder and nine other state charges. The charges stem from an incident in San Francisco in which he allegedly threw a Molotov cocktail at the home of Sam Altman, CEO of OpenAI. According to reports, Moreno-Gama then allegedly walked about three miles to OpenAI's headquarters and threatened to set the building on fire.

Reports also state that he was carrying a kill list of AI CEOs and a jug of kerosene, details that point to premeditated intent. The charges are serious and carry a possible life sentence. Moreno-Gama's defense has nonetheless sought to downplay the incident, describing it as a "property crime." That framing sharply contrasts with the prosecution's charges, which emphasize the potential danger his alleged actions posed to people.

Implications for Security in the AI Sector

The incident raises pressing questions about the physical security of key figures and infrastructure in the artificial intelligence sector. As AI becomes more pervasive and influential, companies and their leaders face risks that extend beyond traditional cyber threats. Protecting physical premises, data centers, and personnel is becoming a core element of risk management strategies.

For organizations that opt for on-premise deployments, physical security is both a component of TCO and a prerequisite for data sovereignty. Directly controlling the physical environment where servers, GPUs, and other hardware reside gives an organization more control over who can reach that hardware, but it requires significant investment in surveillance, access control, and emergency response plans. In cloud models, by contrast, physical security is delegated to the provider, while securing data, identities, and configurations remains largely the client's responsibility.

Context of Growing Polarization

This episode comes amid growing polarization in the public debate over the impact and future of artificial intelligence. AI is widely seen as a driver of progress and innovation, yet it also raises serious concerns about ethics, control, and potential existential risks. This tension sometimes fuels extreme reactions, ranging from protest to, as alleged in this case, violence.

For companies in the sector, managing public perception and communicating transparently about the risks and benefits of AI are becoming essential. Engaging with society and addressing its concerns constructively helps maintain trust and prevents tensions from escalating to the point where they threaten a company's operational stability and reputation.

Future Prospects for AI Resilience

The AI industry, while focusing on technological development, must simultaneously strengthen its resilience strategies. This includes not only cybersecurity and operational continuity of systems but also the protection of human and physical assets. Risk assessment must extend to scenarios that might have seemed remote in the past, considering the growing visibility and impact of AI technologies.

For companies considering the adoption of LLMs and other AI solutions, the choice between on-premise and cloud deployment is not just about performance and costs, but also about the ability to manage a complex security ecosystem. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate the trade-offs between control, security, and TCO, providing tools for informed decisions in an increasingly unpredictable environment.
