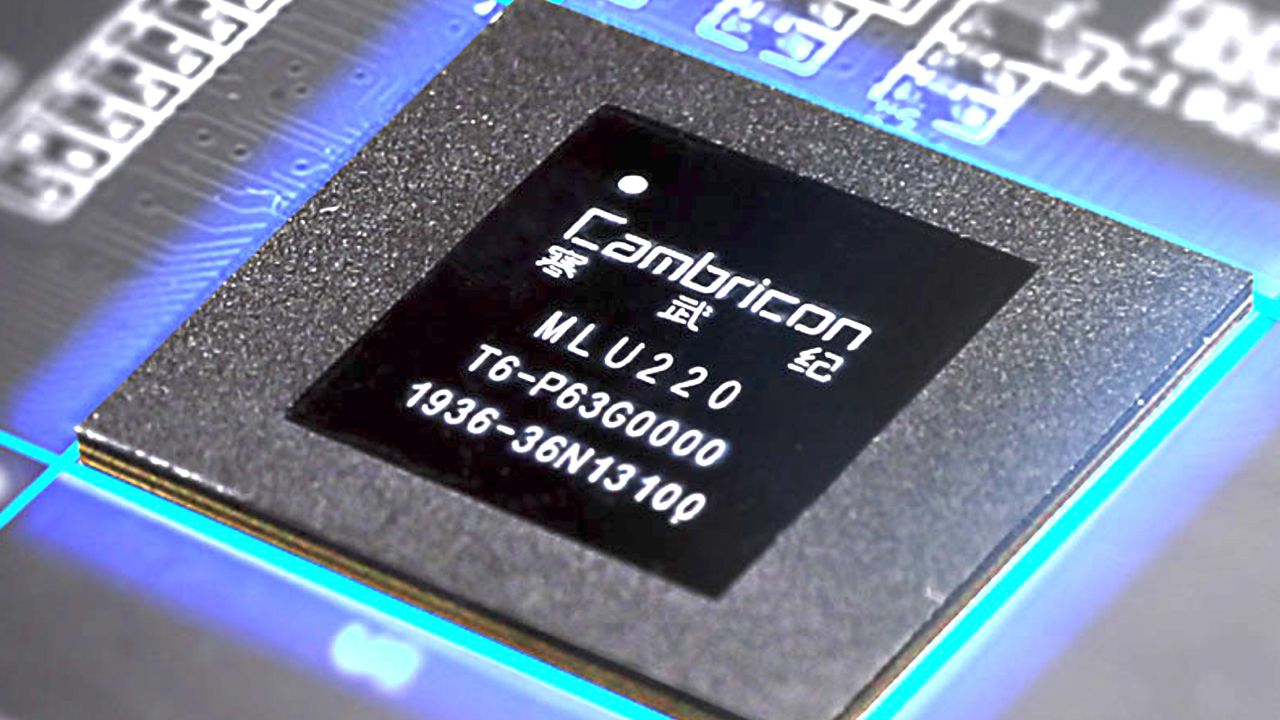

Cambricon and the Rise of China's AI Chip Market

Cambricon, a key player in the Chinese AI chip landscape, has announced Q1 revenue of $423 million. The figure highlights the company's growth and underscores how quickly China's domestic market for AI-specific chips is accelerating. In a global market dominated by a few major names, the emergence of local alternatives has significant implications for AI deployment strategies, particularly for organizations that prioritize on-premise solutions.

China's drive towards technological self-sufficiency is leading to rapid development and adoption of proprietary hardware. This trend means increasing competition for established international players such as Nvidia, which is seeing its market share erode in one of the world's most dynamic digital economies. For CTOs and infrastructure architects, the wider range of hardware options is a crucial factor in planning AI workloads.

The Context of Chinese AI Silicon

The acceleration of the Chinese AI chip market is driven by a combination of strategic and commercial factors. The pursuit of technological sovereignty and reduced dependence on foreign suppliers are national priorities. This translates into massive investments in research and development of silicon designed specifically for local needs, ranging from large language model (LLM) processing to computer vision and industrial automation.

For companies operating under stringent compliance requirements or in air-gapped environments, the availability of hardware from diverse supply chains can be a strategic advantage. A wider choice of accelerator suppliers can influence the total cost of ownership (TCO) of a deployment and also mitigate risks related to supply chain disruptions or geopolitical restrictions. Diversification of hardware sources is a key element of infrastructure resilience.

Implications for On-Premise Deployment

The expansion of players like Cambricon in the AI chip market offers new options for organizations evaluating on-premise deployment of AI workloads. Traditionally, the choice of high-performance GPUs was limited to a few vendors, with consequences for cost, availability, and architectural flexibility. The emergence of alternatives can stimulate innovation and competition, potentially leading to solutions better optimized for specific use cases or to better cost-effectiveness.

For those designing self-hosted AI infrastructures, evaluating new hardware options requires an in-depth analysis of trade-offs. Compatibility with existing software frameworks, support for quantization, available VRAM, and inference throughput are all critical factors. AI-RADAR, for example, offers analytical frameworks on /llm-onpremise to help evaluate these trade-offs, providing tools to compare the performance and costs of different silicon architectures in a local deployment context.
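As a rough illustration of how quantization and available VRAM interact, the sketch below estimates the memory needed for model weights at a given precision and checks it against a card's capacity. The function names and the 20% overhead allowance (for activations, KV cache, and runtime buffers) are assumptions made for this example; they are not taken from any vendor's tooling, and real requirements depend on the model, batch size, and inference runtime.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GiB."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 2**30


def fits_in_vram(
    num_params: float,
    bits_per_weight: int,
    vram_gib: float,
    overhead_fraction: float = 0.2,  # assumed allowance, not a measured value
) -> bool:
    """Return True if weights plus the assumed overhead fit in vram_gib."""
    needed = weight_memory_gib(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gib


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for bits in (16, 8, 4):
        gib = weight_memory_gib(params, bits)
        print(f"{bits}-bit weights: {gib:.2f} GiB")
```

Under these assumptions, halving the bits per weight halves the weight footprint, which is why quantization support can decide whether a given model fits on a given accelerator at all. Throughput and framework compatibility still have to be measured on the actual hardware.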

Future Outlook and Challenges

Cambricon's success and the growth of the Chinese AI chip market indicate a clear trend towards greater fragmentation and diversification in the artificial intelligence hardware sector. This evolution offers more choice and potential benefits in terms of TCO and data sovereignty, but it also presents challenges related to integration, standardization, and technical support. Companies will need to navigate an increasingly complex hardware ecosystem, balancing performance needs with reliability and long-term sustainability.

The ability of these new players to compete not only on price but also on performance and software integration will be crucial for their long-term success. For decision-makers, staying up to date on silicon market developments is essential for building resilient, efficient AI infrastructures aligned with corporate strategies for data control and sovereignty.
