AI-Driven HBM Memory Shortage: A Long-Term Challenge

Samsung and SK hynix, two of the world's leading memory manufacturers, have warned that the shortage of High Bandwidth Memory (HBM), a key component for artificial intelligence hardware, could persist until 2027 and beyond. The forecast points to growing pressure on the supply chain, with direct implications for the global tech industry. Explosive demand for AI solutions is driving customers to reserve supply years in advance, making the market extremely competitive.

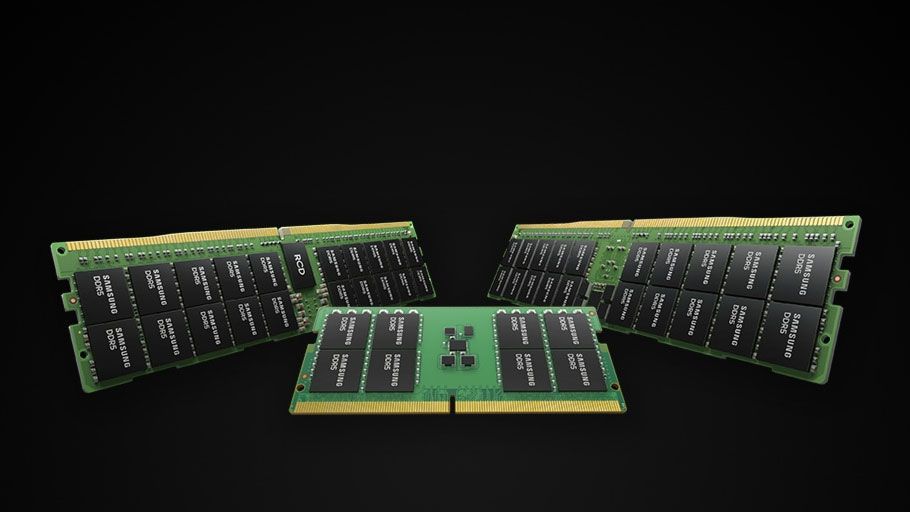

In parallel, the broader DRAM market, while less in the spotlight than HBM, is beginning to show signs of tightening. Taken together, these trends suggest that companies relying on these technologies will face significant challenges in planning for and acquiring the components their deployments depend on.

The Critical Role of HBM Memory in the AI Era

HBM has become an indispensable component for artificial intelligence workloads, particularly for Large Language Models (LLMs) and other high-performance computing applications. Its vertically stacked architecture delivers significantly higher memory bandwidth than conventional DRAM modules, reducing data transfer bottlenecks between the GPU and memory. This capability is fundamental for accelerating the training and inference of increasingly large and complex AI models, which require rapid access to enormous amounts of data and parameters.
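To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a simplified case in which generating each token requires reading all model weights from memory once (single request, no batching, and ignoring the KV cache and compute time), so memory bandwidth alone sets an upper bound on token rate. The function name, the model size, and both bandwidth figures are hypothetical values chosen for illustration, not measurements or vendor specifications.

```python
def max_decode_tokens_per_second(params_billion: float,
                                 bytes_per_param: float,
                                 bandwidth_gb_s: float) -> float:
    """Upper bound on token rate if each token reads every weight once.

    Simplifying assumption: the workload is purely memory-bandwidth bound.
    """
    model_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_s = bandwidth_gb_s * 1e9
    return bandwidth_bytes_s / model_bytes


# Hypothetical example: a 70B-parameter model stored at 2 bytes per parameter.
PARAMS_BILLION = 70
BYTES_PER_PARAM = 2

# Illustrative bandwidth figures only (assumptions, not product specs).
scenarios = {
    "conventional DRAM (assumed 100 GB/s)": 100,
    "HBM (assumed 3000 GB/s)": 3000,
}

for label, bandwidth in scenarios.items():
    rate = max_decode_tokens_per_second(PARAMS_BILLION, BYTES_PER_PARAM, bandwidth)
    print(f"{label}: at most {rate:.2f} tokens/s")
```

Under these assumptions the bound scales linearly with bandwidth, which is why the same accelerator paired with slower memory would spend most of its time waiting for data.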

The current AI race has amplified the demand for high-end GPUs, which in turn integrate HBM modules to maximize performance. Manufacturers' ability to meet this demand is now under scrutiny, with major industry players reporting saturated production capacity and extended lead times. The practice of reserving supplies years in advance underscores the severity of the situation and the perception of a structural, rather than transient, shortage.

Implications for On-Premise Deployments and TCO

For companies evaluating or already implementing on-premise AI solutions, this HBM shortage and the tightening DRAM market represent a considerable challenge. The limited availability of key components can translate into longer waiting times for hardware, higher costs, and increased complexity in infrastructure planning. The Total Cost of Ownership (TCO) for self-hosted AI deployments could increase, not only due to the direct price of components but also due to indirect costs related to delays and the need for more aggressive procurement strategies.
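The split between direct and indirect costs can be sketched with a small Python model. Everything here is a hypothetical illustration: the `DeploymentScenario` class, its fields, and all figures are assumptions made for the example, and a real TCO model would include many more terms (power, staffing, depreciation, financing).

```python
from dataclasses import dataclass


@dataclass
class DeploymentScenario:
    """Hypothetical, deliberately simplified TCO model for a self-hosted deployment."""
    hardware_cost: float             # direct: purchase price of servers and accelerators
    annual_operating_cost: float     # direct: recurring yearly running costs
    years: int                       # planning horizon
    delay_months: float = 0.0        # indirect: months spent waiting for hardware
    monthly_delay_cost: float = 0.0  # indirect: assumed cost of each month of delay

    def direct_cost(self) -> float:
        return self.hardware_cost + self.annual_operating_cost * self.years

    def indirect_cost(self) -> float:
        return self.delay_months * self.monthly_delay_cost

    def tco(self) -> float:
        return self.direct_cost() + self.indirect_cost()


# All numbers below are invented for illustration.
baseline = DeploymentScenario(hardware_cost=1_000_000,
                              annual_operating_cost=200_000,
                              years=3)
constrained = DeploymentScenario(hardware_cost=1_250_000,   # assumed price increase
                                 annual_operating_cost=200_000,
                                 years=3,
                                 delay_months=6,            # assumed longer lead time
                                 monthly_delay_cost=50_000)

print(f"Baseline TCO:    {baseline.tco():,.0f}")
print(f"Constrained TCO: {constrained.tco():,.0f}")
print(f"Increase:        {constrained.tco() - baseline.tco():,.0f}")
```

In this invented scenario, more than half of the increase comes from the delay term rather than the component price, which is the kind of effect a procurement plan would need to account for.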

The choice between a cloud and a self-hosted infrastructure becomes even more critical in this context. While the cloud offers flexibility and immediate access to resources, companies prioritizing data sovereignty, control, and hardware customization may find themselves navigating a more volatile component market. The need to secure stable and predictable supplies becomes a decisive factor in AI infrastructure investment decisions.

Future Outlook and Mitigation Strategies

An HBM shortage that persists until 2027 and beyond calls for long-term strategic thinking across the industry. Memory manufacturers can expand production capacity, but doing so requires massive investment and takes years to materialize. In the meantime, companies will need to adopt mitigation strategies such as diversifying suppliers, optimizing the use of existing resources, and evaluating alternative hardware architectures that reduce reliance on a single type of memory.

For those evaluating on-premise deployments, it is crucial to factor these supply constraints into the planning phase. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate the trade-offs between costs, performance, and availability, helping organizations make informed decisions in a continuously evolving market landscape. The ability to anticipate and manage these challenges will shape the success of AI initiatives in the coming years.
