The Growing Complexity in AI Chip Testing

The artificial intelligence sector is evolving rapidly, driven by an ever-increasing demand for computing power. At the heart of this shift are AI chips, the fundamental components for training and inference of Large Language Models (LLMs) and other advanced applications. Producing these processors, however, is not without its challenges. One of the most significant, and often underestimated, is the growing complexity of testing them.

This complexity is not just a technical detail: it is already influencing demand for specific components, such as "probe cards," and for the entire upstream supply chain. For companies evaluating an on-premise LLM deployment, understanding these dynamics is crucial, because they directly affect hardware availability, costs, and ultimately the total cost of ownership (TCO) of the infrastructure.

Technical Details and Supply Chain Impact

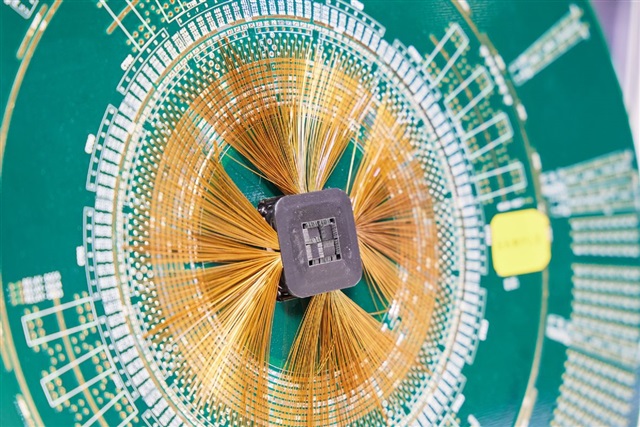

The complexity of AI chip testing stems from several factors. Modern architectures, often built on heterogeneous integration, combine specialized compute cores, high-bandwidth memory (HBM), and advanced interconnects such as NVLink. Testing each of these components, and the interactions between them, requires extremely precise tools. "Probe cards" are a prime example: they are the critical interface that connects each chip, while it is still on the wafer, to the test equipment that verifies its functionality and performance. As transistor density rises and features keep shrinking, the requirements on probe-card precision and durability become ever more stringent.
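One way to see why each component is worth testing before assembly is a simple compound-yield model. The sketch below is illustrative only: it assumes that defects in different dies are independent, that a package works only if every die or HBM stack in it is good, and it ignores test escapes and assembly defects. The function names, costs (in arbitrary units), and yields are hypothetical and are not taken from any real product.

```python
from math import prod


def package_yield(component_yields: list[float]) -> float:
    """Fraction of assembled packages that work, assuming every die/stack
    must be good and defects in different components are independent."""
    for y in component_yields:
        if not 0.0 < y <= 1.0:
            raise ValueError(f"yield must be in (0, 1], got {y}")
    return prod(component_yields)


def cost_per_good_package(
    component_costs: list[float],
    component_yields: list[float],
    assembly_cost: float,
    pretested: bool,
    test_cost_per_part: float = 0.0,
) -> float:
    """Expected spend per working package under two simplified flows.

    pretested=False: parts are assembled without wafer-level test, and the
    whole package is scrapped if any single part is bad.
    pretested=True: every part is probed on the wafer and only good parts
    are assembled (test escapes and assembly defects are ignored).
    """
    if len(component_costs) != len(component_yields):
        raise ValueError("need one yield per component cost")
    good_fraction = package_yield(component_yields)  # also validates yields
    if pretested:
        # Each good part costs (fabrication + test) / yield on average,
        # because the bad parts are discarded before assembly.
        return sum(
            (c + test_cost_per_part) / y
            for c, y in zip(component_costs, component_yields)
        ) + assembly_cost
    # Untested flow: every part and the assembly are paid for, but only
    # good_fraction of the assembled packages actually work.
    return (sum(component_costs) + assembly_cost) / good_fraction


# Hypothetical package: one compute die (90% yield) plus six HBM stacks (98% each).
costs = [100.0] + [20.0] * 6
yields = [0.90] + [0.98] * 6
print(package_yield(yields))
print(cost_per_good_package(costs, yields, 50.0, pretested=False))
print(cost_per_good_package(costs, yields, 50.0, pretested=True, test_cost_per_part=2.0))
```

With these made-up numbers the package yield falls to just under 0.8, so screening parts on the wafer comes out cheaper per working package than scrapping whole assemblies, even after paying for the test itself. That economic pressure is one reason probe-card precision matters.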

The impact extends throughout the "upstream supply chain." This includes suppliers of semiconductor materials, manufacturers of lithography and packaging machinery, and companies specializing in test and measurement solutions. The need for more sophisticated equipment and more rigorous production processes to ensure the reliability of AI chips translates into higher demand and, potentially, longer lead times and increased costs for these essential components.

Implications for On-Premise Deployments

For organizations choosing to run LLMs and other AI solutions in self-hosted environments, the dynamics of the AI chip supply chain are of paramount importance. The availability and cost of hardware, particularly GPUs with large amounts of VRAM, are decisive factors for the success and sustainability of an on-premise deployment. If testing complexity slows production or raises costs, companies could face significant challenges.

This scenario can raise the initial capital expenditure (CapEx) for servers and GPUs or extend the waiting time for component procurement. TCO planning therefore becomes more complex and requires a careful evaluation of supply chain risks. On-premise deployment is often chosen for data sovereignty, regulatory compliance, or the need for air-gapped environments, which makes reliable access to hardware a non-negotiable constraint. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs and support infrastructure decisions.
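To make the CapEx and lead-time effect concrete, here is a deliberately simple, undiscounted cost comparison. It is a sketch under stated assumptions, not a standard TCO model and not the AI-RADAR frameworks mentioned above: it ignores discounting, depreciation and resale value, accrues operating costs only after the hardware is delivered, and charges a hypothetical stopgap cost (for example, rented capacity) for every month of procurement delay. All names and figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProcurementScenario:
    name: str
    capex: float                 # upfront purchase of servers and GPUs
    annual_opex: float           # power, cooling, maintenance, staff share
    lead_time_months: float      # time from order until the hardware is in production
    interim_monthly_cost: float  # hypothetical stopgap spend while waiting


def simple_tco(s: ProcurementScenario, horizon_years: float) -> float:
    """Undiscounted cost over the planning horizon.

    Assumptions: no discounting, no depreciation or resale value; operating
    costs accrue only after delivery, stopgap costs only during the lead time.
    """
    horizon_months = horizon_years * 12
    if not 0 <= s.lead_time_months <= horizon_months:
        raise ValueError("lead time must fall within the planning horizon")
    operating_years = (horizon_months - s.lead_time_months) / 12
    return (
        s.capex
        + s.annual_opex * operating_years
        + s.interim_monthly_cost * s.lead_time_months
    )


# Hypothetical figures: a tighter supply chain raises the hardware price
# and stretches the lead time from 3 to 9 months.
baseline = ProcurementScenario("baseline", 400_000, 80_000, 3, 20_000)
constrained = ProcurementScenario("supply-constrained", 460_000, 80_000, 9, 20_000)
for scenario in (baseline, constrained):
    print(scenario.name, simple_tco(scenario, horizon_years=3))
```

Even in this toy model, a longer lead time costs more than the price increase alone, because the stopgap spend grows month by month while the purchased hardware sits in the delivery queue.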

Perspectives and Strategies for Decision-Makers

Facing these challenges, CTOs, DevOps leads, and infrastructure architects must adopt a strategic approach. Closely monitoring the semiconductor market and supply chain trends becomes essential to anticipate potential disruptions or cost increases. Diversifying suppliers, where possible, and evaluating alternative hardware architectures can help mitigate risks.

A company's ability to develop and maintain a robust on-premise AI infrastructure will increasingly depend on its understanding of chip production and testing dynamics. Investing in long-term planning and a deep knowledge of the hardware market is fundamental to ensuring the resilience and effectiveness of AI strategies, while maintaining control over data and operational costs.
