Pressure on AI Server ODM Margins

The surging demand for artificial intelligence, particularly for intensive Large Language Model (LLM) workloads, is putting pressure on the entire hardware supply chain. A critical aspect of this market dynamic, as highlighted by DIGITIMES, is rising memory costs, which are eroding the margins of AI server Original Design Manufacturers (ODMs). The squeeze hits ODM profitability directly and also affects the broader AI infrastructure market and enterprise deployment strategies.

ODMs play a crucial role in producing customized servers for specific AI needs, often working closely with chip suppliers and end customers. Their ability to maintain healthy margins is essential for innovation and the stability of the supply chain. The increase in costs for key components, such as memory, presents a direct challenge for these players, who must balance price competitiveness with the need to cover production costs.
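
The margin squeeze comes down to simple arithmetic. The Python sketch below assumes a fixed selling price and a production cost in which only the memory portion changes. Every figure and function name is hypothetical, chosen for illustration, and not drawn from DIGITIMES or from any ODM's accounts.

```python
def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of the selling price."""
    return (price - cost) / price


def cost_after_memory_increase(total_cost: float, memory_cost: float,
                               increase: float) -> float:
    """Total production cost after memory prices rise by `increase` (0.20 = 20%)."""
    return total_cost + memory_cost * increase


# Hypothetical figures (assumptions, not real ODM data).
price = 200_000        # selling price per server
total_cost = 188_000   # production cost, including memory
memory_cost = 40_000   # memory portion of that cost

before = gross_margin(price, total_cost)
after = gross_margin(
    price, cost_after_memory_increase(total_cost, memory_cost, 0.20)
)
print(f"margin before: {before:.1%}, after: {after:.1%}")
```

With these assumed numbers, a 20% rise in memory prices cuts the margin from 6% to 2% unless the ODM raises its price, which is the balance between competitiveness and cost coverage described above.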

The Impact of Memory on On-Premise AI Infrastructure

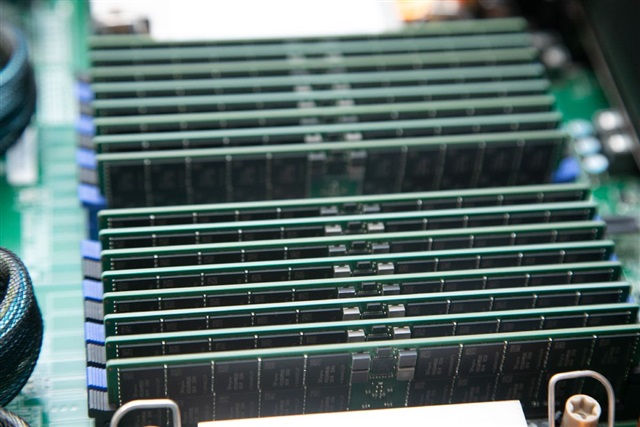

AI servers, especially those dedicated to LLM training and inference, require large amounts of high-bandwidth memory, such as the VRAM found in high-end GPUs. Rising prices for these essential components translate directly into higher production costs for ODMs. For enterprises considering self-hosted or on-premise AI infrastructure, this means higher upfront capital expenditure (CapEx) to acquire the necessary hardware.

This factor becomes crucial in evaluating the long-term Total Cost of Ownership (TCO). While on-premise solutions can offer advantages in terms of data sovereignty, control, and predictable operational costs over time, a significant increase in initial hardware costs can alter the economic equation. Organizations must carefully balance the upfront cost with long-term benefits, also considering the energy, cooling, and maintenance costs associated with local infrastructure.
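
To make the TCO reasoning concrete, here is a minimal Python sketch. It assumes a simple undiscounted model (upfront CapEx plus a flat annual operating cost covering energy, cooling, and maintenance), and it assumes that memory accounts for a known share of the hardware price. The function names and all figures are hypothetical.

```python
def capex_after_memory_increase(capex: float, memory_share: float,
                                memory_price_increase: float) -> float:
    """Hardware cost after memory prices rise.

    memory_share: fraction of the hardware price attributable to memory.
    memory_price_increase: relative increase in memory prices (0.5 = 50%).
    """
    return capex * (1 + memory_share * memory_price_increase)


def total_cost_of_ownership(capex: float, annual_opex: float, years: int) -> float:
    """Undiscounted TCO: upfront cost plus recurring costs over the period."""
    return capex + annual_opex * years


# Hypothetical figures (assumptions for illustration only).
base_capex = 100_000   # hardware purchase price
annual_opex = 15_000   # energy, cooling, and maintenance per year
years = 4

new_capex = capex_after_memory_increase(base_capex, memory_share=0.25,
                                        memory_price_increase=0.5)
base_tco = total_cost_of_ownership(base_capex, annual_opex, years)
new_tco = total_cost_of_ownership(new_capex, annual_opex, years)
print(f"TCO before: {base_tco:,.0f}, after: {new_tco:,.0f}")
```

In this simplified model the memory increase falls entirely on the upfront cost, so its relative weight in the TCO shrinks as the evaluation period lengthens. A fuller analysis would also discount future costs and account for hardware resale value.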

Market Dynamics and Procurement Strategies

The pressure on ODM margins can lead to various market consequences, including potential delays in AI server deliveries or reduced flexibility in offered hardware configurations. Companies planning investments in AI infrastructure must closely monitor these market dynamics to effectively plan purchases and mitigate risks related to component availability and cost. Cost volatility can complicate the choice between cloud and on-premise solutions, requiring a more in-depth analysis of trade-offs.
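
One simple way to frame the cloud versus on-premise trade-off is a break-even calculation. The Python sketch below assumes constant monthly costs on both sides and ignores financing, depreciation, and utilization differences. The function name and the figures are hypothetical.

```python
from typing import Optional


def break_even_months(capex: float, onprem_monthly_opex: float,
                      cloud_monthly_cost: float) -> Optional[float]:
    """Months until cumulative cloud spend equals on-premise spend.

    Returns None when the cloud is not more expensive per month, in which
    case the upfront hardware cost is never recovered under this model.
    """
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


# Hypothetical figures (assumptions for illustration only).
baseline = break_even_months(120_000, 2_000, 7_000)
after_price_rise = break_even_months(135_000, 2_000, 7_000)
print(baseline, after_price_rise)
```

Under these assumptions, a higher hardware price pushes the break-even point later, which is why volatile component costs make the comparison harder to settle in advance.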

Scalability, control over sensitive data, and regulatory compliance must be carefully weighed against initial cost and component availability. When costs are rising, getting more out of existing hardware, for example by quantizing LLMs or adopting more efficient architectures, becomes even more important for maximizing return on investment.
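
A back-of-the-envelope estimate shows why quantization matters for memory-constrained hardware. The Python sketch below counts model weights only, at a given number of bits per weight. It deliberately ignores the KV cache, activations, and the small overhead of quantization metadata, so real requirements are higher. The function name and the 70-billion-parameter example are assumptions for illustration.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical model size (assumption for illustration only).
params = 70_000_000_000

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: about {weight_memory_gb(params, bits):.0f} GB")
```

Going from 16-bit to 4-bit weights cuts the weight footprint to a quarter in this estimate, which can reduce the number of GPUs needed to host a model, at the cost of some potential accuracy loss that has to be evaluated per workload.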

Strategic Considerations for LLM Deployment

For CTOs, DevOps leads, and infrastructure architects, the volatility of memory costs underscores the importance of robust strategic planning for LLM deployments. Evaluating self-hosted alternatives requires a thorough TCO analysis, considering not only the initial purchase but also ongoing operational costs. The ability to forecast and manage these costs is fundamental for the sustainability of AI projects.

In this scenario, hardware selection, server configuration, and procurement strategies become critical decisions. AI-RADAR offers analytical frameworks on /llm-onpremise to help organizations evaluate these complex trade-offs, providing a solid foundation for informed decisions on AI/LLM workload deployment that prioritize data sovereignty, control, and optimized TCO. Understanding supply chain dynamics is an essential step in building resilient and high-performing AI infrastructure.
