Denmark Halts Data Center Development for AI

Denmark has announced a suspension of new grid connections for data centers, a clear signal of the infrastructure pressure created by the expansion of artificial intelligence. Requests for new connections total 60 GW, a measure of how much power modern AI workloads demand. The decision makes Denmark the latest Nordic nation to put a brake on the rapid build-out of AI-dedicated infrastructure.

The phenomenon is not isolated. It reflects a broader trend across Europe and worldwide, where the race to build data centers for the training and inference of Large Language Models (LLMs) is straining existing power grids. Powering thousands of high-performance GPUs, along with their cooling systems, creates an energy requirement that can exceed local generation and distribution capacity. The result is situations like Denmark's, where grid stability becomes a primary concern.

The Energy Challenges of AI Workloads

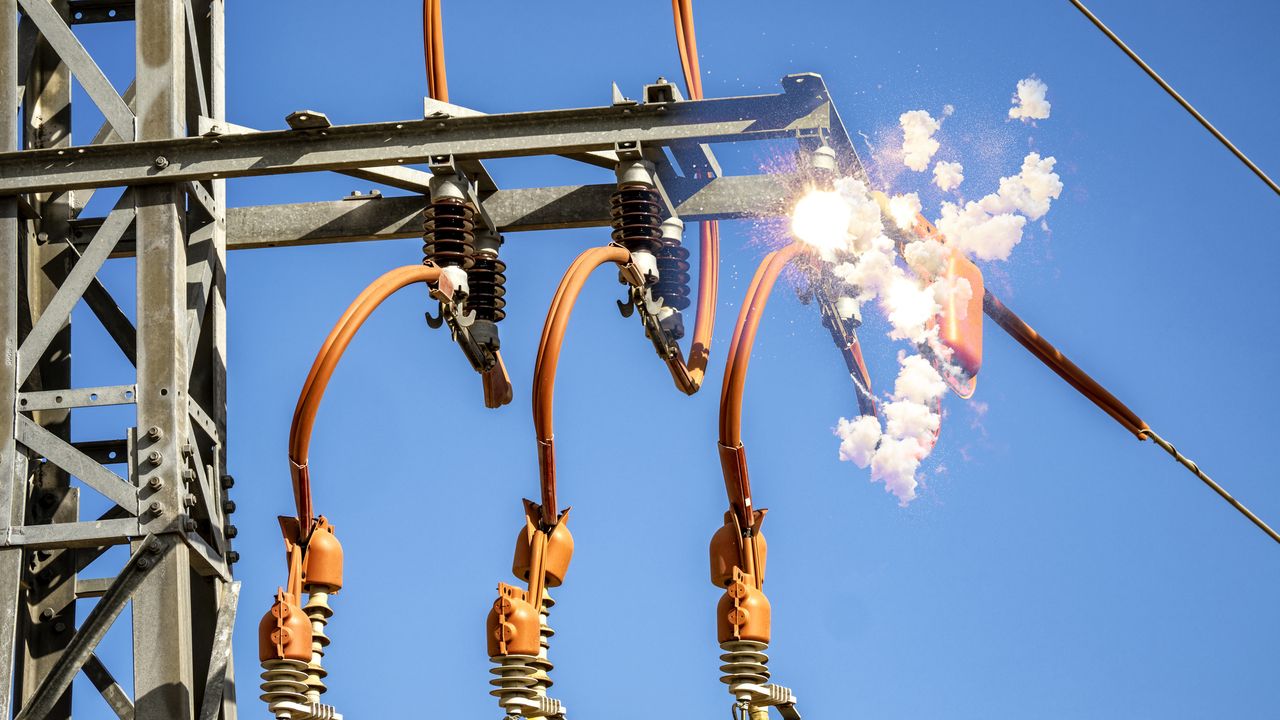

The halt in connections in Denmark is directly linked to “high voltage sparking” issues, an indication that the existing electrical infrastructure is struggling to handle both demand peaks and high constant loads. Modern data centers, especially those optimized for AI, are heavy energy consumers. A single server rack equipped with state-of-the-art GPUs, such as NVIDIA H100s or A100s, can require tens of kilowatts, and an entire data center can easily exceed 100 MW of consumption.
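The rack and facility figures above can be related with a back-of-the-envelope calculation. The sketch below is illustrative only: the function names, the 700 W per-GPU draw, the 8-GPU servers, the 4 servers per rack, and the 1.5 overhead factor for CPUs, memory, networking, and fans are all assumptions, not values reported for any Danish site.

```python
import math


def rack_power_kw(gpus_per_server, servers_per_rack, gpu_watts, overhead_factor):
    """Estimate the power draw of one rack in kW.

    overhead_factor is an assumed multiplier covering everything in the
    rack that is not a GPU (CPUs, memory, NICs, fans, power conversion).
    """
    gpu_watts_total = gpus_per_server * servers_per_rack * gpu_watts
    return gpu_watts_total * overhead_factor / 1000.0


def racks_within_budget(it_budget_mw, rack_kw):
    """Number of whole racks that fit inside a given IT power budget."""
    return math.floor(it_budget_mw * 1000.0 / rack_kw)


# Hypothetical configuration: 4 servers per rack, 8 GPUs each, 700 W per GPU.
rack_kw = rack_power_kw(gpus_per_server=8, servers_per_rack=4,
                        gpu_watts=700.0, overhead_factor=1.5)
racks = racks_within_budget(it_budget_mw=100.0, rack_kw=rack_kw)

print(f"Estimated rack power: {rack_kw:.1f} kW")
print(f"Racks supported by 100 MW of IT load: {racks}")
```

Under these assumptions a rack lands in the "tens of kilowatts" range, and a 100 MW facility corresponds to a few thousand such racks. Changing any input shifts the result proportionally, which is why site-specific measurements matter more than the defaults used here.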

These energy requirements cover not only direct server power but also critical auxiliary systems such as cooling. The high heat density generated by GPUs demands advanced cooling solutions, often liquid-based, which in turn consume significant energy. For CTOs and infrastructure architects evaluating on-premise LLM deployments, the availability of reliable and scalable power is a critical factor in both Total Cost of Ownership (TCO) and project feasibility. Planning must cover not only hardware procurement but also the adaptation of electrical and cooling infrastructure, which often carries considerable cost and long implementation times.
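One common way to fold cooling and other auxiliary loads into a power estimate is Power Usage Effectiveness (PUE), defined as total facility energy divided by IT equipment energy. The sketch below shows how PUE feeds into an annual energy bill for a TCO estimate. The function names, the 10 MW IT load, the PUE of 1.3, and the price of 100 per MWh are hypothetical inputs chosen for illustration, and the model assumes a constant load all year.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours, ignoring leap years


def facility_power_mw(it_load_mw, pue):
    """Total facility power, including cooling and other overhead.

    PUE = total facility energy / IT equipment energy, so it cannot be
    below 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be lower than 1.0")
    return it_load_mw * pue


def annual_energy_cost(it_load_mw, pue, price_per_mwh):
    """Yearly energy cost, assuming the load is constant all year."""
    energy_mwh = facility_power_mw(it_load_mw, pue) * HOURS_PER_YEAR
    return energy_mwh * price_per_mwh


# Hypothetical inputs: 10 MW of IT load, PUE of 1.3, price of 100 per MWh.
total_mw = facility_power_mw(it_load_mw=10.0, pue=1.3)
cost = annual_energy_cost(it_load_mw=10.0, pue=1.3, price_per_mwh=100.0)

print(f"Facility power: {total_mw:.1f} MW")
print(f"Annual energy cost: {cost:,.0f}")
```

The same IT load with a higher PUE needs a proportionally larger grid connection, which is the quantity a grid operator has to approve.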

Implications for On-Premise Deployment and Data Sovereignty

The Danish situation highlights a physical constraint that directly affects deployment strategies, both cloud and self-hosted. For companies considering on-premise deployment for reasons of data sovereignty, compliance, or control over their technology stack, the availability of electrical power becomes a critical limiting factor. Site selection for a local data center must now include a thorough analysis of local grid capacity and of the timelines for any necessary upgrades.

This scenario reinforces the importance of carefully weighing the agility offered by the cloud against the control and security of on-premise deployments. While the cloud can offer seemingly unlimited scalability, even large providers are bound by the infrastructure capacity of the regions in which they operate. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between CapEx, OpEx, energy consumption, and infrastructure requirements, providing guidance for informed decisions without direct recommendations.

Future Prospects and Strategic Planning

The pause imposed by Denmark is a wake-up call for the entire industry. As demand for AI compute capacity continues to grow rapidly, the availability of energy and the robustness of grid infrastructure will become increasingly critical factors. Nations and companies will need to invest heavily in new energy sources and grid upgrades to sustain this growth.

For technology decision-makers, this means that strategic planning for AI workloads cannot stop at LLM or GPU selection but must extend to a detailed analysis of the underlying physical infrastructure. A company's ability to implement and scale AI solutions will increasingly depend on its access to adequate energy resources and on how well it manages the associated costs and complexity. The long-term sustainability of AI expansion will require an approach that integrates technological innovation with infrastructure development.
