Gigabyte Showcases NVIDIA Vera Rubin Platforms and More at GTC 2026

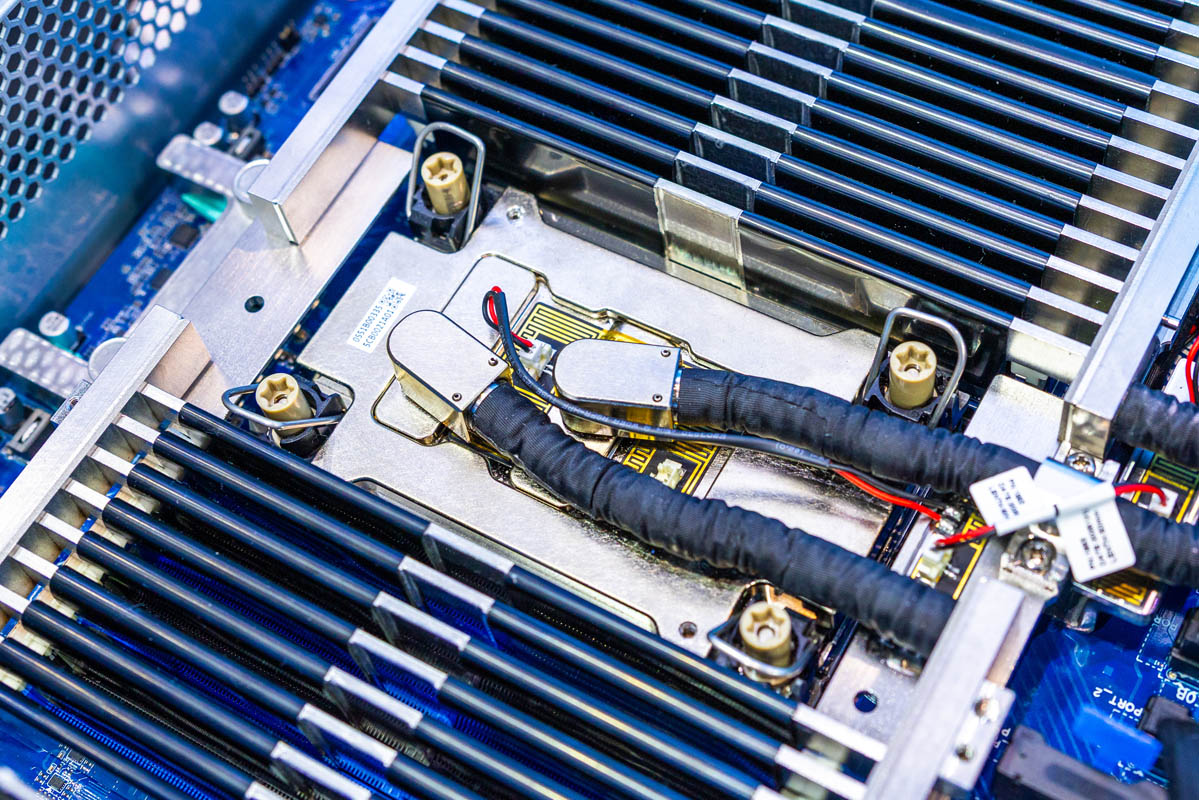

NVIDIA GTC 2026 continues to be a pivotal event for the artificial intelligence industry, a stage where key players unveil their latest innovations. Among them, Gigabyte drew attention with an exhibition of new platforms and components, most prominently systems built on the NVIDIA Vera Rubin architecture. The showcase underscores how quickly AI-dedicated hardware is evolving to keep pace with the most demanding workloads.

Gigabyte's presence at GTC 2026 is more than a product showcase: it signals where AI infrastructure is heading. The company, known for robust, high-performance server solutions, demonstrated how it intends to support the next generation of large language models (LLMs) and AI applications by supplying the hardware foundation they require.

NVIDIA Vera Rubin Platforms: A Leap Forward for AI

NVIDIA Vera Rubin platforms are the next generation of NVIDIA's GPU architectures, designed to push the limits of compute and memory. Although specific details are still being revealed, a new architecture suggests significant gains in throughput, VRAM capacity, and interconnect bandwidth, all critical factors for training and inference of increasingly complex LLMs.

As a key partner, Gigabyte integrates these technologies into its systems to offer complete solutions built for maximum performance. Such systems target enterprises that need infrastructure able to handle models with billions of parameters, shorten processing times, and improve energy efficiency; the goal is to deliver the compute required for the most challenging AI tasks.
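To make the link between parameter count and VRAM capacity concrete, here is a minimal back-of-the-envelope sketch, assuming that weight memory is roughly the number of parameters times the bytes per parameter. It ignores KV cache, activations, and optimizer state, so real requirements are higher. The function names, the 70-billion-parameter model, the 80 GB per-GPU figure, and the 80% headroom factor are hypothetical illustrations, not Vera Rubin or Gigabyte specifications.

```python
import math

# Approximate bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus_for_weights(num_params: float, dtype: str,
                         gpu_mem_gb: float, headroom: float = 0.8) -> int:
    """Smallest GPU count whose usable memory (gpu_mem_gb * headroom) holds the weights.

    gpu_mem_gb and headroom are hypothetical inputs; headroom reserves part of
    each GPU's memory for KV cache, activations, and runtime overhead.
    """
    usable_per_gpu = gpu_mem_gb * headroom
    return math.ceil(weight_memory_gb(num_params, dtype) / usable_per_gpu)


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    for dtype in ("bf16", "fp8", "int4"):
        gb = weight_memory_gb(params, dtype)
        # hypothetical 80 GB GPU, not a Vera Rubin figure
        gpus = min_gpus_for_weights(params, dtype, gpu_mem_gb=80.0)
        print(f"{dtype}: ~{gb:.0f} GB of weights, at least {gpus} GPU(s)")
```

Halving the precision halves the weight footprint, which is why more VRAM per GPU (or more GPUs linked by a fast interconnect) matters as models grow.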

Context and Implications for On-Premise Deployment

The emergence of platforms such as those based on NVIDIA Vera Rubin directly affects on-premise deployment strategies. Access to next-generation hardware lets businesses run intensive AI workloads within their own data centers, giving them greater control over their data and helping them meet stringent sovereignty and compliance requirements. This approach is particularly relevant for sectors such as finance, healthcare, and public administration, where data security and data location are paramount.

Choosing between on-premise deployment and cloud-based solutions requires a careful evaluation of Total Cost of Ownership (TCO). The upfront investment in cutting-edge hardware (CapEx) can be significant, but over the long term, running inference and training for large-scale models on owned hardware can prove cheaper than paying for cloud capacity on a consumption basis (OpEx). For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between cost, performance, and control, helping organizations make informed decisions.
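The CapEx-versus-OpEx trade-off can be reduced to a simple break-even calculation. The sketch below assumes a flat monthly on-premise operating cost and a flat monthly cloud bill, and it ignores depreciation, utilization, financing, and staffing. The function names and all dollar figures are hypothetical placeholders, not data from the article or from AI-RADAR.

```python
import math
from typing import Optional, Tuple


def cumulative_costs(capex: float, onprem_monthly_opex: float,
                     cloud_monthly_cost: float, months: int) -> Tuple[float, float]:
    """Return (on-prem total, cloud total) after `months` under flat monthly costs."""
    onprem_total = capex + onprem_monthly_opex * months
    cloud_total = cloud_monthly_cost * months
    return onprem_total, cloud_total


def break_even_months(capex: float, onprem_monthly_opex: float,
                      cloud_monthly_cost: float) -> Optional[int]:
    """First whole month at which cumulative on-prem cost <= cumulative cloud cost.

    On-prem catches up when capex + m * onprem <= m * cloud, i.e. when
    m >= capex / (cloud - onprem). Returns None if the cloud is not more
    expensive per month, since on-prem would then never break even.
    """
    monthly_savings = cloud_monthly_cost - onprem_monthly_opex
    if monthly_savings <= 0:
        return None
    return math.ceil(capex / monthly_savings)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    capex = 1_200_000        # upfront hardware purchase
    onprem_opex = 20_000     # power, cooling, maintenance per month
    cloud_cost = 70_000      # equivalent cloud GPU capacity per month
    month = break_even_months(capex, onprem_opex, cloud_cost)
    print(f"break-even month: {month}")
    print(cumulative_costs(capex, onprem_opex, cloud_cost, month))
```

With these placeholder inputs the upfront hardware spend is recovered after 24 months; if the monthly cloud bill were lower than on-premise running costs, the function would return None because on-premise never breaks even.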

Future Prospects for AI Infrastructure

The innovation presented by Gigabyte and NVIDIA at GTC 2026 highlights a clear direction: hardware continues to be the fundamental driver for the advancement of artificial intelligence. With the increasing complexity of Large Language Models and the growing demand for computing capacity, the availability of high-performance and scalable platforms is more critical than ever. Companies that invest in cutting-edge infrastructure will be better positioned to fully leverage the potential of AI, accelerating innovation and maintaining a competitive edge.

These developments not only enable new AI-powered applications and services but also make self-hosting strategies and air-gapped environments more feasible, giving organizations the flexibility to build bespoke AI infrastructure. The future of AI is closely tied to the ability of hardware to evolve, and NVIDIA Vera Rubin platforms integrated into Gigabyte systems are a prime example of this progression.
