Google and the Expansion of TPU Servers

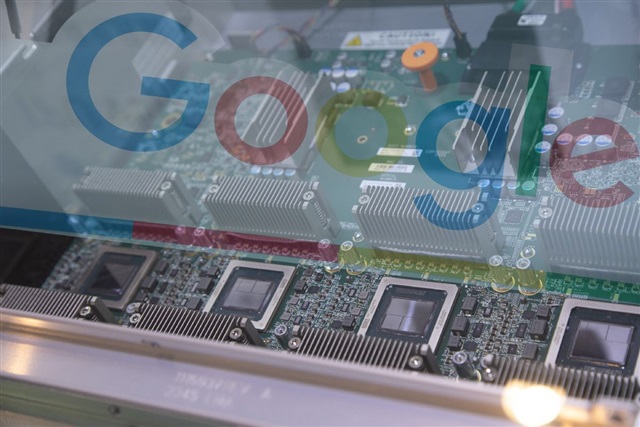

According to a DIGITIMES report, Google is significantly expanding its deployment of servers built on its Tensor Processing Units (TPUs). The move reflects the company's strategy of boosting its computing capacity for artificial intelligence and large language model (LLM) workloads, and it has direct repercussions for the global supply chain.

Taiwanese suppliers in particular are gaining market share, benefiting directly from higher demand for the components and assemblies these servers require. The trend underscores Taiwan's central role in the AI hardware manufacturing ecosystem and its ability to meet the needs of the largest technology companies, reinforcing its position as a key hub for silicon innovation and production.

The Strategic Role of TPUs in AI

Tensor Processing Units (TPUs) are hardware accelerators designed by Google specifically to speed up machine learning. GPUs are comparatively general-purpose parallel processors; TPUs, by contrast, are application-specific integrated circuits (ASICs) built around the linear algebra operations, above all large matrix multiplications, that dominate the training and inference of neural networks. This targeted design lets TPUs deliver high performance and energy efficiency on the workloads they are built for.
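A small example makes "linear algebra operations" more concrete. The pure-Python sketch below runs on an ordinary CPU, not a TPU, and its function names (`matmul`, `dense_forward`) are hypothetical. It shows that a fully connected neural-network layer reduces to a matrix multiplication followed by a bias add and an activation. Each pass through the innermost loop is one multiply-accumulate, and multiplying an (n × k) matrix by a (k × m) matrix takes n·k·m of them. That dense, regular arithmetic is the kind of work ASICs such as TPUs are designed to execute in parallel hardware.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Naive matrix multiply: (n x k) @ (k x m) -> (n x m)."""
    n, k, m = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions must match")
    out = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-accumulate (MAC)
            out[i][j] = acc
    return out


def dense_forward(x: Matrix, w: Matrix, bias: List[float]) -> Matrix:
    """Hypothetical dense layer: ReLU(x @ w + bias), one row per input."""
    z = matmul(x, w)
    return [[max(0.0, v + b) for v, b in zip(row, bias)] for row in z]


if __name__ == "__main__":
    # A batch of 2 inputs with 3 features each, mapped to 2 outputs.
    x = [[1.0, 2.0, 3.0],
         [0.5, -1.0, 2.0]]
    w = [[0.1, -0.2],
         [0.3, 0.4],
         [-0.5, 0.6]]
    bias = [0.0, 0.1]
    print(dense_forward(x, w, bias))
```

Real frameworks never use a triple loop like this; they dispatch the same operation to optimized kernels running on the accelerator, which is where a specialized chip earns its efficiency.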

Google initially developed TPUs for internal use, powering services such as Google Search, Google Photos, and Google Translate. It later made TPU capacity available through Google Cloud, giving customers access to this specialized infrastructure for their own AI projects. Continued investment in this proprietary technology shows how much strategic weight Google places on controlling and optimizing its own AI hardware stack, with the aim of maximizing efficiency and reducing total cost of ownership over the long term.

Implications for the Supply Chain and Hardware Market

Google's accelerated rollout of TPU servers has a significant impact on the global AI hardware supply chain. Higher demand translates into larger orders for manufacturers of chips, motherboards, cooling systems, and other essential components. The market-share gains of Taiwanese suppliers show how the investment decisions of a single key player like Google can shift the balance among suppliers and affect component availability worldwide.

This scenario reflects a broader trend in the industry, where the race for AI is driving companies to invest heavily in dedicated infrastructure. The availability and reliability of the supply chain become critical factors in sustaining this growth, influencing delivery times and costs for all market players, from large hyperscalers to enterprises seeking to build their own AI capabilities. The ability to ensure timely deliveries and adequate volumes is now a competitive differentiator.

Perspectives for On-Premise Deployments

Google's strategy of investing in proprietary, optimized AI hardware offers useful lessons for organizations evaluating on-premise deployments of LLMs and other AI workloads. Although Google operates at hyperscale, the underlying principle (pursuing control, efficiency, and optimization through dedicated hardware) also applies to companies that want to keep their data and models within their own infrastructure to ensure data sovereignty and regulatory compliance.

For those weighing self-hosted alternatives against cloud services, analyzing total cost of ownership (TCO) and sizing concrete hardware specifications such as VRAM and throughput are essential. The choice between off-the-shelf GPUs and more specialized accelerators depends on factors such as data volume, latency requirements, and data sovereignty needs. AI-RADAR offers analytical frameworks on /llm-onpremise to help companies evaluate these trade-offs and make informed decisions about on-premise deployments.
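A first-pass sizing exercise often starts with two pieces of arithmetic. The first is the memory needed just to hold a model's weights, which is the parameter count times the bytes per parameter. The second is a comparison of amortized ownership cost against renting equivalent capacity by the hour. The Python sketch below is illustrative only. The function and class names are hypothetical, and every price, power figure, and utilization value is a placeholder assumption rather than a market figure. It also ignores KV-cache and activation memory, cooling overhead, staffing, networking, and financing.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 365 * 24


def weight_vram_gb(params_billions: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights alone, in decimal GB.

    Billions of parameters times bytes per parameter gives gigabytes directly.
    KV-cache, activations, and runtime overhead are NOT included, so a real
    deployment needs headroom above this figure.
    """
    return params_billions * bytes_per_param


@dataclass
class OnPremAssumptions:
    # Hypothetical placeholder inputs; replace with real quotes.
    hardware_cost: float        # up-front server purchase price
    amortization_years: float   # period over which the purchase is spread
    power_kw: float             # average electrical draw of the server
    electricity_per_kwh: float  # energy price per kWh
    annual_opex: float          # hosting, maintenance, support per year


def onprem_cost_per_hour(a: OnPremAssumptions) -> float:
    """Amortized hourly cost of owned hardware running around the clock."""
    capex = a.hardware_cost / (a.amortization_years * HOURS_PER_YEAR)
    energy = a.power_kw * a.electricity_per_kwh
    opex = a.annual_opex / HOURS_PER_YEAR
    return capex + energy + opex


def breakeven_utilization(onprem_hourly: float, cloud_hourly: float) -> float:
    """Share of hours a cloud instance must run before owning costs the same.

    Owned hardware costs money every hour; a cloud instance only while it runs.
    A result above 1.0 means renting stays cheaper even at 100% utilization.
    """
    return onprem_hourly / cloud_hourly


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored at 16-bit precision (2 bytes/param).
    print(f"weights: {weight_vram_gb(70, 2):.0f} GB")  # prints "weights: 140 GB"

    server = OnPremAssumptions(
        hardware_cost=250_000,
        amortization_years=4,
        power_kw=6.0,
        electricity_per_kwh=0.20,
        annual_opex=20_000,
    )
    hourly = onprem_cost_per_hour(server)
    share = breakeven_utilization(hourly, cloud_hourly=30.0)
    print(f"on-prem: {hourly:.2f}/h, break-even utilization: {share:.0%}")
```

The break-even framing reflects the basic asymmetry in the trade-off. Owned hardware is a fixed cost whether or not it is busy, so self-hosting tends to pay off only when expected utilization stays above the computed share.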
