DIY Innovation in the Data Center

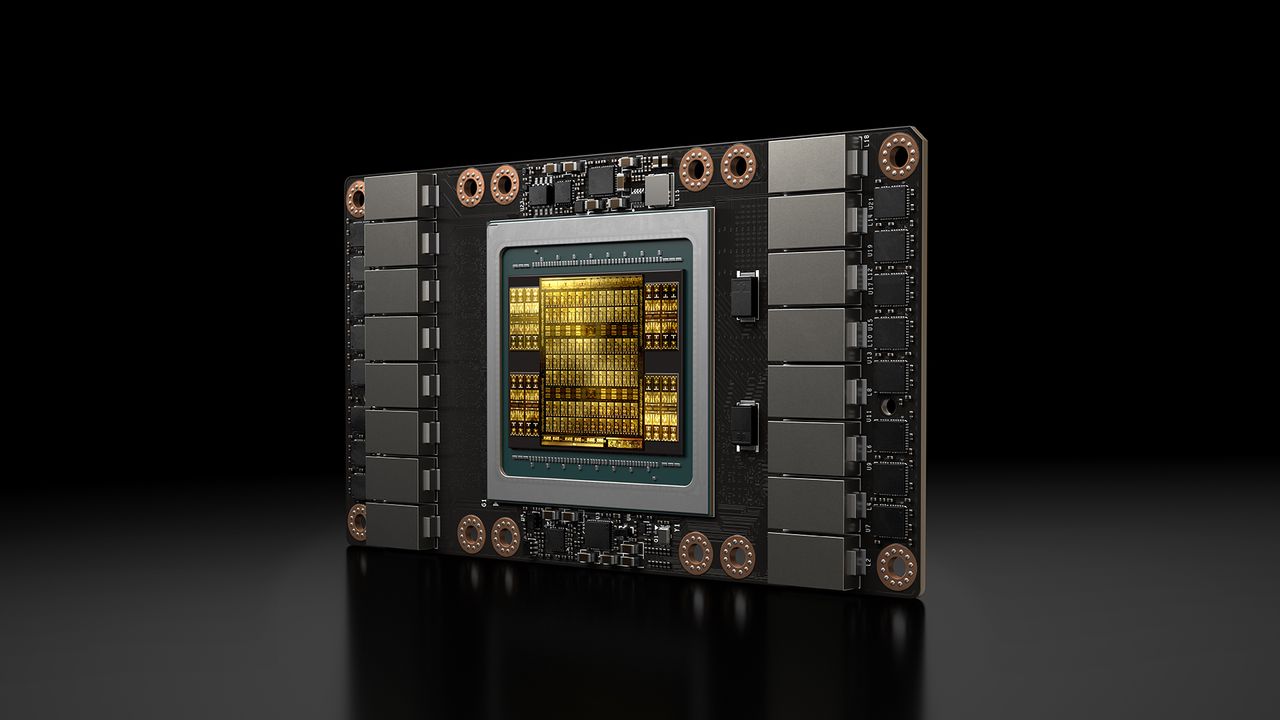

In the artificial intelligence landscape, where hardware costs can be prohibitive, ingenious solutions emerge that challenge the status quo. A recent project has captured the attention of the tech community by showing how an Nvidia Tesla V100 SXM GPU, based on the GV100 chip, can be repurposed for use in PCIe servers with a surprisingly low initial investment, estimated at around $200 for the GPU alone.

This initiative highlights a growing trend: the search for cost-effective alternatives for Large Language Model (LLM) inference in self-hosted environments. For CTOs and infrastructure architects, running previous-generation hardware with targeted modifications is an opportunity to optimize Total Cost of Ownership (TCO) and maintain control over their data.

Technical Details and Hardware Implications

The Nvidia Tesla V100 GPU, powered by the GV100 processor, was a cornerstone of accelerated computing in data centers. It was originally designed for proprietary form factors like SXM, which require specific server infrastructure, so re-engineering it into a standard PCIe card is a non-trivial technical feat. The project relies on a custom PCB (Printed Circuit Board) and a 3D-printed cooling system, both crucial for adapting the GPU to a more common form factor and managing its heat dissipation.

Although it is not the latest generation of silicon, the modified V100 has proven capable of running LLM inference workloads more efficiently than many newer midrange offerings. This result is significant for anyone looking to maximize the value of existing hardware, or to acquire components at reduced cost for experimenting with or deploying AI solutions on-premise.

The Context of On-Premise Deployment and TCO

The relevance of such a project for on-premise deployments is clear. Organizations prioritizing data sovereignty, regulatory compliance, or operating in air-gapped environments often face high costs for purchasing and managing cutting-edge AI hardware. Solutions like this offer an alternative path to building local infrastructures, reducing dependence on external cloud services and keeping data within their own perimeter.

However, it is essential to consider the trade-offs. While the initial cost of approximately $200 for the GPU is extremely competitive, the creation of a custom PCB and cooling system requires specific engineering skills and time. For those evaluating on-premise deployments, analytical frameworks on /llm-onpremise can help weigh these aspects, comparing initial costs (CapEx) with operational costs (OpEx) and the management complexity of custom solutions versus commercial ones.
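The CapEx versus OpEx comparison described above can be sketched as a small calculation. This is only an illustrative model, not a framework from the project or from /llm-onpremise: the `GpuOption` fields, the `tco` function, and every figure except the roughly $200 GPU price are hypothetical placeholders that should be replaced with measured values.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class GpuOption:
    """One hardware option in the comparison (all fields are assumptions)."""
    name: str
    hardware_cost: float     # CapEx: purchase price in USD
    integration_cost: float  # CapEx: custom PCB, cooling, engineering time in USD
    power_watts: float       # average power draw under load
    upkeep_per_year: float   # OpEx: maintenance, spares, support in USD


def tco(option: GpuOption, years: float, usd_per_kwh: float,
        utilization: float = 1.0) -> float:
    """Return total cost of ownership in USD over the given period."""
    capex = option.hardware_cost + option.integration_cost
    energy_kwh = (option.power_watts / 1000) * HOURS_PER_YEAR * utilization * years
    opex = energy_kwh * usd_per_kwh + option.upkeep_per_year * years
    return capex + opex


if __name__ == "__main__":
    # Only the $200 GPU price comes from the article; the rest is hypothetical.
    modified_v100 = GpuOption("modified V100", 200, 300, 300, 100)
    commercial_card = GpuOption("commercial card", 1500, 0, 200, 0)
    for option in (modified_v100, commercial_card):
        total = tco(option, years=3, usd_per_kwh=0.15, utilization=0.5)
        print(f"{option.name}: ${total:,.2f} over 3 years")
```

Keeping the integration cost and yearly upkeep as separate inputs makes the hidden costs of a custom build explicit, so a low purchase price does not dominate the comparison by default.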

Future Prospects and Considerations for CTOs

This example of 'DIY' engineering underscores the vibrancy and innovation that characterize the AI sector. For CTOs and technical decision-makers, it serves as a reminder that resource optimization and creativity can unlock new possibilities for implementing AI workloads. While modified solutions may present challenges in terms of support, scalability, and long-term reliability compared to standard enterprise products, their existence stimulates debate on how to make AI more accessible and controllable.

The pursuit of a balance between performance, cost, and control remains a priority for companies looking to integrate AI into their operations, especially when data sovereignty and TCO management are critical factors. Projects like the modified V100 demonstrate that the path to efficient, self-hosted AI infrastructure is paved with both conventional and unconventional innovations.
