Helion, a high-level DSL (domain-specific language) designed to simplify writing high-performance machine learning kernels, has introduced a new approach to speeding up autotuning. Autotuning is essential for getting the best kernel performance on specific hardware, but it can take a long time and often becomes a bottleneck in development.

Bayesian Optimization for Autotuning

The new algorithm, called LFBO (Likelihood-Free Bayesian Optimization) Pattern Search, builds on Bayesian optimization, a machine learning technique that uses probabilistic models to decide intelligently which points to evaluate. Instead of exhaustively exploring every possible configuration, LFBO trains a classification model (a RandomForest) on the latency data collected during the search. The model predicts whether a configuration falls within the top 10% by latency, which makes it possible to filter out the less promising candidates.
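A minimal sketch of the filtering step described above, under explicit assumptions: configurations are plain numeric tuples, "good" means roughly the fastest 10% of the configurations benchmarked so far, and a tiny k-nearest-neighbours classifier stands in for the RandomForest so the example has no dependencies. All names (`label_top_fraction`, `KNNClassifier`, `filter_candidates`) are hypothetical and do not come from Helion.

```python
import math

# Hypothetical encoding: each config is a tuple of numbers,
# e.g. (block_m, block_n, num_warps). Not Helion's internal format.


def label_top_fraction(latencies, fraction=0.10):
    """Label 1 for configs whose latency is roughly in the best `fraction`, else 0."""
    ranked = sorted(latencies)
    # Number of "good" configs; always label at least one.
    k = max(1, int(fraction * len(ranked)))
    threshold = ranked[k - 1]
    return [1 if lat <= threshold else 0 for lat in latencies]


class KNNClassifier:
    """Tiny k-nearest-neighbours classifier, a stand-in for LFBO's RandomForest."""

    def __init__(self, k=3):
        self.k = k
        self.X = []
        self.y = []

    def fit(self, X, y):
        self.X, self.y = list(X), list(y)
        return self

    def prob_good(self, x):
        # Share of the k closest observed configs that were labeled "good".
        # Real knobs have very different scales; a real model would normalise them.
        order = sorted(range(len(self.X)), key=lambda i: math.dist(self.X[i], x))
        nearest = order[: self.k]
        return sum(self.y[i] for i in nearest) / len(nearest)


def filter_candidates(observed, latencies, candidates, keep):
    """Fit on benchmarked configs, then keep the `keep` candidates most likely to be good."""
    clf = KNNClassifier(k=3).fit(observed, label_top_fraction(latencies))
    ranked = sorted(candidates, key=clf.prob_good, reverse=True)
    return ranked[:keep]
```

In a loop of this kind, only the surviving candidates would be compiled and benchmarked; their latencies are added to the training data and the classifier is refit before the next round.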

Results and Benefits

Adopting LFBO brought significant improvements:

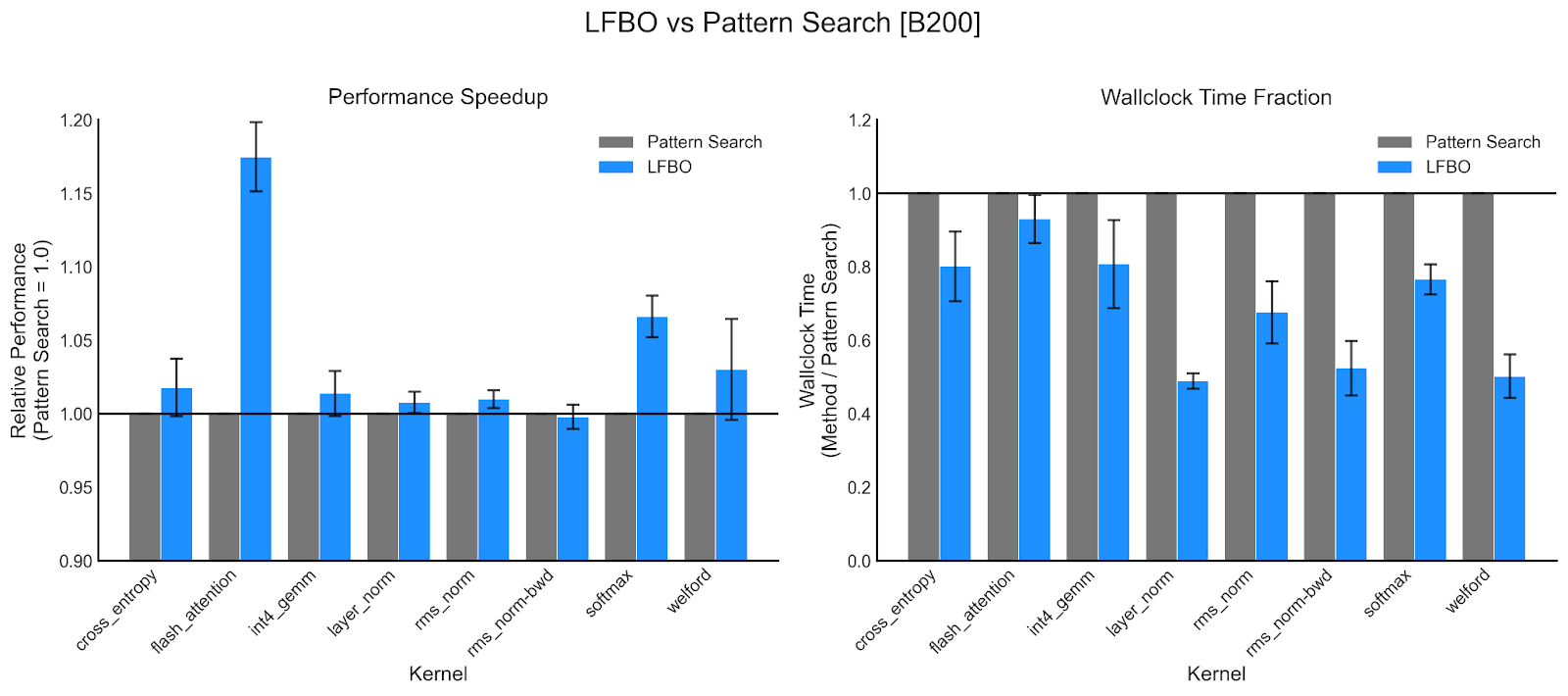

- On NVIDIA B200 GPUs, autotuning time dropped by 36.5% and kernel latency improved by 2.6% on average.

- On AMD MI350 GPUs, autotuning time dropped by 25.9% and kernel latency improved by 1.7%.
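The article does not say how these averages were aggregated. As a rough guide, a relative reduction is (baseline - new) / baseline, so a 50% cut in autotuning time means tuning finishes in half the time. The sketch below assumes a plain arithmetic mean over per-kernel results, which is only one possible choice (a geometric mean of speedups is also common); the function names are hypothetical.

```python
def relative_reduction(baseline, new):
    """Percentage reduction of `new` relative to `baseline` (positive means better)."""
    return 100.0 * (baseline - new) / baseline


def mean_reduction(pairs):
    """Arithmetic mean of per-kernel reductions over (baseline, new) pairs.

    Assumption: this is one plausible way to average; the source does not specify.
    """
    values = [relative_reduction(b, n) for b, n in pairs]
    return sum(values) / len(values)
```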

In some cases the gains were larger: for layer-norm kernels on B200, the reduction in autotuning time reached 50%, while Helion FlashAttention kernels saw latency improvements of more than 15%.

Challenges of Kernel Autotuning

Kernel autotuning is a complex process for several reasons:

- High-dimensional search space: the number of possible parameter combinations (block sizes, unroll factors, and so on) is enormous.

- Long compilation times: some configurations can take a significant amount of time to compile.

- Configuration errors and timeouts: the search space can include configurations that fail to compile, time out, or produce inaccurate results.
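To make these challenges concrete, here is a small sketch under stated assumptions. The knobs in `SEARCH_SPACE` are hypothetical and only illustrate how quickly independent choices multiply; `safe_benchmark` is a hypothetical wrapper that scores failed, over-budget, or numerically wrong configurations as infinitely slow so a search can skip them. Its timeout is only checked after the call returns; a real tuner would run each candidate in a process it can kill.

```python
import math
import time

# Hypothetical tuning knobs and candidate values (not Helion's real space).
SEARCH_SPACE = {
    "block_m": [16, 32, 64, 128, 256],
    "block_n": [16, 32, 64, 128, 256],
    "block_k": [16, 32, 64, 128],
    "num_warps": [1, 2, 4, 8, 16],
    "num_stages": [1, 2, 3, 4, 5],
    "unroll": [1, 2, 4, 8],
}


def search_space_size(space):
    # Knobs are independent, so the total is the product of the option counts.
    return math.prod(len(values) for values in space.values())


def safe_benchmark(run, config, reference, budget_s, rtol=1e-3):
    """Return the latency from run(config), or +inf if the config is unusable.

    `run` is assumed to return (latency, output_values).
    """
    start = time.perf_counter()
    try:
        latency, output = run(config)
    except Exception:
        # Compilation or runtime error.
        return math.inf
    if time.perf_counter() - start > budget_s:
        # Soft timeout: measured after the call, not enforced during it.
        return math.inf
    # Reject configs whose output deviates from a trusted reference.
    if len(output) != len(reference) or any(
        abs(o - r) > rtol * max(1.0, abs(r)) for o, r in zip(output, reference)
    ):
        return math.inf
    return latency
```

Even these six modest knobs give 10,000 combinations, and real kernels often expose more, which is why exhaustive benchmarking quickly becomes impractical.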

LFBO Pattern Search addresses these challenges by exploring the search space more broadly while concentrating on the most promising configurations.
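The combination of broad exploration and focused benchmarking can be sketched as a greedy pattern search over integer knob indices. This is a minimal illustration of the general idea, not Helion's implementation: `radius` widens the neighbourhood that is considered, and a caller-supplied `score` function (standing in for the classifier) decides which few neighbours actually get benchmarked. All names here are hypothetical.

```python
def neighbors(config, bounds, radius=2):
    """Configs that differ from `config` by up to `radius` steps in a single knob."""
    out = []
    for i, (lo, hi) in enumerate(bounds):
        for step in range(-radius, radius + 1):
            value = config[i] + step
            if step != 0 and lo <= value <= hi:
                cand = list(config)
                cand[i] = value
                out.append(tuple(cand))
    return out


def pattern_search(start, bounds, benchmark, score, keep=4, radius=2, max_rounds=50):
    """Greedy pattern search that benchmarks only the `keep` best-scored neighbours.

    benchmark(config) -> latency (lower is better)
    score(config, history) -> surrogate estimate of how promising a config is
    """
    best = start
    best_lat = benchmark(start)
    history = {start: best_lat}
    for _ in range(max_rounds):
        # Wide exploration: consider every unseen neighbour within `radius`.
        cands = [c for c in neighbors(best, bounds, radius) if c not in history]
        # Focused exploitation: rank with the surrogate, benchmark only the top few.
        cands.sort(key=lambda c: score(c, history), reverse=True)
        improved = False
        for cand in cands[:keep]:
            lat = benchmark(cand)
            history[cand] = lat
            if lat < best_lat:
                best, best_lat, improved = cand, lat, True
        if not improved:
            break
    return best, best_lat, history
```

With a larger `radius`, more neighbours are considered, but the number of expensive benchmarks per round stays capped at `keep`; the quality of the result then depends on how well the surrogate ranks candidates.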

Conclusions

Machine learning, and Bayesian optimization in particular, proves effective at accelerating autotuning in Helion and improves the kernel development experience. The LFBO approach saves tuning time and finds faster configurations, and it opens the way to further improvements based on reinforcement learning and LLMs.
