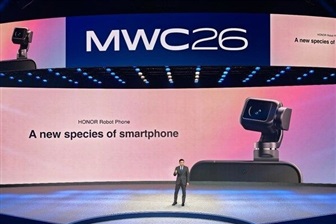

Honor Redefines AI Strategy: Focus on Humanoid Robotics and Edge Devices

Honor, the well-known technology manufacturer, is reorienting its artificial intelligence strategy around humanoid robotics and a revised approach to on-device AI. The move, reported by DIGITIMES, marks a significant shift in the company's technology direction, toward sectors that require tight integration between hardware and local AI processing.

The "AI device playbook," as it is called, is being rewritten, suggesting a departure from traditional architectures and an exploration of new frontiers for artificial intelligence. This implies a potential transition towards solutions that prioritize on-device or edge processing, with direct consequences for silicon design, model optimization, and deployment strategies.

On-Device AI and the Challenges of Humanoid Robotics

Integrating artificial intelligence into humanoid robotics and edge devices presents considerable technical challenges, but also unique opportunities. For humanoid robots, on-device AI is crucial to ensure real-time responses, operational autonomy, and fluid interactions with the surrounding environment. This requires efficient inference, often supported by specialized silicon and by LLMs optimized through techniques like quantization to run within limited VRAM and compute budgets.
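To make the link between quantization and VRAM concrete, here is a minimal back-of-the-envelope sketch. It counts weight storage only and ignores activations, the KV cache, and runtime overhead. The 7-billion-parameter model size is a hypothetical figure chosen for illustration, not something reported about Honor's products.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Hypothetical 7-billion-parameter model (an assumption for illustration).
params = 7e9
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):.1f} GiB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the weight footprint from roughly 13 GiB to a little over 3 GiB, which is the kind of reduction that can bring a model within reach of an edge device.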

Processing data locally reduces latency, improves privacy, and enables operation in air-gapped environments or with limited connectivity. However, it imposes stringent constraints on hardware, which must balance performance, energy consumption, and cost. The development of a revised "AI device playbook" suggests that Honor is addressing these complexities, seeking to define new architectures and frameworks to make AI more pervasive and autonomous on its products.

Implications for Deployment and TCO

The push towards on-device AI and humanoid robotics has profound implications for deployment decisions and the Total Cost of Ownership (TCO) for businesses. While traditional AI workloads often rely on the cloud for scalability and computing power, edge processing offers advantages in terms of data sovereignty, compliance, and reduced long-term operational costs, especially for applications that generate large volumes of sensitive data or require immediate responses.

For those evaluating self-hosted or edge infrastructure deployments, it is essential to consider the trade-offs between the initial investment (CapEx) in dedicated hardware, such as GPUs with adequate VRAM, and the operational costs (OpEx) of the cloud. Choosing an on-premise or hybrid approach can offer greater control and security, but requires careful infrastructure planning. AI-RADAR, for example, offers analytical frameworks on /llm-onpremise to help organizations evaluate these complex trade-offs.
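The CapEx versus OpEx comparison can be sketched as a simple break-even calculation. This is a simplified model that assumes flat monthly costs and ignores depreciation, financing, and changes in utilization. The function name and all figures are hypothetical and are not taken from AI-RADAR or DIGITIMES.

```python
import math
from typing import Optional


def break_even_months(capex: float,
                      onprem_monthly_opex: float,
                      cloud_monthly_cost: float) -> Optional[int]:
    """Months until cumulative on-premise cost drops to or below cloud cost.

    Returns None when on-premise never catches up, i.e. when its monthly
    running cost is not lower than the cloud bill.
    """
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        return None
    return math.ceil(capex / monthly_saving)


# Hypothetical figures: 60,000 upfront for hardware, 1,500/month to run it,
# versus a 4,000/month cloud bill for the same workload.
print(break_even_months(60_000, 1_500, 4_000))  # 24
```

With these illustrative numbers, the hardware pays for itself after 24 months. A workload whose cloud bill is lower than the on-premise running cost never breaks even, and the function returns `None`.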

Future Prospects and Strategic Trade-offs

Honor's decision to focus on humanoid robotics and redefine its on-device AI strategy reflects a broader trend in the technology sector towards distributed artificial intelligence. This approach aims to bring computational power closer to the data source and the end user, unlocking new applications and improving the overall experience. However, it also entails significant trade-offs.

Companies must balance the flexibility and scalability of the cloud with the privacy, latency, and TCO benefits offered by on-premise or edge solutions. The continuous evolution of silicon, with the introduction of increasingly powerful and efficient chips for AI inference, will play a key role in determining the success of these strategies. Honor's "playbook rewrite" is a signal that the future of AI will be increasingly diversified, with tailored solutions for specific deployment and performance needs.
