The New AI Inference Landscape

The AI inference landscape is shifting as market dynamics reshape the supply chains for critical hardware components. Growing demand for artificial intelligence capabilities, particularly for inference workloads, is driving this change across industrial sectors. As a result, hardware choices have become strategically important for organizations that want to deploy robust and efficient AI solutions.

The market has traditionally been dominated by a few established players, but recent supply chain tensions are opening the door to new entrants. The ability to adapt to these conditions and secure silicon supply has become a key competitive factor, both for suppliers and for the companies that depend on their technologies.

Technical Details and Market Context

AI inference, the process of running a trained artificial intelligence model to generate predictions or responses, is a core component of many enterprise applications, from computer vision to natural language processing. GPUs are often the preferred choice because of their parallel computing capabilities, but CPUs continue to play a significant role, especially for less intensive workloads, for running quantized LLMs, and in some latency-sensitive scenarios.

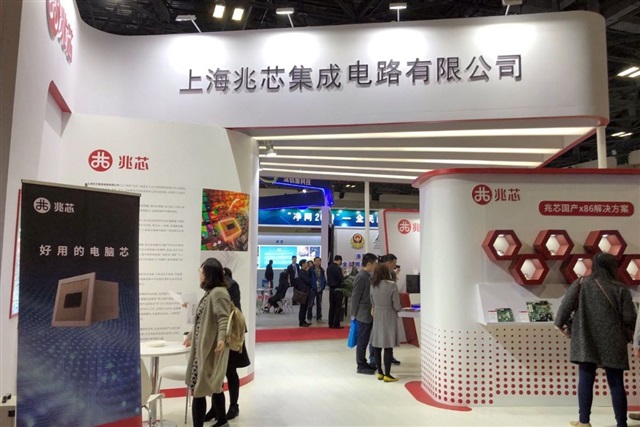

Current difficulties in chip supply chains, particularly those involving incumbent suppliers such as Intel and AMD, are creating a gap that other players are actively trying to fill. Chinese CPU vendors such as Shanghai Zhaoxin Semiconductor are seizing the opportunity to expand their market share, offering alternatives that can reduce the risk of relying on a small number of sources.

Implications for On-Premise Deployments

For organizations prioritizing on-premise deployments, the availability and diversity of hardware vendors are decisive factors. Self-hosted solutions are often chosen to ensure data sovereignty, comply with stringent regulatory requirements, and maintain direct control over infrastructure. When supply from traditional vendors tightens, new suppliers give these organizations additional options for maintaining operational continuity and strategic flexibility.

These alternatives can significantly affect both Total Cost of Ownership (TCO) and supply chain resilience. Evaluating hardware from new players requires a thorough analysis of performance, software support, compatibility with existing stacks, and the long-term technology roadmap. The ability to integrate diverse hardware architectures therefore becomes a competitive advantage for companies operating in complex environments with specific constraints.
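
One way to make the TCO comparison concrete is to reduce each candidate system to a cost per million generated tokens. The sketch below is a simplified illustration, not a complete TCO model: the function names, the cost categories, and all figures in the example are hypothetical assumptions rather than data from any vendor.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def total_cost_of_ownership(hardware_cost, annual_energy_kwh, price_per_kwh,
                            annual_support_cost, years):
    """Simplified TCO: purchase price plus recurring energy and support costs.

    Assumption: ignores staffing, facilities, financing, and resale value.
    """
    annual_running_cost = annual_energy_kwh * price_per_kwh + annual_support_cost
    return hardware_cost + years * annual_running_cost


def cost_per_million_tokens(total_cost, tokens_per_second, utilization, years):
    """Spread the TCO over the tokens generated during the system's lifetime.

    utilization is the fraction of time (0..1) the system is actually serving.
    """
    lifetime_tokens = tokens_per_second * utilization * years * SECONDS_PER_YEAR
    return total_cost / lifetime_tokens * 1_000_000


# Hypothetical figures for a single server, for illustration only.
example_tco = total_cost_of_ownership(
    hardware_cost=10_000, annual_energy_kwh=2_000, price_per_kwh=0.20,
    annual_support_cost=500, years=3)
example_unit_cost = cost_per_million_tokens(
    example_tco, tokens_per_second=20, utilization=0.5, years=3)
```

Running the same calculation for each candidate platform, with measured rather than assumed throughput, gives a common basis for comparing an incumbent system against one from a new supplier.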

Future Prospects and Strategic Trade-offs

The current dynamics of the silicon market point to increasing fragmentation and greater complexity in infrastructure planning. Companies must carefully weigh performance, cost, availability, and long-term support when selecting hardware for their AI workloads. Relevant factors include available memory (VRAM on GPUs, system RAM on CPUs), throughput in tokens per second, and latency, all of which determine inference efficiency.
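
The relationship between memory and throughput can be estimated with simple arithmetic before any benchmark is run. The sketch below is a rough approximation that rests on two stated assumptions: memory is dominated by model weights (the KV cache and activations are ignored), and single-stream decoding is memory-bandwidth bound, so every generated token requires reading all weights once. The function names and the example figures are hypothetical.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def decode_tokens_per_second_ceiling(weight_gb, memory_bandwidth_gb_s):
    """Rough upper bound on single-stream decode speed.

    Assumption: each token reads every weight once, so throughput cannot
    exceed memory bandwidth divided by the size of the weights.
    """
    return memory_bandwidth_gb_s / weight_gb


# Hypothetical example: a 7-billion-parameter model quantized to 4 bits,
# on a system with 100 GB/s of memory bandwidth.
weights_gb = weight_memory_gb(7e9, 4)
ceiling_tps = decode_tokens_per_second_ceiling(weights_gb, 100.0)
```

Real systems usually fall below this ceiling, so the estimate is best used to rule out hardware that cannot meet a target, not to predict delivered performance.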

Opening up to new suppliers can bring risk diversification and access to emerging technologies, but it also requires careful due diligence. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to support infrastructure decisions, with an emphasis on analyzing constraints and opportunities in depth. The ability to navigate this complex scenario will be fundamental to the success of enterprise AI strategies.
