Welcome to another edition of the AI-Radar Editorial. Today, we want to talk about the unglamorous, highly mathematical, and indeed crucial plumbing of the artificial intelligence revolution: Large Language Model (LLM) quantization.

As Tim Dettmers aptly put it, “Quantization research is like printers. Nobody cares about printers. Nobody likes printers. But everybody is happy if printers do their job”. Yet, in an industry obsessed with parameter counts that look like national GDPs, quantization is the only reason the whole house of cards hasn't collapsed under the sheer physical weight of its own hardware requirements. We are pushing models with hundreds of billions of parameters, but the physical reality of silicon and memory bandwidth refuses to bend to software hype.

So, what do we do? We squish the math. We take the high-precision "brains" of these models and compress them. We call it quantization, but let’s be honest—it’s advanced rounding. Here is the unvarnished history, the chaotic current situation, the undeniable pros, the hidden cons, and a lingering, uncomfortable question about the commercial realities driving it all.

The History: From Floating Points to Sinking Expectations

To understand where we are, we must look at how we got here. The conceptual lineage of quantization is rooted in mid-20th-century digital signal processing. In the early days of deep learning, 32-bit floating-point (FP32) was the unquestioned gold standard. We needed 32 bits—one sign bit, eight exponent bits, and 23 mantissa bits—to represent each weight to maintain the delicate gradients required for backpropagation.

But as the Transformer architecture triggered the "parameter explosion," FP32 became a severe liability. At four bytes per weight, a 7-billion-parameter model in FP32 demands 28 GB of memory for the weights alone; a 70B model demands about 280 GB. The industry’s first compromise was migrating to 16-bit formats (FP16 and Google’s BFloat16), which immediately halved memory footprints with a negligible loss in accuracy.
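The arithmetic behind those figures is easy to reproduce. The sketch below counts only the raw weights, assuming decimal gigabytes and ignoring activations, the KV cache, and the small per-block metadata that real quantized formats carry; the function name is our own.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

# Same model sizes as in the text, across common storage formats.
for name, params in [("7B", 7e9), ("70B", 70e9)]:
    for fmt, bits in [("FP32", 32), ("FP16/BF16", 16), ("INT8", 8), ("INT4", 4)]:
        print(f"{name:>3} {fmt:>9}: {weight_memory_gb(params, bits):6.1f} GB")
```

Every halving of the bit width halves the footprint, which is why the jump from 32 bits to 4 bits matters far more than any single optimization on the serving side.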

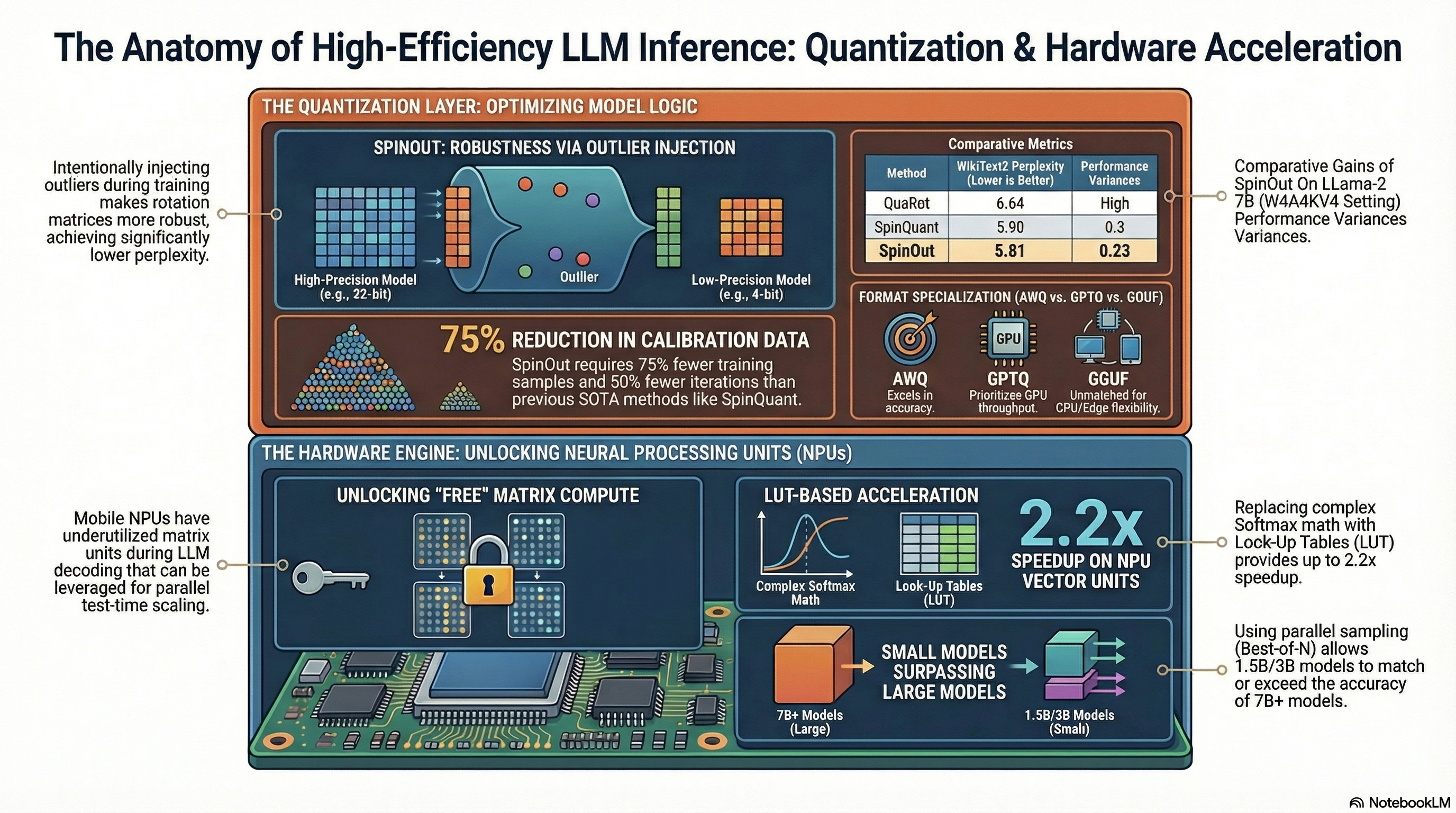

However, even 16-bit was too fat for deployment at scale. The watershed moment arrived in 2022 with GPTQ, a post-training quantization method for Generative Pre-trained Transformers that proved 4-bit weights were actually feasible. Suddenly, the race was on. In 2023, methods like Activation-aware Weight Quantization (AWQ) and SmoothQuant revealed that the difficulty in compression wasn't the weights, but the "outlier" activations: extreme values in specific channels that broke naive rounding schemes. By rescaling the affected channels, researchers managed to squeeze models into 4-bit and 8-bit formats without giving the AI a digital lobotomy.
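To see why a single outlier is so destructive, consider a toy version of the problem. This is a minimal sketch, not the actual AWQ or SmoothQuant algorithm: the activation values and the hand-picked smoothing factor of 75 are assumptions for illustration, whereas the real methods derive per-channel factors from calibration data and fold them into the weights.

```python
def quantize_absmax(values, bits=4):
    """Symmetric round-to-nearest quantization; returns dequantized floats."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for signed 4-bit
    scale = max(abs(v) for v in values) / qmax    # one scale for the whole vector
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]

def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

# Hypothetical activations: the fourth channel carries an extreme outlier.
activations = [0.5, -0.3, 0.8, 60.0, 0.1, -0.7]
# Hypothetical per-channel smoothing factors (hand-picked for this example).
smoothing = [1.0, 1.0, 1.0, 75.0, 1.0, 1.0]

# Naive: the outlier sets the scale, so every small value rounds to zero.
naive = quantize_absmax(activations)

# Smoothed: shrink the outlier channel first, quantize, then undo the factor.
smoothed = [a / s for a, s in zip(activations, smoothing)]
restored = [q * s for q, s in zip(quantize_absmax(smoothed), smoothing)]

naive_error = mean_abs_error(activations, naive)
smoothed_error = mean_abs_error(activations, restored)
print("naive   :", [round(v, 3) for v in naive], "error", round(naive_error, 4))
print("smoothed:", [round(v, 3) for v in restored], "error", round(smoothed_error, 4))
```

In the naive case the ordinary channels are wiped out entirely, which is the "digital lobotomy" in miniature. Taming the one bad channel before rounding lets the remaining 4-bit levels be spent on the values that actually carry information.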

The Current Situation: The 4-Bit Wild West

Today, 4-bit precision is the default deployment recipe. We have transitioned from experimental compression to an era of hardware-software co-design.

The current landscape is divided by competing algorithmic paradigms and fragmented formats. For local inference on CPUs and Apple Silicon (which boasts a massive Unified Memory Architecture advantage, offering up to 546 GB/s bandwidth on the M4 Max), the GGUF format reigns supreme. GGUF employs "K-Quants," dividing weights into super-blocks and utilizing double quantization to squeeze out every drop of efficiency. Meanwhile, for NVIDIA GPUs, formats like GPTQ, AWQ, and EXL2 dominate, optimizing for high-throughput CUDA operations.
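The super-block idea can be sketched in a few lines. What follows is a simplified, symmetric illustration and not the byte-level layout of any real GGUF type: the block size of 32, the 6-bit block scales, and the 16-bit super-block scale are assumptions chosen to show the mechanism, and all function names are our own.

```python
import random

def quantize_superblock(weights, block_size=32, weight_bits=4, scale_bits=6):
    """Block-wise 4-bit quantization whose block scales are themselves quantized."""
    qmax = 2 ** (weight_bits - 1) - 1      # 7 for signed 4-bit weights
    smax = 2 ** scale_bits - 1             # 63 for unsigned 6-bit scales
    blocks = [weights[i:i + block_size] for i in range(0, len(weights), block_size)]
    # First level: one float scale per block (guarded against all-zero blocks).
    scales = [max(max(abs(w) for w in b) / qmax, 1e-12) for b in blocks]
    # Second level ("double quantization"): store block scales as small integers
    # relative to a single scale shared by the whole super-block.
    super_scale = max(scales) / smax
    q_scales = [max(1, round(s / super_scale)) for s in scales]
    q_weights = [
        [max(-qmax, min(qmax, round(w / (qs * super_scale)))) for w in b]
        for b, qs in zip(blocks, q_scales)
    ]
    return q_weights, q_scales, super_scale

def dequantize_superblock(q_weights, q_scales, super_scale):
    return [q * qs * super_scale for b, qs in zip(q_weights, q_scales) for q in b]

def bits_per_weight(n_weights, block_size=32, weight_bits=4,
                    scale_bits=6, super_scale_bits=16):
    """Effective storage cost once the scale metadata is included."""
    n_blocks = n_weights // block_size
    total = n_weights * weight_bits + n_blocks * scale_bits + super_scale_bits
    return total / n_weights

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]   # one 256-weight super-block
q_weights, q_scales, super_scale = quantize_superblock(weights)
restored = dequantize_superblock(q_weights, q_scales, super_scale)
mean_error = sum(abs(a - b) for a, b in zip(weights, restored)) / len(weights)
print("effective bits per weight:", bits_per_weight(256))
print("mean absolute error      :", mean_error)
```

The point of the second level is bookkeeping: storing a full 16-bit scale for every 32 weights would add half a bit per weight, while 6-bit scales plus one shared 16-bit scale add only a quarter of a bit in this toy layout.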

At the enterprise level, the battle lines are drawn between Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT). PTQ is the fast, cheap option: you take a fully trained model, run a small calibration dataset through it, and quantize the weights. The downside? Accuracy loss. When PTQ fails—especially at aggressive 2-bit or 3-bit depths—engineers deploy QAT. QAT bakes fake quantization nodes directly into the training loop, forcing the model to "learn to live with quantization noise" through a Straight-Through Estimator trick. QAT preserves near-FP32 accuracy even at 4-bit, but it demands the very GPU hours quantization was meant to save.
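The Straight-Through Estimator is simpler than its name suggests. The toy below trains a single weight on a made-up regression task (the data, the fixed 0.25 grid, and the learning rate are all assumptions); real QAT operates on full tensors inside a deep learning framework and usually learns or calibrates the scales as well.

```python
def fake_quant(w, scale=0.25, qmax=7):
    """Snap a weight to a signed 4-bit grid; the result is still a float."""
    return max(-qmax, min(qmax, round(w / scale))) * scale

def train_qat(data, steps=200, lr=0.05):
    w = 0.0                                   # latent full-precision weight
    for _ in range(steps):
        for x, y in data:
            w_q = fake_quant(w)               # forward pass sees the quantized weight
            grad_wq = 2 * (w_q * x - y) * x   # gradient of squared error w.r.t. w_q
            # Straight-Through Estimator: rounding has zero gradient almost
            # everywhere, so pretend d(w_q)/d(w) = 1 and update the latent weight.
            w -= lr * grad_wq
    return w, fake_quant(w)

# Hypothetical data generated by y = 0.6 * x; 0.6 is not on the 0.25 grid.
latent_w, deployed_w = train_qat([(1.0, 0.6), (2.0, 1.2)])
print("latent weight  :", round(latent_w, 3))
print("deployed weight:", deployed_w)
```

The latent weight ends up hovering near the boundary between the two grid points closest to 0.6, and the deployed value is one of them. That is "learning to live with quantization noise" in its smallest possible form: the optimizer only ever sees the loss of the rounded model.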

Simultaneously, the edge AI market has exploded. Mobile Neural Processing Units (NPUs) like the Qualcomm Snapdragon 8 Elite Gen 5 (pushing 80-85 TOPS) and AMD's Ryzen AI 400 are moving LLMs directly onto smartphones and laptops. Yet, NPU deployment reveals a harsh truth: memory bandwidth, not raw compute (TOPS), is the actual bottleneck. Because LLM decoding is memory-bound—streaming the entire model’s weights for every generated token—the 50-90 GB/s bandwidth of a mobile device absolutely requires 4-bit quantization to function without melting the chassis.
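A back-of-the-envelope bound makes the bandwidth argument concrete. The sketch assumes every weight is read exactly once per generated token and ignores KV cache traffic, compute time, and caching effects, so the numbers are ceilings, not predictions. The 7B model size and the 70 GB/s midpoint are our own assumptions for illustration.

```python
def max_decode_tokens_per_s(bandwidth_gb_s, num_params, bits_per_weight):
    """Upper bound on decode speed if each token streams all weights once."""
    model_gb = num_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / model_gb

# Hypothetical 7B on-device model at a mobile-class 70 GB/s.
for fmt, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    rate = max_decode_tokens_per_s(70, 7e9, bits)
    print(f"{fmt}: at most {rate:.1f} tokens/s")
```

At 16 bits the ceiling sits at a sluggish few tokens per second; at 4 bits the same silicon can, in principle, keep up with a fast reader. No amount of extra TOPS changes that ratio.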

But the true frontier lies in the 1.58-bit ternary paradigm, spearheaded by Microsoft's BitNet. BitNet restricts weights to {-1, 0, 1}, completely eliminating energy-intensive floating-point matrix multiplications in favor of simple addition and subtraction. At 3B parameters, BitNet b1.58 matches FP16 baselines while running 2.71x faster and using 3.55x less memory. It is a glimpse into a natively multiplication-free future.
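Here is what "multiplication-free" means in practice, as a minimal sketch. The rounding rule follows the absmean scheme described for BitNet b1.58 (divide by the mean absolute weight, round, clip to -1, 0, or 1); the tiny matrix, the function names, and the pure-Python loop are illustrative assumptions, and the real model also quantizes activations and rescales the output.

```python
def ternarize(weights, eps=1e-8):
    """Map a weight matrix to {-1, 0, 1} using its mean absolute value as scale."""
    flat = [abs(w) for row in weights for w in row]
    gamma = sum(flat) / len(flat)
    tern = [[max(-1, min(1, round(w / (gamma + eps)))) for w in row]
            for row in weights]
    return tern, gamma

def ternary_matvec(tern, x):
    """Matrix-vector product that only ever adds, subtracts, or skips."""
    out = []
    for row in tern:
        acc = 0.0
        for t, xi in zip(row, x):
            if t == 1:
                acc += xi
            elif t == -1:
                acc -= xi
            # t == 0: the input is skipped entirely
        out.append(acc)
    return out

# Hypothetical 2x3 weight matrix and input vector.
weights = [[0.9, -0.05, -1.2],
           [0.02, 0.7, -0.6]]
tern, gamma = ternarize(weights)
y = ternary_matvec(tern, [1.0, 2.0, 3.0])
print("ternary weights:", tern)
print("output before rescaling by gamma:", y)
```

The single scale `gamma` can be applied once per output, so the inner loop, where nearly all of the work happens, contains no multiplications at all.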

The Pros: Doing More With Less

The benefits of quantization are mathematically indisputable and commercially vital.

1. Massive Memory Reduction: Moving from FP32 to an INT4 format shrinks a model by 87.5%. A 70B parameter model that needs roughly 280 GB in FP32, enough to fill several 80 GB A100s, drops to about 35 GB of weights and can be squeezed into a single 96GB workstation GPU or a high-end Mac Studio.

2. Speed and Throughput: Because inference is memory-bandwidth bound, reading fewer bytes per token translates directly to higher tokens-per-second generation. Furthermore, integer arithmetic is computationally simpler, allowing modern Tensor Cores to process data at vastly accelerated rates.

3. Energy Efficiency and Cost: Quantization can cut inference energy consumption by up to roughly 80%. In cloud data centers, serving a quantized 8-bit or 4-bit model translates to 30-50% savings on infrastructure bills.

4. Edge Deployment and Privacy: Quantization makes "on-device AI" a reality. Running models locally on smartphones or IoT edge NPUs means zero network latency and absolute privacy. User data never leaves the device, circumventing cloud vulnerabilities and complying with stringent GDPR frameworks.

The Cons: The Price of Compression

Of course, there is no free lunch in computer science. You cannot discard 87.5% of the bits that encode a neural network's weights without inducing some level of brain damage.

1. The Accuracy and Reasoning Cliff: While 8-bit quantization is practically lossless, 4-bit worsens (raises) perplexity by 1-2%, and dropping to 3-bit or 2-bit triggers a catastrophic degradation. In complex logic tasks, math, and multi-step reasoning (as measured by benchmarks like GSM8K), coarsely quantized models suffer severe performance drops and hallucinate wildly. The model might speak fluently, but its underlying logic is shattered.

2. The KV Cache Monster: We celebrate shrinking the model's static weights, but during inference, the Key-Value (KV) cache—the memory required to track conversational context—grows linearly with sequence length. For long-context applications, the KV cache can easily exceed the size of the compressed model weights, negating the benefits of quantization unless the cache itself is aggressively quantized.

3. NPU and Hardware Inefficiencies: Modern NPUs boast high TOPS, but their general-purpose vector units have abysmal memory bandwidth compared to their specialized matrix units. Key operations like Softmax or mixed-precision dequantization often become devastating bottlenecks on edge devices unless engineers resort to complex workarounds like Look-Up Tables (LUTs).

4. Adversarial Vulnerabilities: Quantized models are statistically more fragile. Precision loss makes them highly susceptible to adversarial prompt injections and bit-flip attacks, often exhibiting a 10-20% higher Attack Success Rate (ASR) than their full-precision counterparts.
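The KV cache point in item 2 deserves a number. The standard estimate is two tensors (keys and values) per layer, each holding one vector per KV head per token. The model shape below (80 layers, 8 KV heads, head dimension 128) is a hypothetical 70B-class configuration chosen for illustration, not a measurement of any specific model.

```python
def kv_cache_gb(layers, kv_heads, head_dim, seq_len, bits=16, batch=1):
    """Approximate KV cache size in decimal GB: keys plus values for every layer."""
    elements = 2 * layers * kv_heads * head_dim * seq_len * batch
    return elements * bits / 8 / 1e9

weights_gb = 70e9 * 4 / 8 / 1e9            # 70B parameters at 4 bits: 35 GB
for seq_len in [4_096, 32_768, 131_072]:
    fp16 = kv_cache_gb(80, 8, 128, seq_len, bits=16)
    int4 = kv_cache_gb(80, 8, 128, seq_len, bits=4)
    print(f"{seq_len:>7} tokens: FP16 cache {fp16:5.1f} GB, "
          f"4-bit cache {int4:5.1f} GB, weights {weights_gb:.0f} GB")
```

Under these assumptions a 16-bit cache overtakes the 4-bit weights somewhere past 100,000 tokens of context, and that is for a single sequence. Serve a batch of users at once and the crossover arrives much sooner.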

The Grand Illusion: A Concluding Doubt

This brings us to the irony of our current technological trajectory. We possess the mathematical alchemy to run incredibly capable LLMs on consumer-grade hardware. Research like BitNet demonstrates that 1-bit binary and 1.58-bit ternary models can match FP16 accuracy at scale, abandoning power-hungry matrix multiplication entirely. We know that through intelligent Quantization-Aware Training, we can synthesize highly compressed, highly capable intelligence that doesn't require a dedicated nuclear reactor to operate.

Yet, the vast majority of capital, research, and corporate momentum remains stubbornly fixated on scaling up massive, high-precision clusters.

One must ask, from a purely editorial standpoint: Are the big AI labs neglecting massive investments in native 1-bit architectures and extreme quantization due to the commercial interests of the big chip manufacturers? The current AI boom has created a trillion-dollar market cap for companies selling VRAM and massive GPU accelerators. If the industry were to suddenly pivot to natively quantized, multiplication-free architectures—models so efficient they could run flawlessly on commodity CPUs and basic NPUs—the premium market for $40,000 datacenter GPUs would face an existential threat.

In an ecosystem where the shovel-sellers are the most profitable entities in the gold rush, creating a shovel that costs pennies and lasts forever is bad for business. Perhaps LLM quantization is not just an algorithmic challenge; perhaps it is a commercial threat that the titans of silicon would prefer to keep relegated to the hobbyists and the edge, far away from the lucrative racks of the cloud.

Maybe this sounds a bit extreme, but I remain convinced that the brilliant concept of quantization, and the progress that should follow from it, could be slowed down to protect the business interests of the heavyweights. I hope I'm wrong.
