The Growing Divide Between Optimism and Anxiety in the AI Era

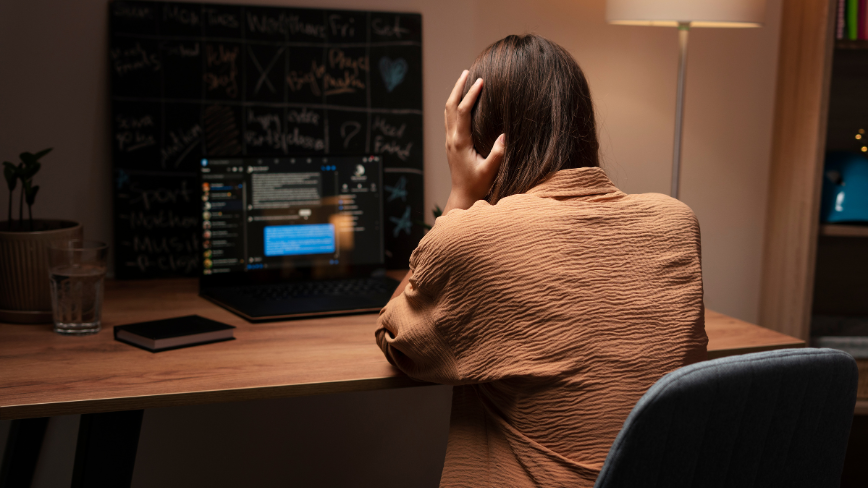

Artificial intelligence continues to evolve rapidly, yet public and expert perceptions of it are increasingly diverging. This is a key finding of the annual AI Index 2026 report, published by the Stanford Institute for Human-Centered AI. The report highlights a profound disconnect between the optimism prevalent among experts and the growing anxiety expressed by the general public.

This polarization is not an isolated phenomenon but an indicator of how rapid technological progress is generating complex social reactions. For companies and technical teams involved in deploying large language models (LLMs) and other AI solutions, understanding this divide is crucial for navigating an environment where acceptance and trust play an increasingly decisive role.

Generational Concerns and Employment Impact

Stanford's report sheds light on several concerning trends, particularly among younger demographics. The analysis indicates a rapid rise in "anger" or frustration towards artificial intelligence among Generation Z. If left unaddressed, this sentiment could have significant repercussions for the adoption and integration of AI technologies into society and the labor market.

The report also notes a decline in employment among younger workers in fields considered "exposed" to AI. This finding raises important questions about labor market dynamics and the need for reskilling and adaptation strategies. For CTOs and DevOps leads, this means not only managing infrastructure and technology frameworks but also considering the social impact and perception of their AI projects, especially those involving automation and process optimization.

Trust in Regulation and Data Sovereignty

Another critical aspect highlighted by the report concerns trust in governments' ability to regulate AI. In the United States specifically, public trust in the government's ability to regulate AI is the lowest among all countries surveyed. This finding is particularly relevant for companies that operate internationally or manage sensitive data.

A lack of trust in governmental regulation can push organizations to seek greater control and autonomy over their AI stacks. This often translates into evaluating self-hosted or on-premise deployment options, where data sovereignty, compliance, and security can be managed directly, even in air-gapped environments. The choice between cloud and bare-metal infrastructure thus becomes a matter not only of total cost of ownership (TCO) or throughput but also of mitigating reputational and regulatory risk.

Future Perspectives for LLM Deployment and AI Ethics

The findings of the AI Index 2026 report underscore the importance of a holistic approach to AI deployment. For technical decision-makers, it is no longer sufficient to focus solely on LLM performance or inference efficiency. Ethical, social, and governance considerations need to be integrated from the earliest stages of the development and release pipeline.

Growing public anxiety and distrust of regulation compel companies to be proactive in demonstrating responsible AI use. This can include transparency about models, robust security and privacy controls, and architectures that give maximum control over data and processes. For those evaluating on-premise deployment, analytical frameworks are available at /llm-onpremise that can help assess the trade-offs between control, cost, and scalability, in a context where trust and compliance are increasingly central.
