Local AI for Bug Hunting: The Linux Kernel Case

Greg Kroah-Hartman, one of the most influential figures in Linux kernel development and often described as its "second-in-command," is experimenting with AI-assisted bug hunting. He is running a local artificial intelligence bot built to identify and report bugs in the vast kernel codebase. The approach reflects growing interest in self-hosted AI for critical tasks where data sovereignty and direct control over infrastructure are paramount.

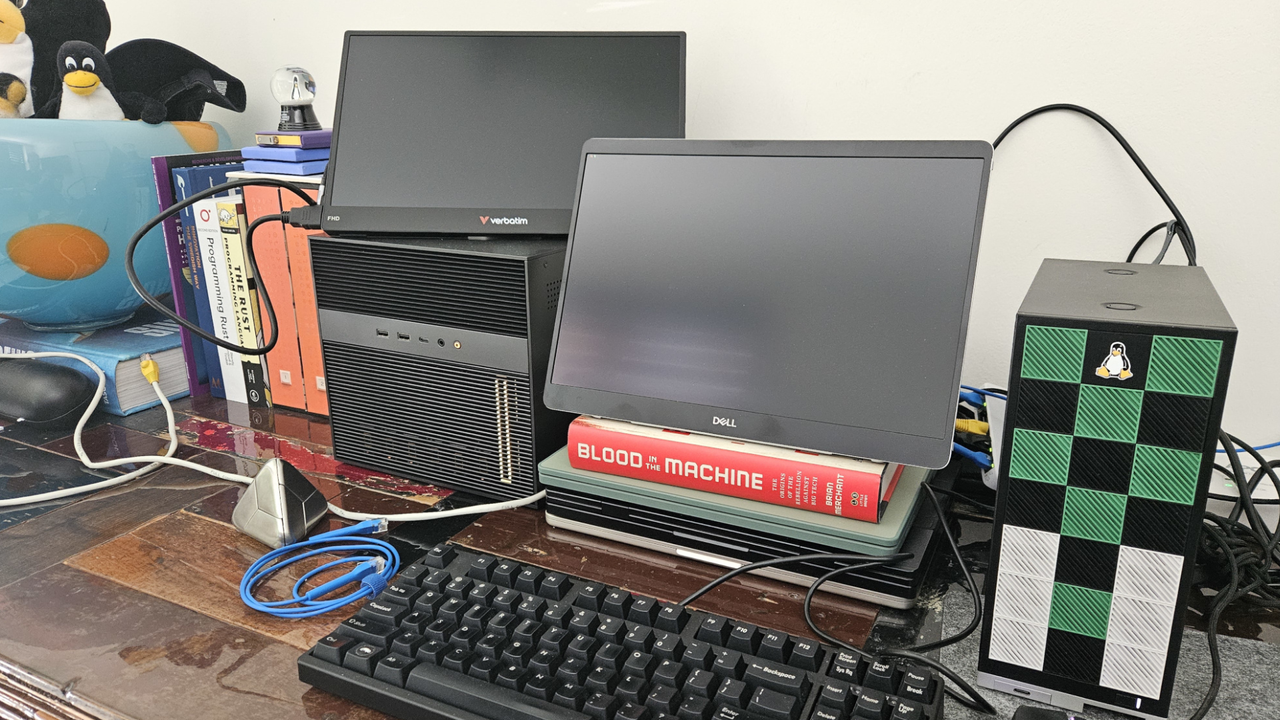

The system, dubbed "Clanker T1000," is not a cloud-based solution but an on-premise implementation running on current consumer hardware. This choice reflects an emerging trend in the tech sector, where local AI inference is becoming increasingly accessible and performant. The objective is clear: to improve the code quality and stability of one of the world's most widely used operating system kernels, using advanced tools in a controlled environment.

The "Clanker T1000": Hardware and Technical Implications

At the heart of the "Clanker T1000" is a Framework Desktop, a platform known for its modularity and ease of upgrade, equipped with an AMD Ryzen AI Max+ processor. This hardware combination is significant. AMD Ryzen AI Max+ processors integrate a dedicated Neural Processing Unit (NPU), designed to accelerate artificial intelligence workloads directly on the device, reducing reliance on external cloud resources.

The adoption of a Framework Desktop, coupled with an AI-capable processor, highlights a deployment strategy that prioritizes energy efficiency and reduced latency for AI inference. For tasks like code analysis, where data privacy and processing speed are crucial, a self-hosted setup offers distinct advantages. It allows sensitive source code to remain within the local environment, avoiding transfers to external cloud services and ensuring complete control over the analysis process.
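As a purely illustrative sketch of the "code stays local" idea, a tool could refuse to send source code to any inference endpoint that is not on the same machine. Nothing here describes how the "Clanker T1000" is actually built: the function name `is_local_endpoint` and the example URLs are hypothetical, and the check uses only the Python standard library.

```python
import ipaddress
from urllib.parse import urlsplit


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL's host is this machine (loopback).

    Hypothetical helper: a guard a self-hosted analysis tool might run
    before sending source code to an inference server.
    """
    host = urlsplit(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        # Accepts IPv4 (127.0.0.0/8) and IPv6 (::1) loopback literals.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname is treated as remote without resolving it.
        return False


# Example URLs are assumptions chosen for illustration only.
print(is_local_endpoint("http://127.0.0.1:8080/v1"))    # local server
print(is_local_endpoint("https://api.example.com/v1"))  # remote service
```

The check is deliberately conservative: it does not resolve DNS names, so a hostname other than `localhost` is rejected even if it happens to point at the local machine.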

Impact and Considerations for On-Premise Deployments

The preliminary results of Kroah-Hartman's initiative are already tangible: bugs found by the AI bot have led to nearly two dozen patches for the Linux kernel. This suggests that AI can support complex software engineering tasks effectively, even with local resources. For CTOs, DevOps leads, and infrastructure architects, the case offers useful insight into the feasibility and benefits of deploying LLMs and AI in on-premise environments.

The choice of a local system for bug hunting in the Linux kernel highlights the trade-offs between cloud and self-hosted solutions. While the cloud offers immediate scalability and flexible operational costs, on-premise implementations offer greater data control, a more predictable long-term total cost of ownership (TCO), and the ability to operate in air-gapped environments or those with stringent compliance requirements. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess these trade-offs, considering factors such as data sovereignty, hardware specifications, and throughput needs.
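The cost side of this trade-off can be sketched as a simple break-even calculation: an upfront hardware purchase is recovered only if running it locally is cheaper per month than the equivalent cloud usage. The function below is a minimal sketch, and every figure in the usage example is a made-up placeholder, not a price taken from this case.

```python
from typing import Optional


def break_even_months(
    hardware_cost: float,
    onprem_monthly: float,
    cloud_monthly: float,
) -> Optional[float]:
    """Months until cumulative on-premise cost equals cumulative cloud cost.

    Returns None when on-premise running costs are not lower than cloud
    costs, because the upfront hardware spend is then never recovered.
    """
    monthly_saving = cloud_monthly - onprem_monthly
    if monthly_saving <= 0:
        return None
    return hardware_cost / monthly_saving


# Hypothetical figures for illustration only.
months = break_even_months(
    hardware_cost=2400.0,   # one-time purchase of a local machine
    onprem_monthly=40.0,    # power and upkeep
    cloud_monthly=240.0,    # comparable hosted inference spend
)
print(months)  # 12.0
```

A real evaluation would also account for factors this sketch ignores, such as hardware depreciation, staff time, and how heavily the machine is actually used.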

Future Prospects of AI in the Development Cycle

Greg Kroah-Hartman's experiment is more than a technological anecdote: it indicates the direction artificial intelligence is taking in the software development lifecycle. The integration of AI capabilities directly into client and server hardware, as seen in the AMD Ryzen AI Max+, is widening access to powerful analysis and automation tools. This paves the way for scenarios where developers can use AI to improve code quality, accelerate testing, and strengthen security, all while keeping sensitive data within their own infrastructure.

This "AI-first" and "on-premise-first" approach to Linux kernel maintenance could serve as a model for other organizations looking to implement AI solutions for code management, documentation, or internal knowledge bases. The ability to perform inference locally, on dedicated hardware, offers a way to balance innovation, security, and cost control, all of which are fundamental to strategic IT decisions.
