AI Compute: From Cost Center to Profit Center

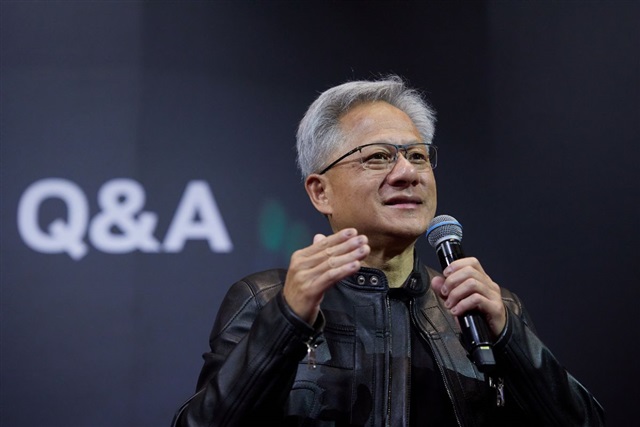

Jensen Huang, CEO of Nvidia, recently offered a new perspective on the future of AI compute, recasting computers as true "factories" directly linked to enterprise revenue generation. The statement, reported by DIGITIMES, marks a significant shift in how the industry perceives and values computational infrastructure dedicated to artificial intelligence: these systems are no longer mere support tools, but strategic assets capable of producing tangible value.

The "factory" metaphor suggests a well-defined production process in which the inputs are data and models and the outputs are insights, automated decisions, or, in the context of Large Language Models (LLMs), generated "tokens" that translate into value-added services and applications. This vision prompts organizations to treat investment in AI compute capacity not as an operational expense, but as productive capital with a measurable return on investment.

The "Token Factory" and Inference

The concept of a "token factory" is particularly relevant in the LLM landscape. Every interaction with a generative model, whether for content creation, customer support, or data analysis, involves the generation of tokens. An enterprise's ability to produce these tokens efficiently and at scale thus becomes a critical factor for its competitiveness and for monetizing its AI-based services.

To build and manage these "factories," companies must address complex hardware and software challenges. LLM inference requires GPUs with ample VRAM and high throughput, capable of processing large volumes of requests with low latency. The choice between different silicon architectures, the management of model quantization, and the optimization of deployment pipelines all directly influence the efficiency and total cost of ownership (TCO) of this "token production."
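To make the link between quantization and VRAM concrete, here is a minimal back-of-the-envelope sketch. It is not taken from Huang's remarks; the function name `weight_memory_gb` and the 7-billion-parameter model size are assumptions chosen for illustration. It estimates only the memory occupied by the weights themselves and ignores the KV cache, activations, and runtime overhead, all of which add to the real footprint.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    Illustrative helper (hypothetical name). Excludes KV cache,
    activations, and framework overhead.
    """
    bytes_total = num_params * bits_per_weight / 8  # 8 bits per byte
    return bytes_total / 1e9


# Hypothetical 7B-parameter model at three common weight precisions.
params = 7_000_000_000
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_memory_gb(params, bits):.1f} GB")
# prints 14.0 GB, 7.0 GB and 3.5 GB respectively
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the weight footprint by a factor of four, which is why quantization choices feed directly into how many GPUs a given "factory" needs.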

Implications for On-Premise Deployment

Huang's vision strengthens the argument for self-hosted and on-premise deployments for AI workloads. If AI compute is a "factory" that generates revenue, then direct control over this factory becomes a strategic advantage. Companies can optimize hardware for their specific needs while ensuring data sovereignty and regulatory compliance, both of which are crucial in sectors such as finance or healthcare.

An on-premise deployment also allows for more granular control over TCO, balancing initial CapEx against long-term operational costs, including energy. The ability to operate in air-gapped environments offers a level of security and isolation that cloud solutions can hardly match for certain critical applications. For those evaluating on-premise deployments, the analytical frameworks available on /llm-onpremise can help assess the trade-offs between control, cost, and scalability.
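The CapEx-versus-energy balance described above can be sketched as a simple cost-per-token estimate. This is a rough illustration rather than an established TCO model: the function name, its parameters, and every figure in the usage example are hypothetical. It assumes straight-line amortization of hardware, constant power draw, and no staffing, cooling, or facility costs.

```python
HOURS_PER_YEAR = 8760


def cost_per_million_tokens(
    capex: float,                # upfront hardware cost (hypothetical)
    lifetime_years: float,       # amortization period
    power_kw: float,             # assumed constant power draw
    energy_price_per_kwh: float,
    tokens_per_second: float,    # peak sustained throughput
    utilization: float,          # fraction of time spent serving, 0..1
) -> float:
    """Amortized hardware plus energy cost per one million generated tokens."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    hourly_capex = capex / (lifetime_years * HOURS_PER_YEAR)
    hourly_energy = power_kw * energy_price_per_kwh
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return (hourly_capex + hourly_energy) / tokens_per_hour * 1_000_000


# Purely illustrative numbers, not vendor or market figures.
cost = cost_per_million_tokens(
    capex=87_600, lifetime_years=5, power_kw=1.0,
    energy_price_per_kwh=0.20, tokens_per_second=1000, utilization=0.5,
)
print(f"~{cost:.2f} per million tokens")
```

Even in this simplified form, the calculation shows why utilization matters as much as hardware price: halving utilization doubles the cost per token, since the amortized hardware cost accrues whether or not the "factory" is producing.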

Future Prospects and Strategic Decisions

The redefinition of AI compute as a "revenue-generating factory" shifts the focus from mere technological adoption to strategic integration into the business model. Decisions regarding AI infrastructure, whether investing in new GPUs, optimizing existing frameworks, or choosing between bare metal and containerized solutions, become investment decisions with a direct impact on profits.

This scenario compels CTOs, DevOps leads, and infrastructure architects to carefully evaluate not only the technical capabilities but also the economic and strategic implications of each choice. The ability to build and run one's own "token factory" efficiently will be a distinguishing factor for companies aiming to fully capitalize on the transformative potential of artificial intelligence.
