Reverse Engineering Meets Hardware History

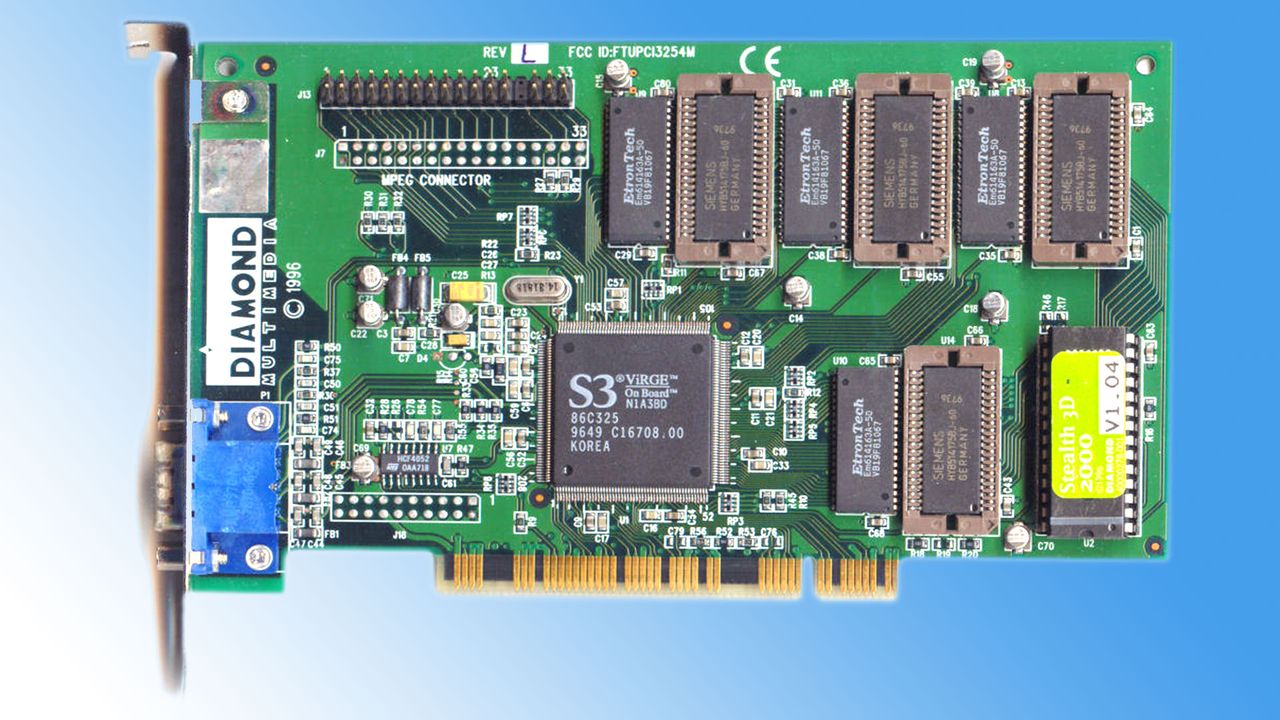

In a technology landscape dominated by complex architectures and layers of software abstraction, the news that an enthusiast has resolved a 30-year-old hardware issue offers a fascinating perspective. The fix concerns the S3 Virge, one of the first consumer 3D graphics cards, which suffered from a black level defect that compromised its visual quality. The solution did not come from a software update or a driver, but from reverse engineering the card's VBIOS (Video Basic Input/Output System) itself.

This kind of fix, made by modifying the firmware directly, is a powerful reminder of how much control can be exercised over hardware. For those operating critical infrastructure, especially in the field of artificial intelligence, the ability to intervene at such a low level can be a significant strategic advantage, supporting not only optimal performance but also greater resilience and security.

The Technical Detail of the 'Pedestal Bit'

The core of the solution lies in modifying what has been termed a 'pedestal bit' within the VBIOS of the S3 Virge DX. The VBIOS is the firmware that initializes and controls the graphics card upon system startup, managing fundamental parameters such as video modes, frequencies, and, in this case, the calibration of color and brightness levels. The 'pedestal bit' in question was responsible for an incorrect setting of black levels, which were too high, compromising the contrast and color fidelity of the image.

The intervention required not only a deep understanding of the S3 Virge's hardware architecture but also the ability to disassemble and modify the VBIOS binary code. This approach, which could be described as 'surgical,' allowed the root cause of the problem to be eliminated, restoring correct black levels. It is a clear example of how a detailed understanding of hardware's internal workings can unlock potential and solve problems that would otherwise remain unresolved at the driver or operating system level.
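To make the idea of a 'surgical' firmware patch concrete, here is a minimal sketch in Python. It is not the enthusiast's actual procedure: the article does not give the location of the pedestal bit, so `PEDESTAL_OFFSET` and `PEDESTAL_MASK` are hypothetical placeholders, and the sketch assumes the fix is to clear the bit. What it does rely on is the standard PC option ROM layout: a `0x55 0xAA` signature, a length byte counted in 512-byte blocks, and a checksum rule that all bytes of the image sum to zero modulo 256. It also assumes the last byte of the image is free to be used as the checksum balance byte, which may not hold for every VBIOS.

```python
# Hypothetical placeholders: the real offset and bit are not given in the article.
PEDESTAL_OFFSET = 0x40
PEDESTAL_MASK = 0x20


def declared_size(rom: bytes) -> int:
    """Return the image length in bytes; byte 2 counts 512-byte blocks."""
    if rom[:2] != b"\x55\xaa":
        raise ValueError("missing 0x55 0xAA option ROM signature")
    size = rom[2] * 512
    if size == 0 or size > len(rom):
        raise ValueError("declared ROM size does not fit the file")
    return size


def rom_checksum_ok(rom: bytes) -> bool:
    """Option ROM rule: every byte of the declared image sums to 0 mod 256."""
    return sum(rom[: declared_size(rom)]) % 256 == 0


def clear_bit_and_fix_checksum(rom: bytes, offset: int, mask: int) -> bytes:
    """Clear the `mask` bits at `offset`, then rebalance the checksum.

    Assumption: the final byte of the image can serve as the balance byte.
    """
    size = declared_size(rom)
    if not 3 <= offset < size - 1:
        raise ValueError("offset must be inside the image, before the last byte")
    patched = bytearray(rom)
    patched[offset] &= ~mask & 0xFF
    # Pick the last byte so the whole declared image sums to 0 mod 256 again.
    patched[size - 1] = (-sum(patched[: size - 1])) % 256
    return bytes(patched)
```

On a real card one would first dump the ROM to a file, apply a function like this with the true offset and mask, confirm `rom_checksum_ok` on the result, and keep the original dump as a backup before flashing anything. The checksum step matters because a system BIOS may refuse to run an option ROM whose bytes no longer sum to zero.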

Implications for On-Premise LLM Deployments

Although the S3 Virge case relates to historical hardware, the principle of granular control it embodies is highly relevant for modern infrastructures dedicated to Large Language Models (LLMs), especially in on-premise or self-hosted deployment contexts. In these environments, CTOs, DevOps leads, and infrastructure architects seek maximum control over every component of the stack, from silicon to application software.

The ability to intervene at the firmware level, as in the VBIOS case, or to optimize drivers and hardware configurations, is crucial for maximizing throughput, minimizing latency, and efficiently managing GPU VRAM. This deep control is fundamental for optimizing Total Cost of Ownership (TCO), ensuring data sovereignty in air-gapped environments or those with stringent compliance requirements, and customizing infrastructure for specific AI workloads. Unlike cloud environments, where hardware control is often limited, on-premise deployments offer the flexibility needed for such optimizations. For those evaluating the trade-offs between cloud and on-premise, AI-RADAR offers analytical frameworks on /llm-onpremise to support informed decisions.

Beyond Nostalgia: The Lesson for AI

The story of the S3 Virge fix transcends mere nostalgia for vintage hardware. It offers a valuable lesson on the importance of control and a deep understanding of the underlying infrastructure. For AI workloads, where performance is measured in tokens per second and GPU memory is a precious resource, every optimization at the hardware, firmware, or driver level can translate into significant advantages in terms of efficiency and cost.

In an era where the complexity of AI systems continues to grow, the ability to diagnose and solve low-level problems, or to optimize performance through targeted modifications, remains an invaluable skill. This 'bare metal' approach to infrastructure management is a cornerstone for organizations aiming to build robust, secure, and high-performing AI platforms, while maintaining full control over their data and operations.
