The AI Explosion and the Heat Challenge

The massive adoption of Large Language Models (LLMs) and other artificial intelligence applications has generated unprecedented demand for computational power. This demand translates into enormous heat generation within data centers, making cooling a critical factor for infrastructure stability and efficiency.

In this scenario, the source highlights a veritable "patent race" in the server cooling sector. Taiwanese companies, in particular, are positioning themselves among the global leaders in this competition, a sign of their centrality in the technological supply chain and the growing strategic importance of these solutions.

The Critical Role of Cooling in the AI Era

Modern GPUs, such as the NVIDIA H100 and A100, combine extremely high transistor density with high power consumption, and nearly all of the power they draw is dissipated as heat. Excessive heat not only reduces the overall energy efficiency of the data center but can also compromise hardware stability and longevity, directly affecting the throughput and latency of AI workloads.

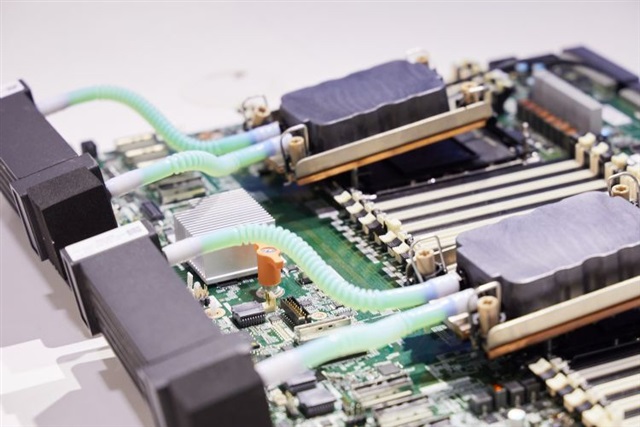

Traditional air cooling solutions are increasingly struggling to manage these power densities, pushing operators towards more advanced systems. Among these, direct-to-chip liquid cooling and full immersion of servers in dielectric fluids are gaining ground. These technologies are fundamental for maintaining optimal performance of AI infrastructure and ensuring operational continuity in increasingly demanding environments.
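To make the notion of power density concrete, the following is a rough back-of-the-envelope sketch in Python. Every number in it is an illustrative assumption rather than a figure from the source: the per-GPU power draw, the server and rack layout, the overhead factor for CPUs, memory, and networking, and the air-cooling threshold are all hypothetical placeholders that vary widely between facilities.

```python
# Rough estimate of the heat a GPU rack must shed.
# All values below are illustrative assumptions, not measured data.

def rack_heat_load_kw(gpus_per_server, servers_per_rack, gpu_power_w,
                      overhead_factor=1.3):
    """Approximate rack heat load in kW.

    overhead_factor is a hypothetical multiplier covering CPUs, memory,
    networking, and power-conversion losses on top of the GPUs themselves.
    Essentially all electrical power drawn ends up as heat.
    """
    total_w = gpus_per_server * servers_per_rack * gpu_power_w * overhead_factor
    return total_w / 1000.0


# Hypothetical layout: 4 servers per rack, 8 GPUs each, 700 W per GPU.
load_kw = rack_heat_load_kw(gpus_per_server=8, servers_per_rack=4,
                            gpu_power_w=700.0)

# Assumed practical ceiling for an air-cooled rack; real limits depend
# on the facility's airflow design.
ASSUMED_AIR_LIMIT_KW = 20.0

needs_liquid = load_kw > ASSUMED_AIR_LIMIT_KW
print(f"Estimated rack heat load: {load_kw:.1f} kW")
print(f"Exceeds assumed air-cooling limit: {needs_liquid}")
```

Under these assumptions, a single rack already exceeds what the sketch treats as the air-cooling ceiling, which is the kind of gap that motivates liquid and immersion approaches.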

Implications for On-Premise Deployments

For organizations choosing self-hosted or air-gapped deployments for their LLMs, thermal management becomes a central element of the Total Cost of Ownership (TCO). Energy costs associated with cooling can represent a significant portion of operational expenses, directly influencing the economic sustainability of the infrastructure.
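As a minimal sketch of how cooling feeds into operating costs, the example below uses Power Usage Effectiveness (PUE), the ratio of total facility energy to IT equipment energy. PUE is not mentioned in the source and is introduced here as an assumption. The non-IT overhead it captures includes cooling along with other facility loads such as power distribution losses, so the result is an upper bound on cooling cost. The IT load, PUE values, and electricity price are hypothetical.

```python
# Estimate the yearly cost of non-IT energy overhead (mostly cooling)
# from PUE. All inputs are illustrative assumptions.

HOURS_PER_YEAR = 24 * 365


def annual_overhead_cost(it_load_kw, pue, price_per_kwh):
    """Yearly cost of the energy consumed beyond the IT load itself.

    PUE = total facility energy / IT energy, so the overhead power
    is it_load_kw * (pue - 1).
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    overhead_kw = it_load_kw * (pue - 1.0)
    return overhead_kw * HOURS_PER_YEAR * price_per_kwh


# Hypothetical 100 kW cluster at 0.15 per kWh (currency unspecified).
baseline = annual_overhead_cost(it_load_kw=100.0, pue=1.5, price_per_kwh=0.15)
improved = annual_overhead_cost(it_load_kw=100.0, pue=1.2, price_per_kwh=0.15)

print(f"Overhead cost at PUE 1.5: {baseline:,.0f} per year")
print(f"Overhead cost at PUE 1.2: {improved:,.0f} per year")
print(f"Yearly saving: {baseline - improved:,.0f}")
```

The comparison shows why a more efficient cooling design, which lowers PUE, can shift the TCO calculation for a self-hosted deployment even when the IT hardware stays the same.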

Efficient cooling infrastructure is crucial for maximizing rack density and optimizing data center space, both vital for organizations seeking data sovereignty and complete control over their hardware. While cloud providers can absorb and distribute these costs at scale, on-premise operators must address these infrastructure challenges directly. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between initial costs, operating costs, and cooling requirements.

Future Prospects and Strategic Innovation

The leadership of Taiwanese companies in this patent race is not surprising, given their central position in the global hardware supply chain and their expertise in producing high-performance components. Innovation in cooling is not just a matter of efficiency but an enabling factor for the next generation of AI hardware. Without adequate cooling solutions, advances in computing power would be capped by how much heat can physically be removed from the chips.

This often-underestimated sector is, in fact, a strategic pillar for the evolution of artificial intelligence. It ensures that infrastructure can keep pace with the demands of increasingly complex models, guaranteeing that the promises of AI can be fulfilled even at the physical deployment level.
