TSMC's Role in the AI Ecosystem

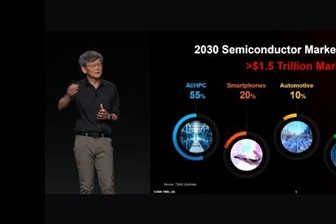

TSMC, the Taiwanese contract chipmaker, holds a central position in today's technology landscape and underpins innovation in key sectors such as artificial intelligence. Its leadership in advanced chip fabrication is fundamental to producing the processors that power Large Language Models (LLMs) and other AI applications, whether they run in the cloud or in self-hosted environments.

A recent address by a TSMC Senior Vice President struck an optimistic note on the future of AI, anticipating "better days ahead" for the industry. This view comes from the company that manufactures the silicon behind the most powerful GPUs and AI accelerators, so it offers useful insight into where hardware innovation and computational capability are heading.

"COUPE": An Architecture for the Future of AI?

In his speech, the TSMC executive urged attendees to remember the keyword "COUPE." The speech did not spell the term out. However, COUPE is the name of TSMC's Compact Universal Photonic Engine, a silicon photonics technology that stacks an electronic die on top of a photonic die. This lets data leave the package as light rather than travel over copper. Optical interconnects of this kind target the bandwidth and power limits of chip-to-chip links, and those limits become a bottleneck as LLMs are spread across ever larger clusters of accelerators.

Advances in packaging, interconnect, and memory are vital for overcoming current challenges. These include the need for ever more VRAM for complex models, higher inference throughput, and lower latency. Progress in these areas is essential to make AI deployments more accessible and efficient, both in large cloud data centers and in on-premise infrastructures that require granular control over resources and data.
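A back-of-the-envelope estimate shows why memory capacity dominates the on-premise conversation. The memory needed just to hold a model's weights is roughly the parameter count multiplied by the bytes stored per parameter. The sketch below is illustrative only. It assumes a hypothetical 70-billion-parameter model and ignores the KV cache, activations, and runtime overhead, so real requirements are higher.

```python
# Rough lower bound on accelerator memory needed just to store model weights.
# Assumption: weights only; KV cache, activations and framework overhead
# are ignored, so actual VRAM requirements will be higher.
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return the approximate weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    # Hypothetical 70B-parameter model at common numeric precisions.
    for label, bytes_per_param in [("FP16", 2), ("INT8", 1), ("INT4", 0.5)]:
        gb = weight_memory_gb(70e9, bytes_per_param)
        print(f"70B params @ {label}: {gb:.0f} GB")
```

Halving the bytes per parameter halves the footprint. This is why quantization and larger on-package memory both matter when deciding whether a model fits on locally owned hardware.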

Implications for On-Premise Deployments and Data Sovereignty

TSMC's optimism and the potential direction indicated by "COUPE" have significant implications for companies evaluating or managing on-premise LLM deployments. The availability of more performant and efficient silicon can reduce the Total Cost of Ownership (TCO) of AI infrastructures, making it more feasible to manage intensive workloads internally. This is particularly relevant for sectors with stringent data sovereignty and compliance requirements, where air-gapped or self-hosted solutions are often preferred.

For CTOs, DevOps leads, and infrastructure architects, understanding the roadmap of chip manufacturers is crucial. Future generations of GPUs and accelerators, with improvements in memory, bandwidth, and computing power, will directly influence the choice between cloud and on-premise solutions. The ability to run increasingly large and complex LLMs locally, while keeping operational costs under control, is a decisive factor for many organizations.

Towards More Powerful and Efficient AI

TSMC's vision suggests a future in which AI capabilities keep expanding, driven by continuous innovation at the silicon level. This progress should enable more sophisticated models and applications. It could also make the technology more accessible and sustainable, particularly if gains in performance per watt translate into lower overall energy consumption.

As AI adoption broadens, the ability to run these workloads flexibly, securely, and economically will remain a priority. Innovations from companies like TSMC are the foundation on which future solutions will be built. They let operators choose the deployment environment that best suits their needs, from bare-metal data centers to hybrid configurations.
