The race for HBM: a critical factor for AI

In the fast-moving landscape of artificial intelligence, High Bandwidth Memory (HBM) has become a strategic component that is hard to replace. Its architecture stacks multiple memory dies and connects them through a very wide interface, delivering significantly higher bandwidth than traditional GDDR memory. That bandwidth is essential for feeding the intensive workloads of Large Language Models (LLMs) and other advanced AI applications. Without an adequate supply of HBM, production of high-performance AI accelerators such as Nvidia's GPUs slows down, creating a critical bottleneck for the entire industry.
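The bandwidth gap can be illustrated with simple arithmetic: peak theoretical bandwidth is the interface width multiplied by the per-pin data rate. The sketch below is a minimal illustration, not a vendor specification. The function name `peak_bandwidth_gbs` is hypothetical, and the figures (a 1024-bit stack at 6.4 Gb/s per pin, a 32-bit chip at 16 Gb/s per pin) are assumed, HBM3-class and GDDR6-class values that do not come from the article.

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak theoretical bandwidth in GB/s.

    bus_width_bits: number of data pins on the interface
    pin_rate_gbps:  data rate per pin, in gigabits per second
    """
    # Total bits per second across all pins, converted from bits to bytes.
    return bus_width_bits * pin_rate_gbps / 8


# Assumed, illustrative figures (not taken from the article).
hbm_stack = peak_bandwidth_gbs(bus_width_bits=1024, pin_rate_gbps=6.4)
gddr_chip = peak_bandwidth_gbs(bus_width_bits=32, pin_rate_gbps=16.0)

print(f"One HBM stack:  {hbm_stack:.1f} GB/s")
print(f"One GDDR chip:  {gddr_chip:.1f} GB/s")
print(f"Ratio per device: {hbm_stack / gddr_chip:.1f}x")
```

The point of the comparison is that the stacked design wins through interface width rather than per-pin speed: in this example the GDDR pin is faster, yet the HBM stack moves far more data per second.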

This dependence has set off a race among the main players in the sector. Samsung, one of the largest HBM producers, is trying to capitalize on its position to consolidate its influence on the market. The goal is clear: to secure a dominant share of the memory orders destined for Nvidia's AI accelerators, which account for a substantial part of global demand. Samsung's ability to meet that demand is seen as a key factor in its future positioning in the AI sector.

Market dynamics: Samsung, Nvidia and TSMC

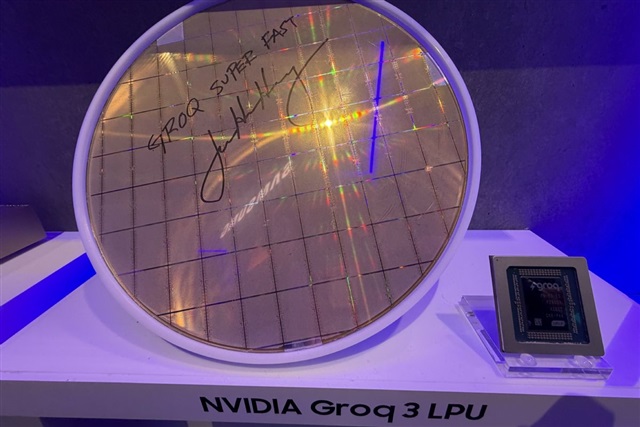

The source describes a complex interplay among the technology giants. Samsung is betting on its position in HBM production to strengthen its role as a primary supplier to Nvidia. Nvidia's AI accelerators, its data center GPUs, sit at the center of AI infrastructure worldwide, and their performance is closely tied to the availability and specifications of the HBM integrated with them. The higher bandwidth and larger memory capacity that HBM makes possible are essential for handling ever larger and more complex models, reducing latency and increasing throughput.
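Why bandwidth and capacity both matter can be shown with a rough, back-of-the-envelope model. The sketch below assumes that generating one token at batch size 1 requires reading every model weight from memory once, so memory bandwidth caps the token rate; it ignores the KV cache, activations, compute limits and multi-GPU effects. All function names and figures here are hypothetical and chosen only for illustration.

```python
def weight_bytes(n_params: float, bytes_per_param: float) -> float:
    """Memory footprint of the model weights, in bytes."""
    return n_params * bytes_per_param


def fits_in_memory(n_params: float, bytes_per_param: float,
                   capacity_gb: float) -> bool:
    """True if the weights alone fit in the given memory capacity (GB)."""
    return weight_bytes(n_params, bytes_per_param) <= capacity_gb * 1e9


def decode_tokens_per_s_upper_bound(n_params: float, bytes_per_param: float,
                                    bandwidth_gbs: float) -> float:
    """Upper bound on single-sequence decode speed.

    Assumes each generated token reads all weights once, so the rate is
    limited to (bytes per second available) / (bytes read per token).
    """
    return bandwidth_gbs * 1e9 / weight_bytes(n_params, bytes_per_param)


# Hypothetical example: a 70-billion-parameter model stored in 16-bit weights.
N_PARAMS = 70e9
BYTES_PER_PARAM = 2

for capacity_gb, bandwidth_gbs in [(80, 2000), (192, 5000)]:
    fits = fits_in_memory(N_PARAMS, BYTES_PER_PARAM, capacity_gb)
    rate = decode_tokens_per_s_upper_bound(N_PARAMS, BYTES_PER_PARAM, bandwidth_gbs)
    print(f"{capacity_gb} GB @ {bandwidth_gbs} GB/s: "
          f"fits={fits}, at most {rate:.1f} tokens/s per sequence")
```

Under these assumptions, capacity decides whether the model fits on one accelerator at all, while bandwidth sets the ceiling on how fast it can respond once it does.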

In this scenario TSMC, the world's largest contract chipmaker, is not standing by. Although TSMC is primarily a foundry that manufactures Nvidia's chips, its influence also extends to advanced packaging, which includes integrating HBM with the logic dies. Its ability to offer cutting-edge packaging and to manage the production pipeline from silicon fabrication to final assembly lets it exert significant pressure. The competition is therefore not only about memory supply but also about control over the entire value chain of AI accelerators, where efficiency and innovation in packaging are as crucial as the production of the individual components.

Implications for on-premise LLM deployment

For companies evaluating on-premise LLM deployment, the dynamics of the HBM market have direct and significant implications. The availability of high-performance AI accelerators determines whether these infrastructures are feasible and how far they can scale. Fierce competition for HBM can lead to price fluctuations and longer GPU delivery times, directly affecting the Total Cost of Ownership (TCO) and capital expenditure (CapEx) planning for self-hosted data centers. CTOs and infrastructure architects need to monitor these trends closely to anticipate potential supply chain bottlenecks.
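How a swing in GPU pricing feeds into TCO can be sketched with a simple model. The example below is a minimal illustration under stated assumptions: TCO is taken as hardware CapEx plus a flat annual operating cost, with no discounting, depreciation or resale value. The `ClusterPlan` type, the `tco` function and every figure are hypothetical and not drawn from the article.

```python
from dataclasses import dataclass


@dataclass
class ClusterPlan:
    n_gpus: int            # number of accelerators to buy
    gpu_unit_price: float  # assumed price per accelerator
    other_capex: float     # servers, networking, racks, installation
    annual_opex: float     # power, cooling, staff, maintenance per year
    years: int             # planning horizon


def tco(plan: ClusterPlan, gpu_price_change: float = 0.0) -> float:
    """Total cost over the horizon for a given relative GPU price change.

    gpu_price_change is a fraction: 0.2 means GPUs cost 20% more than planned.
    """
    gpu_capex = plan.n_gpus * plan.gpu_unit_price * (1 + gpu_price_change)
    return gpu_capex + plan.other_capex + plan.annual_opex * plan.years


# Hypothetical plan, for illustration only.
plan = ClusterPlan(n_gpus=8, gpu_unit_price=30_000,
                   other_capex=100_000, annual_opex=50_000, years=3)

baseline = tco(plan)
for change in (-0.10, 0.0, 0.20):
    total = tco(plan, change)
    print(f"GPU price {change:+.0%}: TCO {total:,.0f} "
          f"({(total - baseline) / baseline:+.1%} vs. plan)")
```

Running a few price scenarios like this before committing to a purchase shows how much of the budget is exposed to memory-driven GPU price movements. Delivery delays could be added in the same spirit, for example as months of cloud rental needed while waiting for hardware.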

The choice of an on-premise deployment is often driven by data sovereignty requirements, regulatory compliance, or the need for air-gapped environments. These decisions, however, come with the responsibility of managing hardware procurement in a volatile market. Dependence on a few key suppliers for critical components such as HBM introduces a risk that must be mitigated through robust strategic planning and, where possible, by diversifying suppliers or evaluating alternative hardware architectures. For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks at /llm-onpremise for assessing trade-offs and procurement strategies.

Future outlook and strategic trade-offs

The battle for HBM is set to intensify, reflecting the growing demand for AI compute. This competition will probably drive innovation in HBM technology and packaging techniques, but it could also keep pressure high on prices and availability. For companies investing in AI infrastructure, understanding these dynamics is essential to making informed decisions, as the trade-offs between cost, performance and delivery times grow more complex.

A company's ability to secure a stable supply of latest-generation HBM can make the difference in how well it can innovate and scale its AI solutions. This scenario underlines the importance of an agile procurement strategy and a deep understanding of the global semiconductor supply chain. Vendor neutrality and an objective assessment of the constraints and opportunities offered by the different market players will be essential to navigating this competitive environment successfully.
