The Supply Chain at the Core of the Trillion-Dollar AI Wave

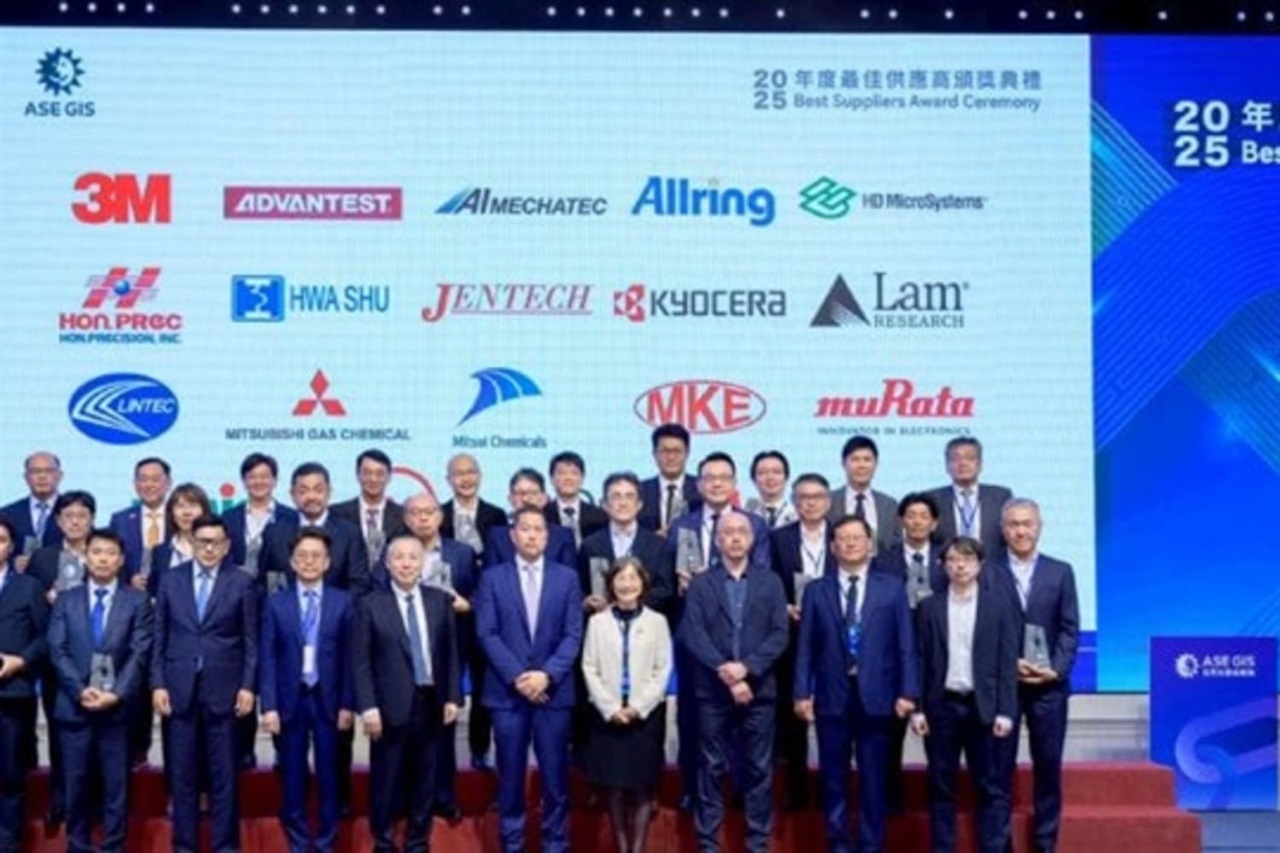

The artificial intelligence sector is growing rapidly, with projections indicating it will reach a multi-trillion-dollar valuation. In this context, the robustness and capacity for innovation of the technology supply chain become critical to sustaining the development and deployment of advanced AI solutions. ASE Technology, a major provider of semiconductor packaging and test services, recently highlighted eighteen of its suppliers at the forefront of this transformation.

This initiative underscores the crucial role these partners play in the AI value chain and highlights how complex and interdependent the ecosystem is. From silicon production to high-bandwidth memory (HBM) modules, advanced cooling systems, and interconnect components, every link in the supply chain helps define the capabilities and limits of the AI infrastructure that companies can deploy.

The Role of Suppliers for On-Premise AI

For organizations evaluating the deployment of Large Language Models (LLMs) and other AI workloads in self-hosted or air-gapped environments, the availability and reliability of hardware components are paramount. The eighteen suppliers highlighted by ASE Technology are an essential part of this infrastructure: they produce the building blocks from which companies assemble their local stacks, which in turn let them preserve data sovereignty and retain full control over the processing environment.

An on-premise deployment means the organization must itself procure GPUs with sufficient VRAM, high-speed storage, and low-latency networking. The quality and innovation of packaging and test suppliers, such as those in ASE's network, directly influence the performance, energy efficiency, and lifespan of these components. A disruption or bottleneck in the supply chain can significantly limit a company's ability to scale its AI operations or meet its Total Cost of Ownership (TCO) targets.
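What "sufficient VRAM" means can be estimated, to a first approximation, from the model size: the weights alone need roughly the number of parameters multiplied by the bytes used per parameter. The sketch below is a minimal illustration under stated assumptions: decimal gigabytes, a hypothetical 70-billion-parameter model, a hypothetical 80 GB GPU, and an arbitrary 20% headroom reserved for the KV cache and runtime overhead. Real requirements also depend on context length, batch size, and the serving framework.

```python
# Rough VRAM needed just to hold an LLM's weights: parameters x bytes per parameter.
# Assumption: ignores KV cache, activations, and framework overhead, which add more on top.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit quantized weights
    "int4": 0.5,  # 4-bit quantized weights
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the memory (in GB, 1 GB = 1e9 bytes) needed to store the weights."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_in_vram(num_params: float, precision: str, vram_gb: float, headroom: float = 0.2) -> bool:
    """Check whether the weights fit, reserving a fraction of VRAM as headroom.

    headroom is a hypothetical safety margin for the KV cache and runtime overhead.
    """
    return weight_memory_gb(num_params, precision) <= vram_gb * (1 - headroom)


if __name__ == "__main__":
    params = 70e9  # hypothetical 70-billion-parameter model
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(params, precision)
        fits = fits_in_vram(params, precision, 80)  # hypothetical 80 GB GPU
        print(f"{precision}: {gb:.0f} GB of weights, fits on one 80 GB GPU: {fits}")
```

Under these assumptions the script reports 140 GB of weights at FP16, 70 GB at INT8, and 35 GB at INT4; only the INT4 variant fits on a single 80 GB card once the headroom is reserved.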

Implications for CTOs and Infrastructure Architects

For CTOs, DevOps leads, and infrastructure architects, understanding the robustness of the AI supply chain is more strategic than ever. Reliance on a limited number of suppliers for critical components can introduce risks, while a diversified and innovative supply chain can offer greater resilience and options. Hardware decisions, such as choosing between different generations of GPUs or adopting advanced cooling solutions, are directly influenced by suppliers' ability to deliver cutting-edge products.

Evaluating the trade-offs between CapEx and OpEx, managing compliance requirements, and meeting the need for secure, air-gapped environments all make the selection of technology partners a priority. The ability to obtain components that support the low-precision formats used by quantization, which makes inference feasible on less powerful hardware, or that deliver high throughput for intensive workloads, largely depends on the innovation these suppliers bring to the market. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise to help assess these trade-offs in a structured manner.
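In its simplest form, the CapEx-versus-OpEx comparison reduces to a break-even calculation: how many months of avoided cloud spending it takes to recover the up-front hardware purchase. The sketch below is a deliberately simplified model, assuming constant monthly costs and ignoring depreciation, financing, hardware refresh cycles, and utilization; the function name and all figures are hypothetical.

```python
import math


def breakeven_months(capex: float, onprem_monthly_opex: float, cloud_monthly_cost: float) -> float:
    """Months until cumulative on-premise cost equals cumulative cloud cost.

    Simplified model (assumption): constant monthly costs, no depreciation,
    financing, hardware refresh, or utilization effects.
      on-premise total after m months: capex + onprem_monthly_opex * m
      cloud total after m months:      cloud_monthly_cost * m
    Setting the two equal gives m = capex / (cloud_monthly_cost - onprem_monthly_opex).
    """
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        # On-premise never catches up: running it costs as much as (or more than) the cloud.
        return math.inf
    return capex / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures, in arbitrary currency units.
    capex = 300_000       # up-front purchase of GPU servers, storage, networking
    onprem_opex = 5_000   # monthly power, cooling, and maintenance
    cloud_cost = 17_500   # monthly cost of equivalent cloud capacity
    months = breakeven_months(capex, onprem_opex, cloud_cost)
    print(f"Break-even after {months:.1f} months")
```

With these illustrative inputs, a 300,000-unit purchase recovered at 12,500 units per month of net savings breaks even after 24 months. If on-premise running costs meet or exceed the cloud bill, the function returns infinity, signalling that the investment is never recovered under this model.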

Future Outlook and Challenges for the AI Supply Chain

The multi-trillion-dollar AI wave will continue to drive innovation and demand for advanced components. Suppliers will face challenges related to production scalability, resource management, and the integration of new technologies such as silicon photonics and next-generation memory. The ability to sustain a steady flow of innovation and adapt quickly to market needs will be crucial to their success.

In this context, collaboration between companies like ASE Technology and its suppliers becomes essential to ensure that the industry can continue to develop and deploy increasingly powerful and efficient AI solutions. Supply chain transparency and resilience will not only be a competitive advantage but a necessity to sustain the growth of the entire AI ecosystem, providing the hardware foundations upon which future innovations, both in the cloud and on-premise, will be built.