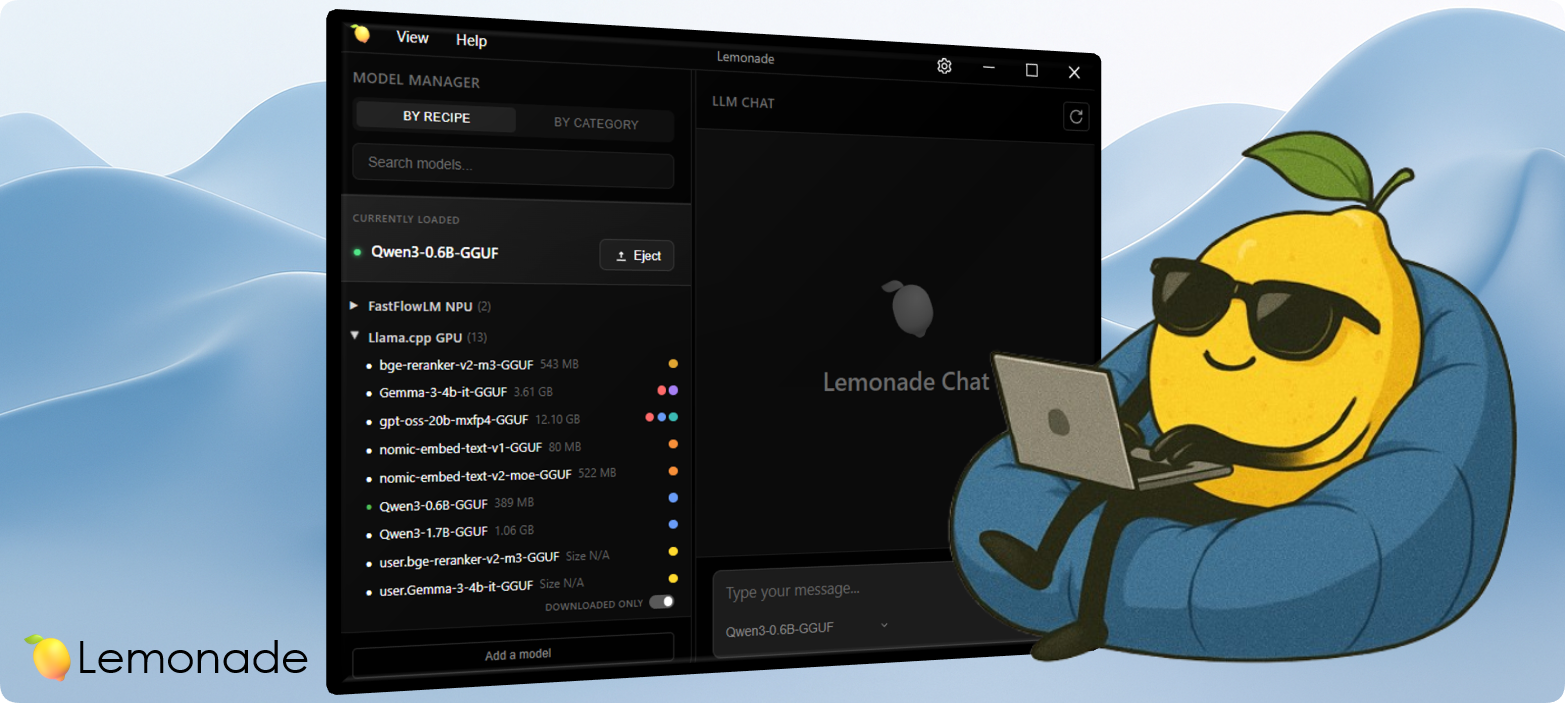

Lemonade, a local server for LLMs, has released version 9.1.4, which brings several new features and improvements.

GLM-4.7-Flash-GGUF support

The new release adds support for GLM-4.7-Flash-GGUF, with updated llama.cpp builds for Vulkan and CPU, along with ROCm support. This lets users benefit from the latest optimizations available for these models.

LM Studio compatibility

Lemonade is now compatible with LM Studio and can use the GGUF files already downloaded with it. To do so, start Lemonade and point it at the LM Studio models directory.
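To check which models such a directory would expose, the short Python sketch below lists the GGUF files found under a given folder. It does not call Lemonade or LM Studio; the function name `find_gguf_files` and the example path are hypothetical, and the actual location of the LM Studio models directory depends on the installation.

```python
from pathlib import Path


def find_gguf_files(models_dir):
    """Return the .gguf files under models_dir as sorted, relative POSIX paths.

    Hypothetical helper for illustration only; it is not part of Lemonade.
    """
    root = Path(models_dir).expanduser()
    if not root.is_dir():
        # A missing directory simply yields no models.
        return []
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() == ".gguf"
    )


if __name__ == "__main__":
    # Placeholder path: replace it with the real LM Studio models directory.
    for name in find_gguf_files("/path/to/lmstudio/models"):
        print(name)
```

Running the script prints one relative path per model file, which makes it easy to confirm that the directory passed to Lemonade actually contains the expected downloads.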

Support for new platforms

In addition to Ubuntu and Windows, Lemonade now officially supports Arch, Fedora, and Docker, thanks to contributions from the community. Official Docker images are available for every release.

Mobile companion app

A mobile app has been developed that connects to the Lemonade server and provides a chat interface with VLM (vision-language model) support. The app is already available on iOS and will be released on Android soon.

Configuration recipes

Model settings, such as whether to use ROCm or Vulkan and which llama.cpp arguments to pass, can now be saved to a JSON file that is applied automatically the next time the model is loaded.
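The general idea of saving settings once and reapplying them at load time can be sketched in a few lines of Python. This is only an illustration: the article does not describe Lemonade's actual recipe schema, so the keys `backend` and `llamacpp_args`, the file name, and both function names are assumptions.

```python
import json
from pathlib import Path


def save_recipe(path, backend, llamacpp_args):
    """Write per-model settings to a JSON file (hypothetical schema)."""
    recipe = {"backend": backend, "llamacpp_args": llamacpp_args}
    Path(path).write_text(json.dumps(recipe, indent=2), encoding="utf-8")
    return recipe


def load_recipe(path, defaults=None):
    """Return the defaults overridden by the saved recipe, if one exists."""
    settings = dict(defaults or {})
    recipe_file = Path(path)
    if recipe_file.is_file():
        settings.update(json.loads(recipe_file.read_text(encoding="utf-8")))
    return settings


if __name__ == "__main__":
    # Illustrative values only; the file name and keys are not Lemonade's.
    save_recipe("example-recipe.json", "vulkan", "--ctx-size 8192")
    print(load_recipe("example-recipe.json", defaults={"backend": "cpu"}))
```

Merging the saved file over a set of defaults means a model with no recipe still loads with sensible settings, while a saved recipe overrides only the fields it specifies.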

Upcoming developments

Work is under way on macOS support with llama.cpp+metal, image generation with stablediffusion.cpp, and a "marketplace" of local AI apps.

Lemonade positions itself as an open source alternative to solutions such as Ollama and LM Studio, with the goal of growing the ecosystem of local AI applications.
