The Future of Autonomous Engineering: An Editorial to Introduce MachinaOS

Allow me to write an editorial, this time about one of the projects I care about most: MachinaOS.

https://machinaos.ai

For decades, we have interacted with our machines via imperative commands, clicking buttons and typing exact scripts to achieve our goals. Today, we are transitioning to an era of intent-driven execution. To fully understand this shift, it is crucial to observe MachinaOS. As an ideal starting point, the machinaos.ai website serves as a presentation and introduction portal, guiding users through the core vision and offering direct links to demos to experience the environment firsthand.

However, beneath the elegant surface of the introductory site lies a robust, complex, and enterprise-grade architecture. This editorial serves as an in-depth analysis and pitch-deck style narrative, unveiling the sophisticated layers of MachinaOS.

- The Vision: A Local-First, Intent-Driven Operating Layer

MachinaOS is not a simple chatbot connected to a dashboard, nor a replacement for your main operating system's kernel. It is the world's first intent-driven operating layer (at least to my knowledge), designed specifically for developer and technical workflows. Elegantly positioning itself above your host operating system, MachinaOS translates natural language goals into secure, inspectable, and tool-executed system actions.

In a classic system, the workflow is: User → App → OS → Hardware. In MachinaOS, the workflow evolves into: User → Intent (Goal) → Planner → Policies → Executor → Tools → Host OS.

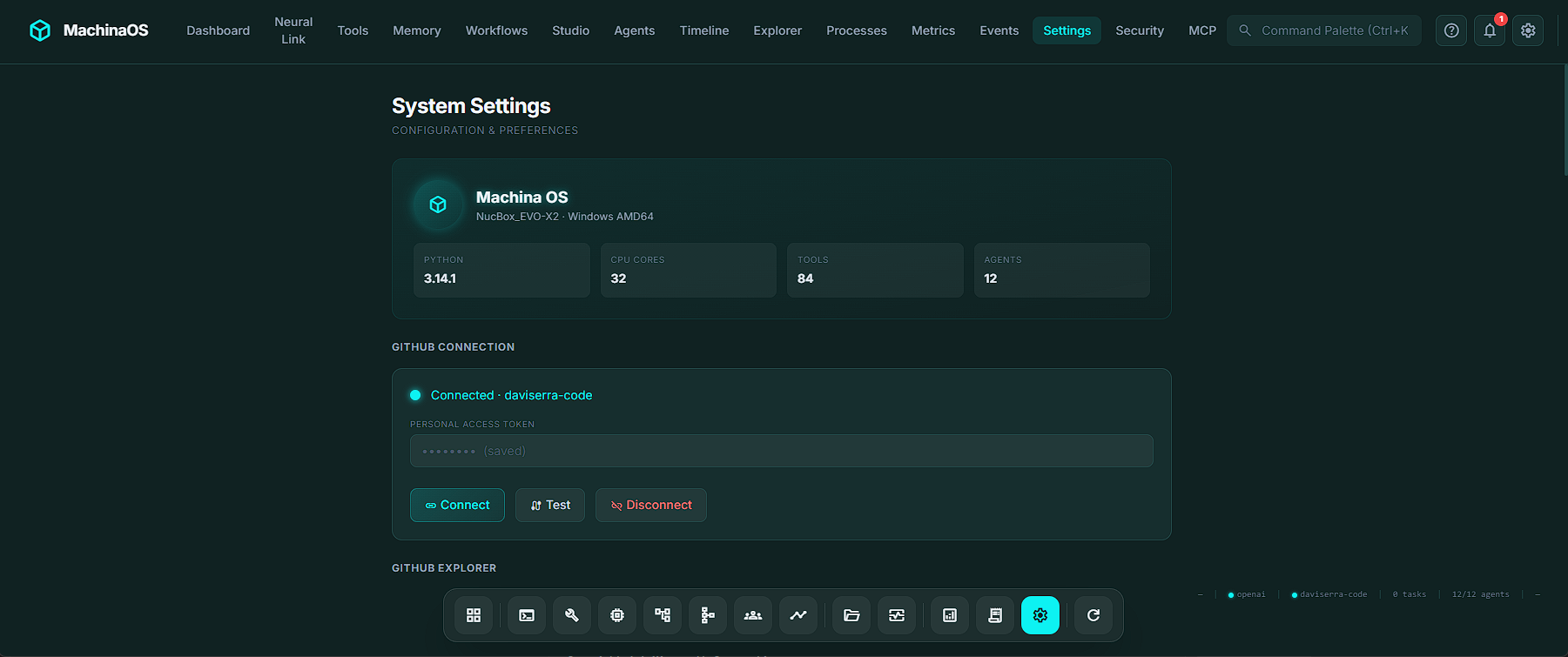

The system understands exactly what you want to achieve, builds a structured plan, requests your approval for risky steps, executes with real tools, and records an immutable, inspectable history. Crucially, it is local-first; its central orchestration, plans, secrets, logs, and agents reside on your machine, utilizing local LLM inference via Ollama or opting for cloud providers as needed.
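To make the flow above more concrete, here is a minimal sketch of an intent passing through a planner, an approval gate for risky steps, an executor, and an append-only history. Every name in it (`Step`, `History`, `plan`, `execute`, the tool names) is hypothetical and chosen for illustration; it does not reflect MachinaOS's actual API, and the planner is hard-coded where a real one would consult an LLM.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical names throughout: an illustration of the flow, not MachinaOS's API.


@dataclass
class Step:
    tool: str            # name of the tool to call
    args: dict           # keyword arguments for that tool
    risky: bool = False  # risky steps need explicit approval


@dataclass
class History:
    entries: list = field(default_factory=list)

    def record(self, step: Step, status: str) -> None:
        # Append-only: entries are added, never edited or removed.
        self.entries.append((step.tool, status))


def plan(intent: str) -> list[Step]:
    # Stand-in planner: returns a fixed plan regardless of the intent text.
    return [
        Step("git.status", {}),
        Step("shell.run", {"cmd": "rm -rf build"}, risky=True),
    ]


def execute(
    steps: list[Step],
    tools: dict[str, Callable[..., object]],
    approve: Callable[[Step], bool],
    history: History,
) -> None:
    for step in steps:
        # Policy gate: a risky step runs only if the operator approves it.
        if step.risky and not approve(step):
            history.record(step, "denied")
            continue
        tools[step.tool](**step.args)
        history.record(step, "done")
```

Calling `execute(plan("clean the build"), tools, approve, history)` with an `approve` callback that always declines would run only the safe step and log the risky one as denied, which is the kind of trail the inspectable history is meant to provide.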

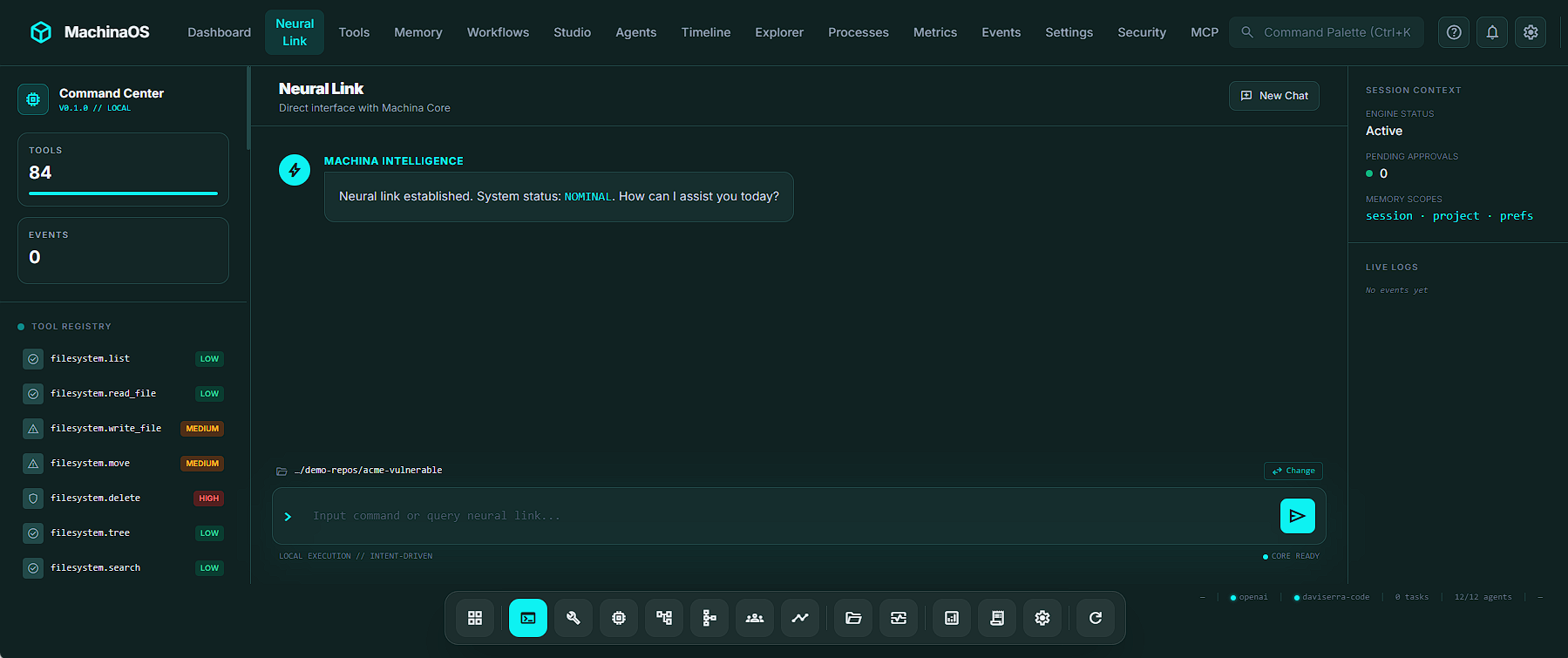

- The Neural Link: The Gateway to Intent

Interaction begins with the Neural Link, MachinaOS's conversational and command interface. More contextualized than a standard chat window, the Neural Link is designed for speed and precision.

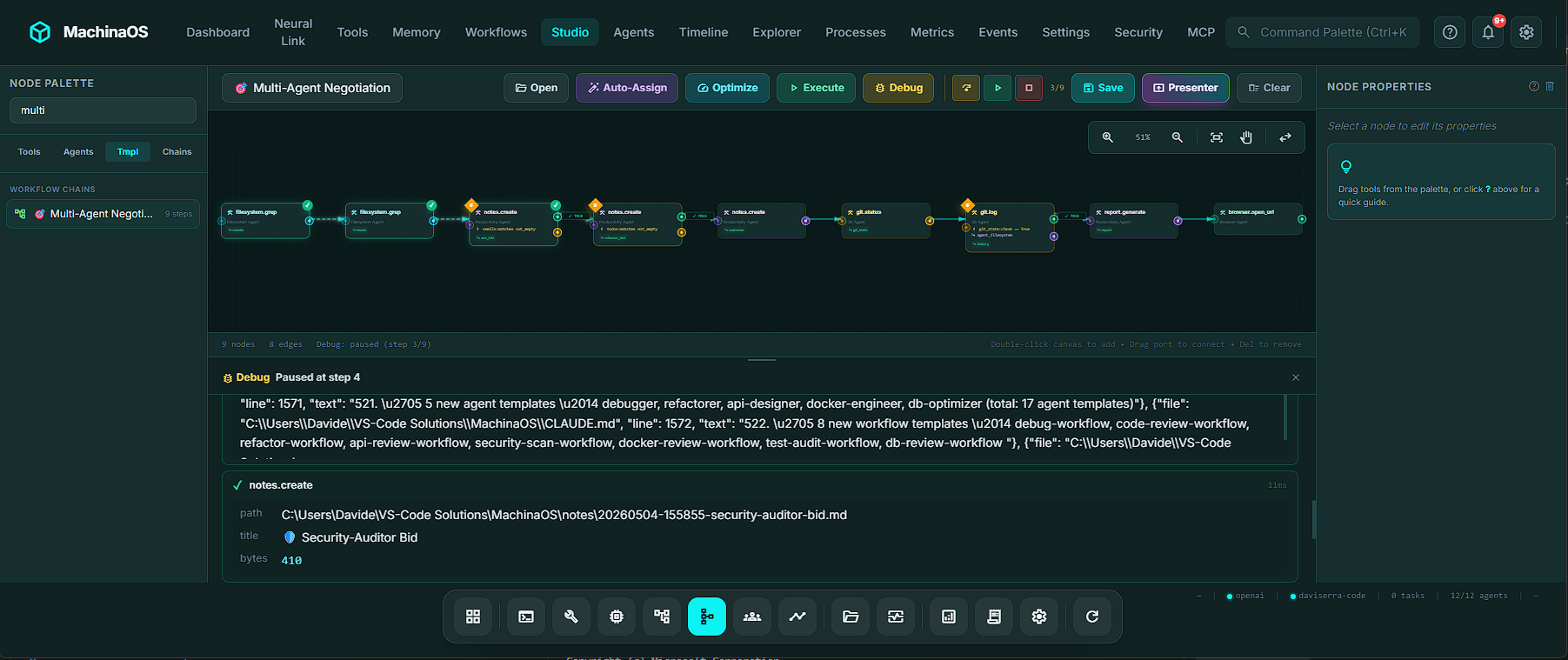

- The Agent Fleet: A Specialized Digital Workforce

Automation in MachinaOS is not managed by a monolithic AI script; it is distributed across an Agent Fleet. By default, MachinaOS includes built-in specialized agents (Filesystem, Git, Shell, System, MCP). Users can dynamically expand this fleet using over 21 blueprint models, instantly activating pre-configured personas such as the Security Auditor, the Code Reviewer, or the Release Manager.

What makes this fleet enterprise-grade is its Multi-Agent Coordination Framework. Instead of a rigid script, the fleet exhibits intelligent, emergent behavior, guided by five orchestration strategies (Single, Round Robin, Capability Score, Fallback, and Pipeline).
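The five strategy names suggest fairly standard dispatch patterns. The sketch below shows one plausible reading of each, assuming an agent is simply a function from a task string to a result string. The `Agent` type, the function names, and the exact semantics are my assumptions for illustration, not MachinaOS's implementation.

```python
from itertools import cycle
from typing import Callable

# Hypothetical model: an agent is a callable taking a task and returning a result.
Agent = Callable[[str], str]


def single(agent: Agent, task: str) -> str:
    # Single: one designated agent handles the task.
    return agent(task)


def round_robin(agents: list[Agent]) -> Agent:
    # Round Robin: successive tasks rotate through the agents in order.
    rotation = cycle(agents)
    return lambda task: next(rotation)(task)


def capability_score(
    agents: list[Agent], score: Callable[[Agent, str], float], task: str
) -> str:
    # Capability Score: the agent with the highest score for this task wins.
    best = max(agents, key=lambda agent: score(agent, task))
    return best(task)


def fallback(agents: list[Agent], task: str) -> str:
    # Fallback: try agents in order and return the first successful result.
    last_error = None
    for agent in agents:
        try:
            return agent(task)
        except Exception as exc:
            last_error = exc
    raise RuntimeError("all agents failed") from last_error


def pipeline(agents: list[Agent], task: str) -> str:
    # Pipeline: each agent's output becomes the next agent's input.
    result = task
    for agent in agents:
        result = agent(result)
    return result
```

Under this reading, the strategies differ only in how a task is routed, so the agents themselves stay unchanged when the orchestration policy is swapped.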

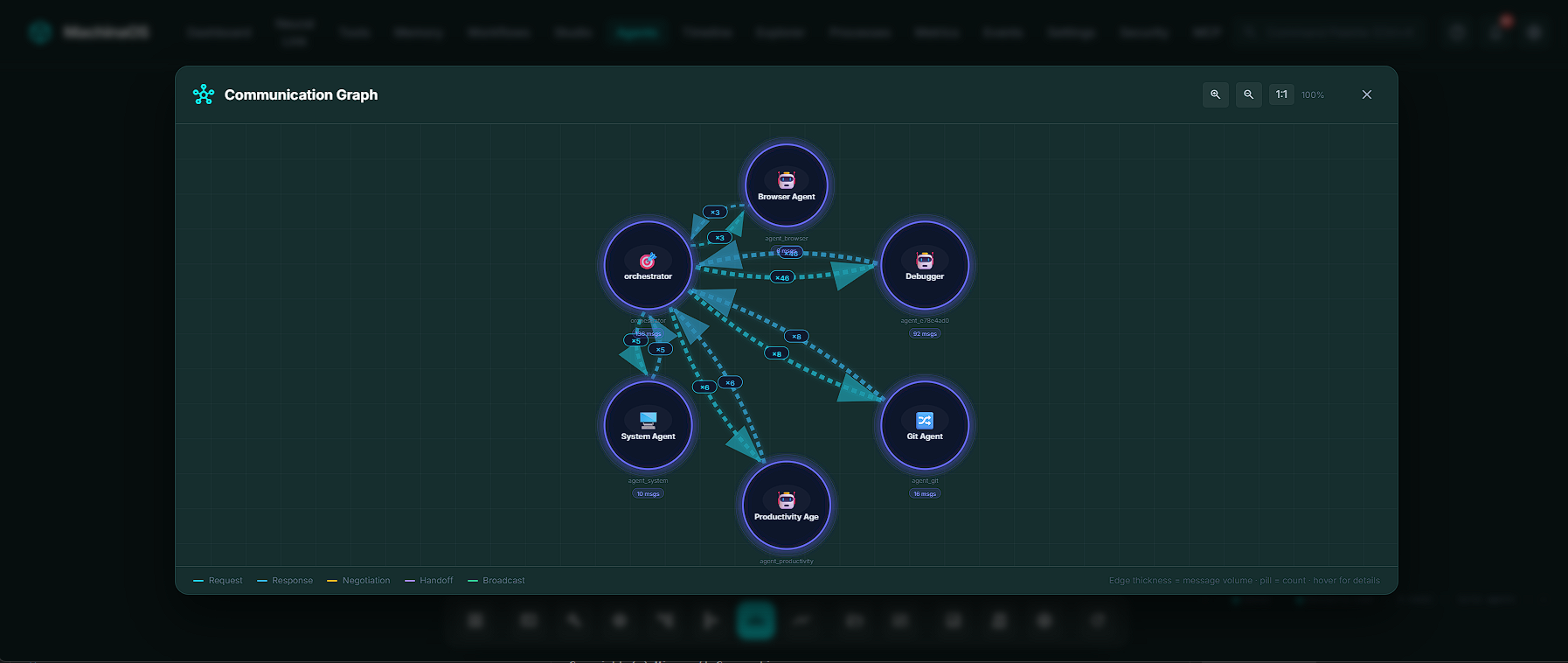

- The Communication Graph: Visualizing Autonomy

In complex multi-agent systems, observability is crucial. MachinaOS solves the AI "black box" problem with its dedicated Communications Dashboard.

The dashboard features a real-time SVG Communication Graph that visualizes every interaction between agents. Agents are arranged as circular nodes, connected by color-coded directed arcs: cyan for direct requests, amber for negotiations, purple for handoffs, and emerald for broadcast queries.

Hovering over any arc reveals precise tooltips showing message count and sequence. Below the graph, a unified activity feed provides a filterable history of every event, allowing human operators to group messages into conversation threads via correlation IDs. This ensures that when the AI workforce collaborates on a codebase, their exact reasoning and data flow can be inspected at any time.
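As a rough illustration of the data behind such a graph, the sketch below groups messages into threads by correlation ID and counts messages per colored arc. The `Message` fields and function names are hypothetical; only the four message kinds and their colors come from the description above.

```python
from collections import defaultdict
from dataclasses import dataclass

# Color mapping as described in the text; the dictionary keys are hypothetical labels.
ARC_COLORS = {
    "request": "cyan",
    "negotiation": "amber",
    "handoff": "purple",
    "broadcast": "emerald",
}


@dataclass(frozen=True)
class Message:
    seq: int             # global sequence number
    sender: str
    receiver: str
    kind: str            # one of the ARC_COLORS keys
    correlation_id: str  # ties related messages into one thread


def group_threads(messages: list[Message]) -> dict[str, list[Message]]:
    # Group messages by correlation ID, ordered by sequence within each thread.
    threads = defaultdict(list)
    for message in sorted(messages, key=lambda m: m.seq):
        threads[message.correlation_id].append(message)
    return dict(threads)


def arc_counts(messages: list[Message]) -> dict[tuple[str, str, str], int]:
    # Count messages per directed arc: (sender, receiver, color).
    counts = defaultdict(int)
    for message in messages:
        counts[(message.sender, message.receiver, ARC_COLORS[message.kind])] += 1
    return dict(counts)
```

The per-arc counts correspond to what a tooltip would show, while the grouped threads correspond to the conversation view in the activity feed.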

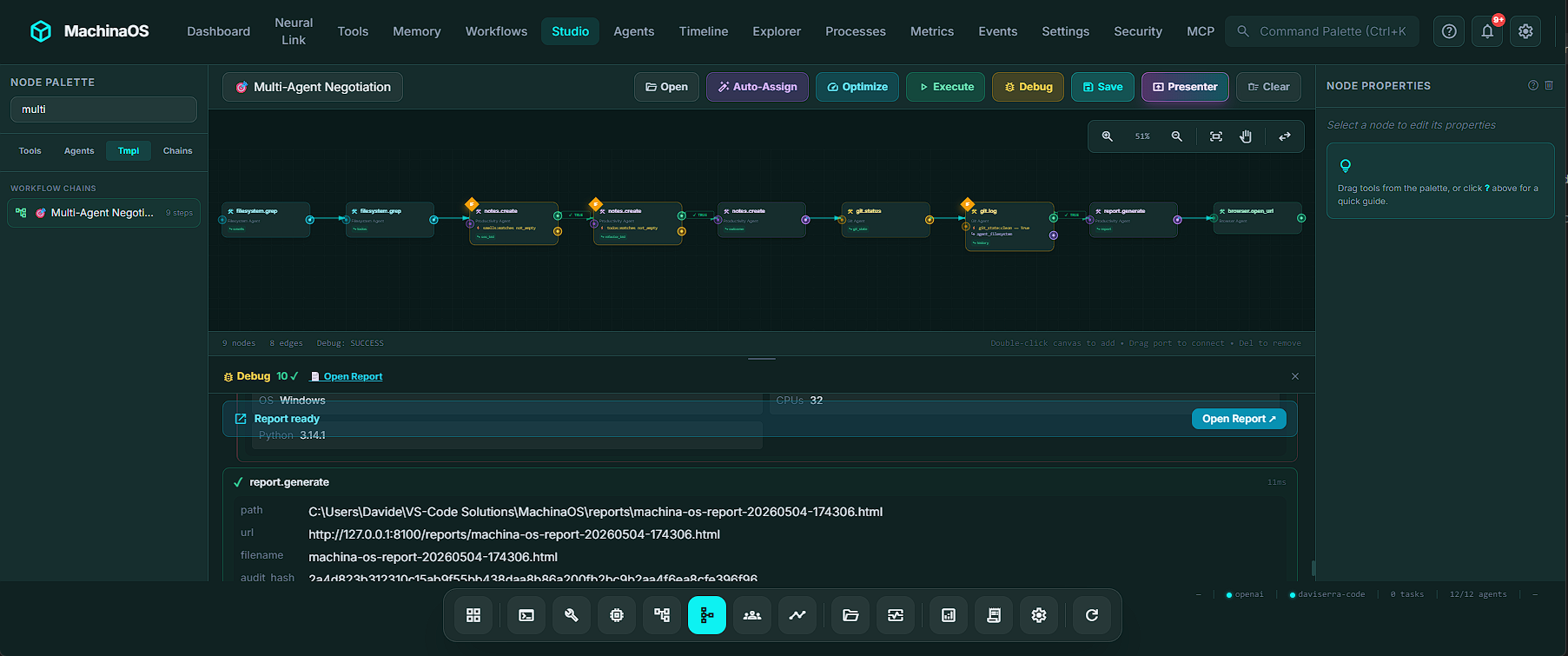

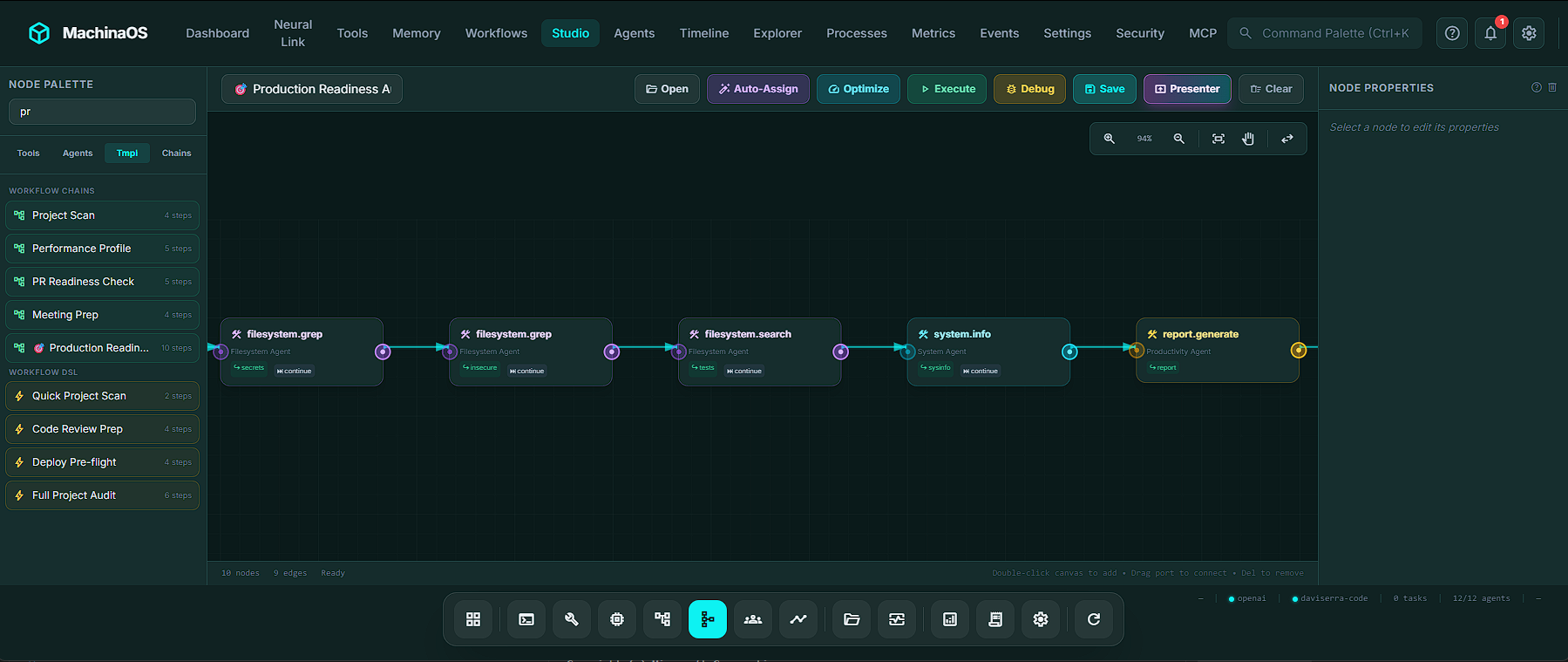

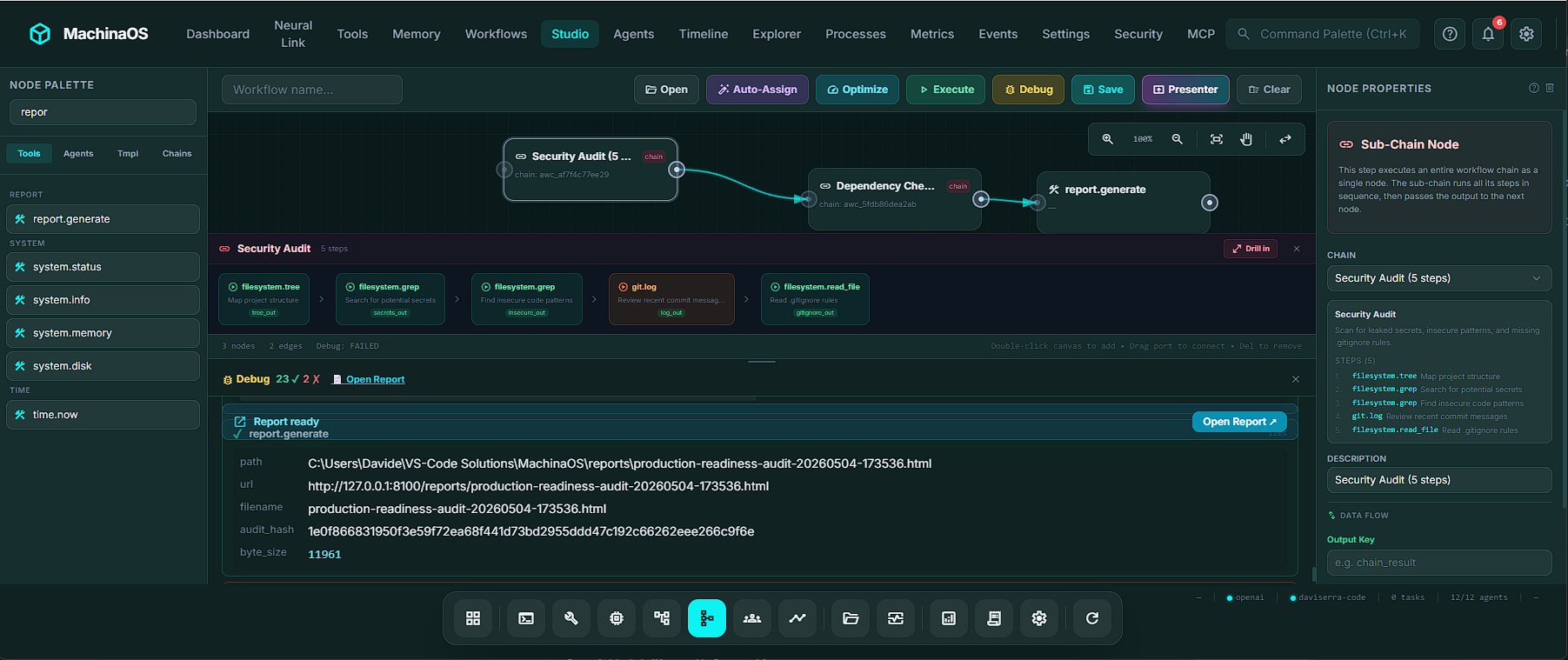

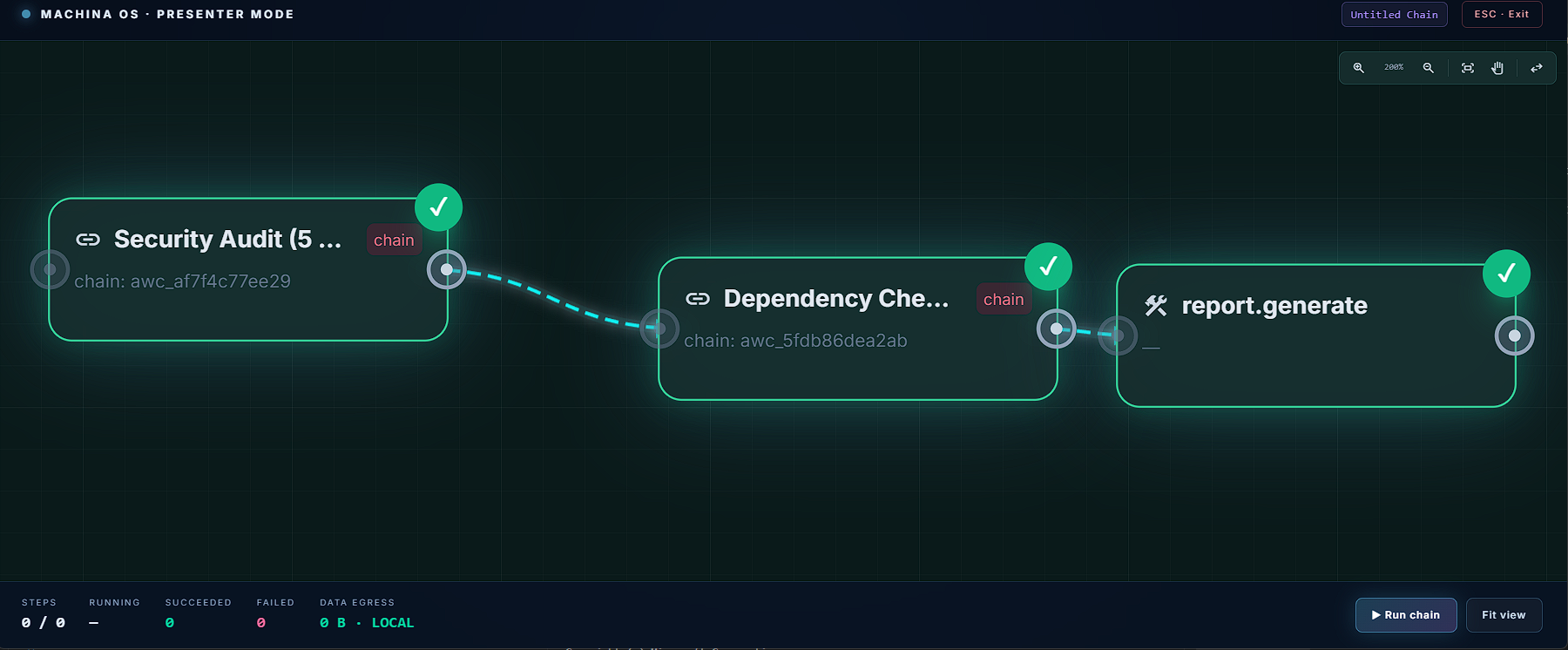

- Workflow Studio and Chain Composition: Visualizing Execution

For repeatable engineering routines, MachinaOS offers the Workflow Studio, a striking, node-based visual workflow composer.

- MCP Integration: The Universal Bridge

MachinaOS integrates deeply with Anthropic's Model Context Protocol (MCP), including MCP servers from other providers. Fundamentally, MachinaOS operates in a dual role.

As an MCP Client: MachinaOS natively connects to external MCP servers (such as GitHub, Postgres, Slack, and Anthropic's memory server). Once connected, external tools are integrated into MachinaOS as first-class citizens. They populate the visual Workflow Studio, respond to Neural Link inputs, and even undergo risk policy checks (requiring human approval), all without the user writing a single line of code.

As an MCP Provider: In reverse, MachinaOS acts as an MCP Server for external clients like Claude Desktop or Cursor. By connecting Claude to MachinaOS via Server-Sent Events (SSE), Claude gains the ability to trigger all 52 of Machina's native tools, read from 17 live system resources (like workspace status or agent health), and utilize 4 specialized prompt templates (like analyze_code or scrub_credentials).

None of this excludes integration, at any level, with the major cloud-hosted AI models, or with LM Studio, OpenRouter, and of course Ollama.

On-premise AI is one of the approaches we care about most.

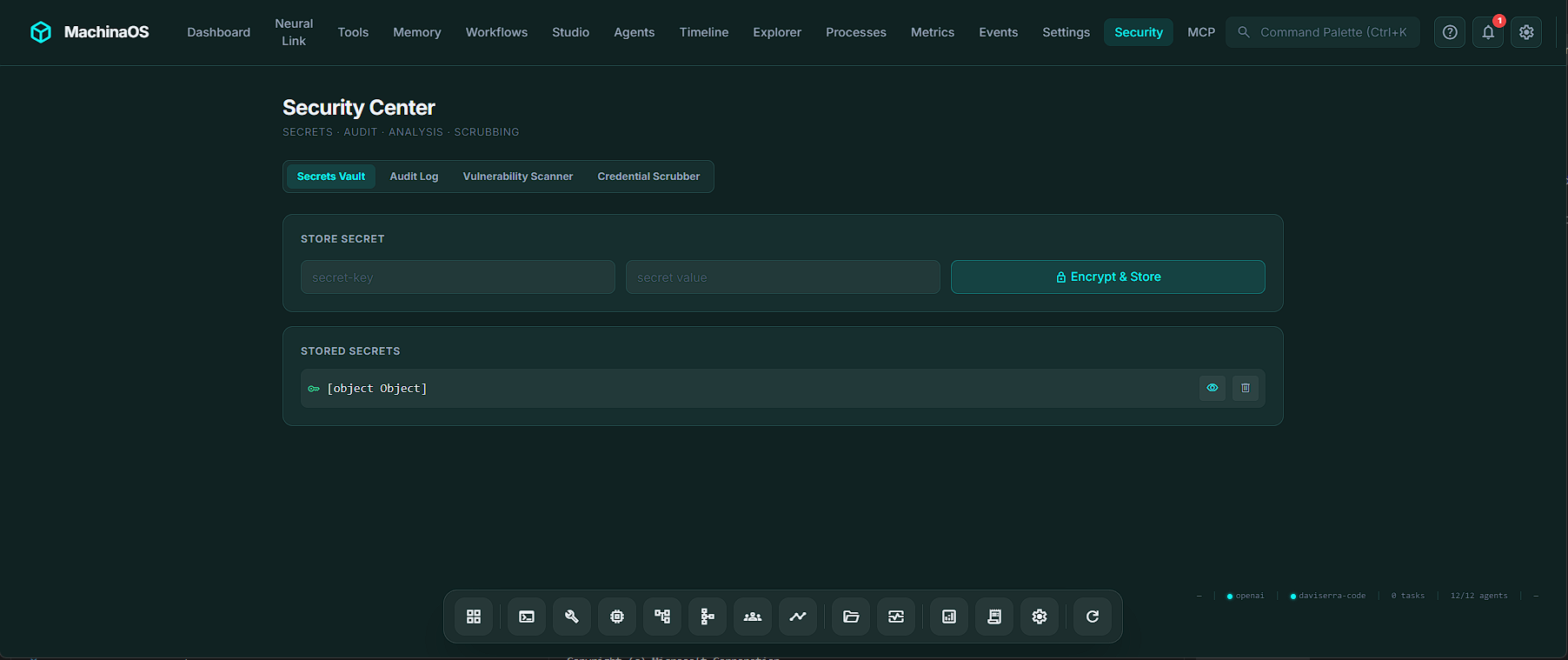

- Security Center: Enterprise-Grade Governance

Because MachinaOS acts on the host system, security is woven into its foundational DNA, centralized in the Security Center.

- The Machina Intelligence Ecosystem

MachinaOS does not exist in a vacuum. It is a flagship layer of a broader vision spearheaded by Machina Intelligence Inc., pioneering neural intelligence systems for an autonomous world.

By visiting machina-intelligence.com, enterprises and visionary creators can access a wider suite of specialized AI solutions designed to transform industries. This powerful ecosystem includes:

AI-Radar: An independent observatory dedicated to analyzing AI models, local LLM stacks, hardware signals, and emerging AI market trends.

Shopfloor Copilot: An AI-enhanced Manufacturing Execution System (MES) platform providing intelligent decision support and on-prem industrial diagnostics.

Teyra: An AI assistant workspace focused on complex architecture reviews, prompt engineering, and expert workflows.

FantacalcioAI: A specialized intelligence suite leveraging AI for lineup optimization, scouting analytics, and live market insights.

- MachinaOS as an App

MachinaOS can also be distributed as a local app: a native desktop shell for Windows, Linux, and macOS.

The Tauri 2-based desktop app ships as a self-contained installer (MSI / NSIS / DEB / AppImage / DMG) with a bundled Python runtime and all dependencies, so the target machine needs zero prerequisites. It runs in a frameless glassmorphic window with a custom titlebar, minimize/maximize/close controls, and system-tray hide-to-background support.

- Conclusion: Engineering the Future

MachinaOS represents a leap forward in how we interact with our digital environments. It moves us past the era of the "chat window" and into the reality of the "governed workforce." With its Neural Link, intuitive Workflow Studio, self-healing Agent Fleet, transparent Communication Graph, seamless MCP integration, and uncompromised Security Center, MachinaOS is the definitive intent-driven operating layer.

As part of the expansive machina-intelligence.com ecosystem, it stands ready to power the future of autonomous workflows. For technical teams, engineers, and developers ready to transform intent into auditable execution, the future is already running locally on MachinaOS.
