Introduction

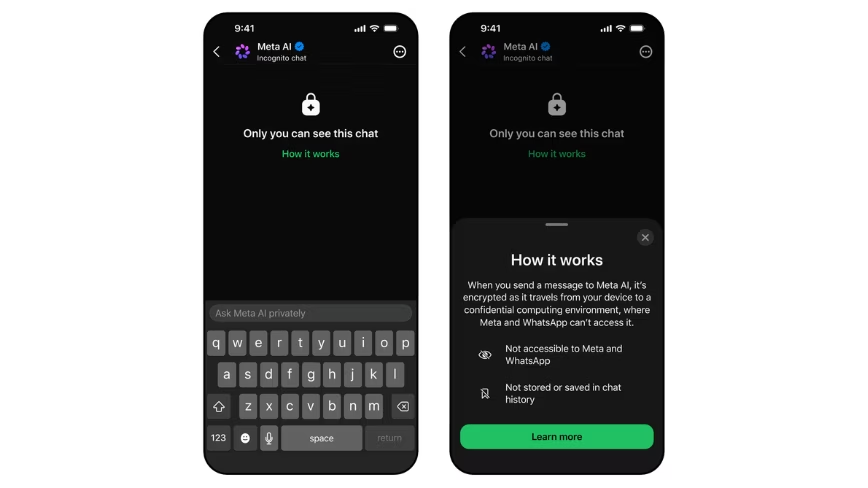

Meta has released a new mode for its AI assistant called "Incognito Chat." It is now available on both WhatsApp and the Meta AI app and marks a significant step in how the company handles privacy in conversational AI. Incognito Chat targets one of the main concerns with AI assistants: how conversation data is stored and who can access it.

Meta's move reflects growing awareness in the tech industry of the need to balance Large Language Model (LLM) innovation with user confidentiality. Many AI-powered assistants process and often retain interaction data, whether to improve their performance or for other purposes. Incognito Chat departs from this pattern by offering an environment where privacy is prioritized by design.

Technical Details and Implementation

Incognito Chat runs inside a Meta-owned "Private Processing enclave," an isolated environment designed to keep conversations between the user and Meta AI confidential. A fundamental feature is that conversations are deleted automatically by default, with no server-side record retained. Once the interaction is complete, the data is no longer accessible.
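To make the delete-by-default idea concrete, here is a minimal Python sketch of a session that keeps its turns only in memory and clears them when the session ends. It is an illustration under stated assumptions, not Meta's implementation: the `EphemeralChat` class, its methods, and the echo reply that stands in for a model call are all hypothetical.

```python
class EphemeralChat:
    """Hypothetical in-memory chat session; nothing is written to disk."""

    def __init__(self):
        self._turns = []      # (user_message, reply) pairs, memory only
        self.closed = False

    def send(self, user_message: str) -> str:
        if self.closed:
            raise RuntimeError("session is closed")
        reply = f"echo: {user_message}"  # stand-in for a real model call
        self._turns.append((user_message, reply))
        return reply

    def history(self) -> list:
        # Return a copy so callers cannot keep a live reference to the turns.
        return list(self._turns)

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc, tb):
        # Deletion is the default: the turns are cleared on every exit,
        # including when an exception ends the session early.
        self._turns.clear()
        self.closed = True
        return False


with EphemeralChat() as chat:
    chat.send("What is confidential computing?")
    print(len(chat.history()))  # one turn while the session is open

print(chat.history())  # empty once the session has ended
```

Tying deletion to the end of the session, rather than to an explicit user action, is what makes it a default rather than an option. A real system would also have to account for logs, caches, and backups, which this sketch does not model.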

The architecture of a "Private Processing enclave" typically involves the use of confidential computing technologies, where data is processed in a protected environment isolated even from the service provider. This approach is crucial for data sovereignty and compliance, especially in regulated sectors. Meta's promise that even the company itself cannot read the content of chats in Incognito mode underscores its commitment to an enhanced privacy model, which could have significant implications for LLM deployment in sensitive enterprise contexts.
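Confidential computing designs generally rely on attestation: before sending data, a client checks that the enclave is running the code it expects. The Python sketch below shows only the comparison step, under simplifying assumptions. The function names and the sample byte strings are hypothetical, and a real deployment would verify a hardware-signed attestation report against a vendor certificate chain rather than compare a bare hash.

```python
import hashlib
import hmac


def measure(code_image: bytes) -> str:
    """Return a SHA-256 measurement of the code an enclave claims to run."""
    return hashlib.sha256(code_image).hexdigest()


def is_trusted(reported: str, expected: str) -> bool:
    """Compare the reported measurement with the expected one in constant time."""
    return hmac.compare_digest(reported, expected)


def send_if_attested(message: str, reported: str, expected: str) -> bool:
    """Release the message only if the enclave measurement matches."""
    if not is_trusted(reported, expected):
        return False  # refuse to send data to unverified code
    # A real client would now encrypt the message to a key bound to the enclave.
    return True


# Hypothetical published measurement of an audited build.
expected = measure(b"audited-enclave-build")

print(send_if_attested("hello", measure(b"audited-enclave-build"), expected))
print(send_if_attested("hello", measure(b"modified-build"), expected))
```

The point of the check is that trust rests on a verifiable measurement rather than on the operator's word, which is what lets a provider claim that it cannot read the data itself.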

Implications for Privacy and On-Premise Deployment

The introduction of a private chat mode by a player like Meta highlights the increasing demand for AI solutions that respect privacy. For businesses, particularly those operating in sectors with stringent regulatory requirements such as finance or healthcare, the ability to use LLMs without compromising data confidentiality is fundamental. Although Meta's solution is cloud-based, the concept of a "Private Processing enclave" resonates with the needs of those evaluating an on-premise deployment.

Organizations that choose self-hosted or air-gapped solutions for their LLMs often do so precisely to keep complete control over data and infrastructure. The challenge is to replicate the capabilities and scalability of cloud services while maintaining data sovereignty. Meta's approach, while not an on-premise deployment, suggests that confidential processing technology is mature enough for large-scale consumer use and can serve as a reference point for those designing AI architectures with high privacy and security standards. For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess trade-offs between control, Total Cost of Ownership (TCO), and performance.

Future Prospects and User Trust

Incognito Chat mode represents an attempt by Meta to rebuild user trust in the era of AI assistants. Transparency about how data is handled and the assurance that conversations are not stored or read are crucial elements for the widespread adoption of these technologies. This approach could prompt other LLM developers to consider similar solutions, raising privacy standards across the entire ecosystem.

The success of initiatives like Incognito Chat will depend on how users and security experts perceive it and on whether its effectiveness can be independently verified. In a landscape where data management is constantly under scrutiny, offering tools that promise granular control over one's privacy is a step forward. It remains to be seen how this functionality will evolve and whether it will influence LLM deployment strategies in enterprise contexts, where data protection is a top priority.
