The goal of Harness Engineering is to shape a model's intelligence for specific tasks, optimizing performance, token efficiency, and latency. This approach focuses on building tools around the model to achieve these goals.

Trace Analysis for Debugging

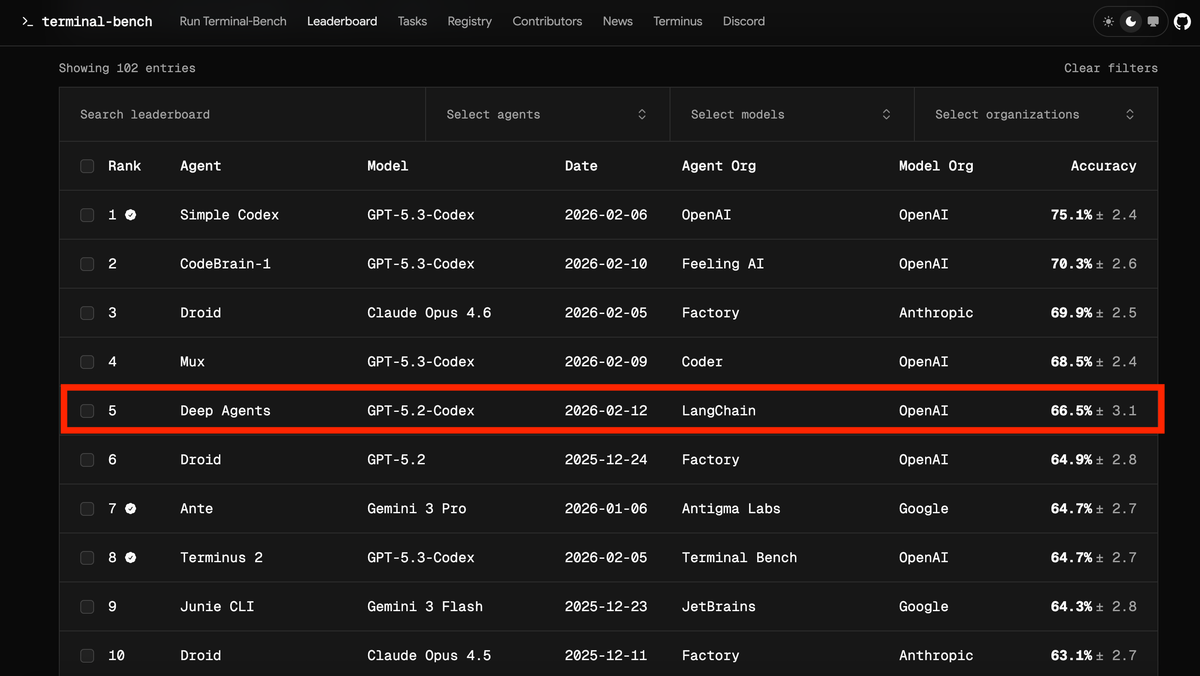

LangChain uses traces to understand where agents fail at scale. Analyzing the inputs and outputs of each run shows where to improve. A simple iterative process raised the score of the deepagents-cli coding agent on Terminal Bench 2.0 by 13.7 points, from 52.8% to 66.5%, by changing only the harness and keeping the model (gpt-5.2-codex) unchanged.

Experiment Setup

Terminal Bench 2.0 was used to evaluate the agent's coding capabilities. Harbor was used to orchestrate the runs, managing sandboxes (Daytona), interactions with the agent, and verification processes. Every action of the agent was stored in LangSmith, including metrics such as latency, token count, and costs.

Key Components of the Harness

An agent's harness offers several levers for intervention: system prompts, tools, middleware, skills, sub-agent delegation, and memory systems. Optimization focused on three main aspects: System Prompt, Tools, and Middleware (hooks around model and tool calls).

Trace Analysis Skill

Trace analysis was packaged as an agent skill to make it repeatable. The workflow involves:

- Retrieving experiment traces from LangSmith.

- Spawning parallel agents for error analysis; the main agent synthesizes their results and suggestions.

- Aggregating feedback and making targeted changes to the harness.

This process is similar to boosting in that each round focuses on the errors from previous runs. A human in the loop can help by verifying and discussing the proposed changes, which guards against overfitting the harness to the benchmark.
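
The fan-out and aggregation steps above can be sketched in a few lines. This is a minimal illustration, not the actual skill: the `Trace` record, the `analyze_failure` function (a keyword-matching stand-in for an analysis sub-agent), and the failure labels are all hypothetical, and no LangSmith API is called.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Trace:
    """Hypothetical, simplified view of one benchmark run."""
    task_id: str
    passed: bool
    transcript: str


def analyze_failure(trace: Trace) -> str:
    """Stand-in for an analysis sub-agent: returns one failure label."""
    text = trace.transcript.lower()
    if "timeout" in text:
        return "exceeded time limit"
    if "no tests run" in text:
        return "missing verification"
    return "reasoning error"


def summarize_failures(traces: list[Trace]) -> list[tuple[str, int]]:
    """Analyze failed traces in parallel, then count labels, most frequent first."""
    failed = [t for t in traces if not t.passed]
    with ThreadPoolExecutor(max_workers=4) as pool:
        labels = list(pool.map(analyze_failure, failed))
    return Counter(labels).most_common()
```

The ranked labels play the role of the aggregated feedback: the most frequent failure mode is the first candidate for a targeted harness change.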

Implemented Improvements

Automated Trace analysis made it possible to identify areas where agents made mistakes, such as reasoning errors, failure to follow instructions, lack of testing and verification, and exceeding time limits.

Build & Self-Verify

Today's models can improve their own work when given feedback. Self-verification lets an agent act on that feedback during execution. Guidance was added to the system prompt on how to approach problem solving, including:

- Planning & Discovery.

- Build.

- Verify.

- Fix.

Testing was emphasized because test results drive the changes made in each iteration. A PreCompletionChecklistMiddleware intercepts the agent before it exits and reminds it to verify its work against the task specification.
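
A pre-completion hook of this kind might look like the sketch below. It is an assumption-laden illustration rather than the deepagents implementation: the class name, the `on_finish_attempt` method, the message format, and the checklist wording are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical reminder text; the real checklist wording is not given in the article.
CHECKLIST = (
    "Before finishing, re-read the task specification, run the tests, "
    "and confirm every requirement is met."
)


@dataclass
class PreCompletionChecklist:
    """Blocks the first exit attempt and injects a verification reminder."""
    reminded: bool = False

    def on_finish_attempt(self, messages: list[dict]) -> bool:
        """Return True if the agent may exit, False if it must keep working."""
        if self.reminded:
            return True  # the reminder was already delivered once
        self.reminded = True
        messages.append({"role": "user", "content": CHECKLIST})
        return False
```

Reminding only once is a design choice made here to avoid trapping the agent in an endless loop of exit attempts.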

Environmental Context

Harness engineering includes building a delivery mechanism for context. A LocalContextMiddleware maps the directory structure and locates available tools such as Python installations. Injecting this context reduces errors and helps the agent onboard into its environment. Agents were also told to write testable code, with the prompt stressing that their work will be measured against programmatic tests. Time budget warnings were added to push the agent to finish its work and move on to verification.
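
As a rough sketch of what such context injection could involve, the snippet below builds a short environment summary and a time budget warning using only the Python standard library. The function names, the default tool list, the 80% threshold, and the message wording are assumptions made for illustration.

```python
import os
import shutil
from typing import Optional


def build_local_context(root: str, tools: tuple = ("python3", "git", "make")) -> str:
    """Summarize the top-level directory entries and which tools are on PATH."""
    lines = [f"Working directory: {root}", "Entries:"]
    for name in sorted(os.listdir(root)):
        suffix = "/" if os.path.isdir(os.path.join(root, name)) else ""
        lines.append(f"  {name}{suffix}")
    lines.append("Tools:")
    for tool in tools:
        lines.append(f"  {tool}: {shutil.which(tool) or 'not found'}")
    return "\n".join(lines)


def time_budget_warning(elapsed_s: float, budget_s: float,
                        threshold: float = 0.8) -> Optional[str]:
    """Return a nudge once the given fraction of the time budget is used up."""
    if elapsed_s >= threshold * budget_s:
        remaining = max(0, int(budget_s - elapsed_s))
        return f"About {remaining}s remain. Wrap up and move on to verification."
    return None
```

The returned strings would be injected into the agent's context, for example at startup for the environment summary and before a model call for the warning.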

Reconsidering Plans

A LoopDetectionMiddleware tracks the number of changes per file via tool call hooks. It adds context such as "...consider reconsidering your approach" after N changes to the same file.
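
A minimal sketch of this kind of edit counter follows. The class, the `after_tool_call` hook, the tool names, and the `path` argument are hypothetical, the nudge wording is illustrative apart from the phrase quoted above, and repeating the nudge at every multiple of N is an assumption.

```python
from collections import Counter
from typing import Optional


class LoopDetector:
    """Counts edits per file and nudges the agent every `max_edits` edits."""

    # Hypothetical names for tools that modify files.
    EDIT_TOOLS = {"edit_file", "write_file"}

    def __init__(self, max_edits: int = 5) -> None:
        self.max_edits = max_edits
        self.edits: Counter = Counter()

    def after_tool_call(self, tool_name: str, args: dict) -> Optional[str]:
        """Return extra context to inject, or None if no nudge is needed."""
        path = args.get("path")
        if tool_name not in self.EDIT_TOOLS or path is None:
            return None
        self.edits[path] += 1
        count = self.edits[path]
        if count % self.max_edits == 0:
            return (f"You have edited {path} {count} times; "
                    "consider reconsidering your approach.")
        return None
```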

Compute for Reasoning

Each sub-task needs a decision about how much compute to dedicate to it. gpt-5.2-codex has 4 reasoning modes: low, medium, high, and xhigh. Extra reasoning was found to help with planning and verification. A "reasoning sandwich" approach (xhigh-high-xhigh) was used as a baseline.
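
One way to picture the sandwich is as a lookup from work phase to reasoning mode. The phase names and their mapping to plan, build, and verify are an interpretation of the description above, not a documented configuration.

```python
# Hypothetical phase names; the xhigh-high-xhigh ordering follows the text.
REASONING_BY_PHASE = {
    "plan": "xhigh",    # heavy reasoning up front
    "build": "high",    # cheaper reasoning while implementing
    "verify": "xhigh",  # heavy reasoning again for final checks
}


def reasoning_effort(phase: str, default: str = "high") -> str:
    """Pick the reasoning mode for a phase, falling back to a default."""
    return REASONING_BY_PHASE.get(phase, default)
```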

Practical Takeaways

- Engineer context on behalf of agents.

- Help agents self-verify their work.

- Use traces as a feedback signal.

- Detect and fix bad patterns in the short term.

- Tailor harnesses to models.

For those evaluating on-premise deployments, there are trade-offs to consider. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs.
