Nvidia and Nanya: A Strategic Partnership for AI Racks

Nvidia, the leading supplier of GPUs for artificial intelligence, has partnered with Nanya Technology to integrate advanced memory into its AI racks. The partnership aims to meet growing demand for compute capacity and, in particular, for high-density memory, which is essential for training and inference of Large Language Models (LLMs) and other complex AI workloads. The choice of LPDDR (Low Power Double Data Rate) memory from Nanya for these systems signals a clear move towards energy efficiency and compactness.

The memory density offered by this integration is remarkable: the capacity of a single rack is estimated to equal that of 4,500 smartphones. The comparison is only an analogy, but it conveys the scale of data these racks are designed to handle, a critical factor for companies developing and deploying AI solutions at scale.
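To make the smartphone comparison concrete, the short sketch below converts "4,500 smartphones" into terabytes. The article does not say how much memory each phone is assumed to have, so the per-phone figures (8, 12, and 16 GB) are purely illustrative assumptions, and the function name is hypothetical.

```python
def rack_capacity_tb(phones: int, gb_per_phone: float) -> float:
    """Turn a 'number of smartphones' comparison into decimal terabytes.

    gb_per_phone is an assumption: the source gives no per-phone figure.
    """
    return phones * gb_per_phone / 1000  # 1 TB = 1000 GB


if __name__ == "__main__":
    # Illustrative per-phone memory sizes, not values from the article.
    for gb in (8, 12, 16):
        print(f"{gb} GB per phone -> {rack_capacity_tb(4500, gb):.0f} TB per rack")
```

Under these assumptions the comparison corresponds to tens of terabytes per rack; the exact value depends entirely on the per-phone capacity chosen.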

The Role of LPDDR Memory in Next-Generation AI Systems

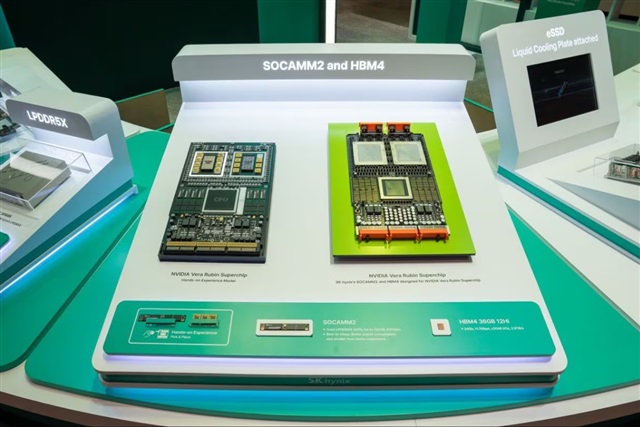

LPDDR memory is traditionally associated with mobile devices due to its energy efficiency and compact form factor. Its integration into Nvidia's AI racks suggests an evolution in hardware architectures, where power optimization and space efficiency are becoming increasingly relevant. While high-end GPUs typically rely on HBM (High Bandwidth Memory) for their extremely high bandwidth, LPDDR can play a crucial role as auxiliary system memory or in specific configurations where density and efficiency are prioritized.

For LLM workloads, memory capacity is a fundamental constraint. Ever-larger models need gigabytes, if not terabytes, of memory to load their weights and to manage extended context windows. Higher memory density per rack makes it possible to host larger models or more model instances, which improves overall throughput and can reduce latency for inference applications. This matters most in scenarios where data and model parallelism are essential for scaling AI operations.
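A rough back-of-the-envelope estimate shows why capacity becomes the limit. The sketch below adds the memory for model weights to the memory for the key-value (KV) cache that grows with context length. The model shape used in the example (70 billion parameters, 80 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration, not a figure from the article, and the estimate ignores activations and runtime overhead.

```python
def weights_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Memory for the model weights, in decimal gigabytes."""
    return params_billion * 1e9 * bytes_per_param / 1e9


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                context_tokens: int, batch: int = 1,
                bytes_per_value: float = 2.0) -> float:
    """Memory for the KV cache, in decimal gigabytes.

    Each layer stores one key and one value vector per token and KV head,
    hence the leading factor of 2.
    """
    values = 2 * layers * kv_heads * head_dim * context_tokens * batch
    return values * bytes_per_value / 1e9


if __name__ == "__main__":
    # Hypothetical model shape, for illustration only.
    w = weights_gb(params_billion=70)
    kv = kv_cache_gb(layers=80, kv_heads=8, head_dim=128,
                     context_tokens=32_768, batch=1)
    print(f"weights: {w:.1f} GB, KV cache: {kv:.1f} GB, total: {w + kv:.1f} GB")
```

Because the KV cache scales linearly with both context length and batch size, serving many long-context requests at once can require more memory than the weights themselves.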

Implications for On-Premise Deployments and TCO

For organizations evaluating on-premise deployments of AI infrastructures, memory choice has a direct impact on the Total Cost of Ownership (TCO). Solutions like LPDDR, which offer high density and energy efficiency, can help reduce operational costs related to power consumption and cooling. In a data center context, where every watt and every unit of space counts, memory optimization translates into a smaller physical footprint and greater environmental and economic sustainability.
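The energy part of TCO can be approximated with simple arithmetic: IT power, multiplied by hours of operation, by the facility's Power Usage Effectiveness (PUE, which accounts for cooling and other overhead), and by the electricity price. The sketch below is a minimal illustration; every number in the example (rack power, PUE, price, and the assumed power saving) is a placeholder assumption, not data from the article.

```python
HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years


def annual_energy_cost(it_power_kw: float, pue: float,
                       price_per_kwh: float) -> float:
    """Yearly electricity cost for a constant IT load.

    PUE scales the IT load up to total facility power (cooling included).
    """
    return it_power_kw * pue * HOURS_PER_YEAR * price_per_kwh


if __name__ == "__main__":
    # Placeholder values: a 100 kW rack versus one drawing 5 kW less
    # thanks to lower-power memory, at PUE 1.3 and 0.10 per kWh.
    baseline = annual_energy_cost(100.0, pue=1.3, price_per_kwh=0.10)
    efficient = annual_energy_cost(95.0, pue=1.3, price_per_kwh=0.10)
    print(f"baseline: {baseline:,.0f}  efficient: {efficient:,.0f}  "
          f"saving: {baseline - efficient:,.0f} per rack per year")
```

The saving per rack scales with the number of racks and with PUE, which is why small per-component power reductions matter at data center scale.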

Concentrating more memory capacity in a single rack also helps with scalability and infrastructure management. Companies can meet their performance requirements with fewer physical units, which simplifies deployment, maintenance, and expansion. For those evaluating self-hosted or air-gapped deployments, where data sovereignty and hardware control are priorities, efficiency and density become decisive factors in technology selection. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these complex trade-offs.

Future Prospects and the AI Memory Race

The partnership between Nvidia and Nanya reflects a broader trend in the AI industry: the continuous search for innovative memory solutions to support the evolution of models and applications. As LLMs grow rapidly in parameter count and context requirements, memory is no longer just a passive component but a strategic element that defines the limits of computing capability. The ability to provide high-density, low-power memory will be a critical factor for AI hardware providers.

This move by Nvidia underscores how fundamental the supply chain and technological collaborations are to addressing enterprise-scale AI challenges. The balance between performance, efficiency, and cost will continue to drive innovation in silicon and system architecture, with the goal of making AI increasingly accessible and powerful for a wide range of industrial sectors.
