Nvidia's Increasing Reliance on Asian Supply Chains

Nvidia, a dominant player in the artificial intelligence landscape, faces growing exposure to Asian supply chains for essential components in its production. According to recent analyses, 90% of the company's production costs are now tied to these supply chains, up from 65% previously. The shift reflects a growing concentration of production and assembly operations in a few geographic regions, with significant implications for cost stability and product availability.
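To make the difference between 65% and 90% exposure concrete, the short sketch below estimates how a cost shock on the exposed share would pass through to total production cost. It is an illustration only: the 10% shock is a hypothetical figure, the function name `cost_pass_through` is invented for this example, and the model assumes the shock hits the exposed share uniformly while the rest of the cost base is unaffected.

```python
def cost_pass_through(exposed_share: float, shock: float) -> float:
    """Fractional rise in total cost when only the exposed share is hit by a shock.

    exposed_share: fraction of production cost tied to the affected supply chains
    shock: fractional cost increase on that exposed share (hypothetical input)
    """
    return exposed_share * shock


# Compare the earlier and current exposure levels cited in the article
# under the same hypothetical 10% shock.
for share in (0.65, 0.90):
    rise = cost_pass_through(share, shock=0.10)
    print(f"exposure {share:.0%}: total cost rises {rise:.1%}")
```

Under these assumptions, the same shock has a larger effect on total cost at the higher exposure level, which is why the concentration itself matters for cost stability.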

This dependency is not merely a financial matter; it also touches on strategic concerns for the global technology industry. Geopolitical volatility, logistical disruptions, and economic fluctuations in these regions can directly affect Nvidia's ability to meet demand, with effects that cascade through the entire AI ecosystem. For companies planning Large Language Model (LLM) deployments or other AI solutions, understanding these factors is fundamental to evaluating the Total Cost of Ownership (TCO) and the resilience of their infrastructure.

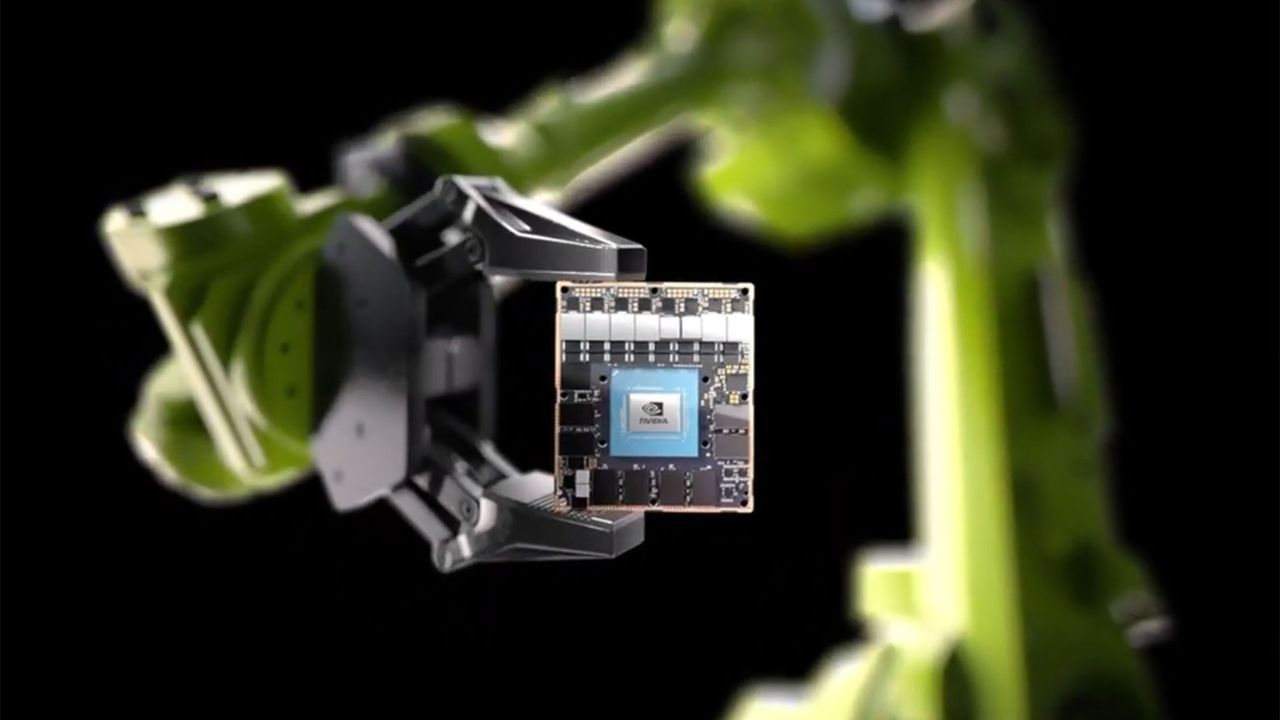

The Impact of Physical AI and the Jetson Platform

The context of physical AI, which includes robotics applications, autonomous vehicles, and smart IoT devices, is set to further intensify this exposure. Physical AI requires specialized hardware for inference directly in the field, often in edge environments. Products like Nvidia Jetson, a high-performance computing platform for embedded and edge AI, are at the heart of this transformation. These compact and powerful systems enable on-site data processing, reducing latency and ensuring greater data sovereignty, crucial aspects for sectors such as manufacturing, logistics, and healthcare.

The growing demand for edge AI solutions, driven by the need to process real-time data and operate in disconnected or air-gapped environments, makes the availability of platforms like Jetson even more critical. Supply chain disruptions can delay innovation and the adoption of these technologies, with direct repercussions on enterprise digitalization strategies. A company's ability to develop and deploy physical AI solutions depends closely on its ability to procure the necessary hardware components.

Considerations for On-Premise and Edge Deployments

For CTOs, DevOps leads, and infrastructure architects evaluating on-premise or edge deployments, reliance on a vendor's supply chain, such as Nvidia's, becomes a key factor in planning. Supply chain resilience directly affects the ability to scale operations, cost predictability, and risk management. High exposure can translate into greater price volatility for hardware, longer lead times, and potential component shortages, all of which can compromise the long-term TCO of a self-hosted AI infrastructure.
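As a rough illustration of how price volatility and longer lead times can feed into TCO, the sketch below compares a baseline plan with a stressed scenario. It is a minimal model under stated assumptions, not an established TCO methodology: the `HardwarePlan` class, the `tco` function, and every number in it are hypothetical placeholders, and delay is modeled simply as a fixed monthly cost for each month the hardware arrives late.

```python
from dataclasses import dataclass


@dataclass
class HardwarePlan:
    """Hypothetical deployment plan; all fields are illustrative inputs."""
    unit_price: float   # quoted price per hardware unit
    units: int          # number of units to procure
    annual_opex: float  # yearly running cost (power, cooling, support)
    years: int          # planning horizon in years


def tco(plan: HardwarePlan,
        price_change: float = 0.0,
        delay_months: float = 0.0,
        monthly_delay_cost: float = 0.0) -> float:
    """Total cost over the horizon: hardware purchase + running cost + delay cost.

    price_change: fractional change in hardware price versus the quote
    delay_months: extra lead time beyond the planned delivery date
    monthly_delay_cost: assumed business cost per month of delay
    """
    capex = plan.unit_price * plan.units * (1 + price_change)
    opex = plan.annual_opex * plan.years
    delay = delay_months * monthly_delay_cost
    return capex + opex + delay


# Placeholder figures, chosen only to show the mechanics.
plan = HardwarePlan(unit_price=2000.0, units=50, annual_opex=30000.0, years=3)

baseline = tco(plan)
stressed = tco(plan, price_change=0.20, delay_months=4, monthly_delay_cost=5000.0)

print(f"baseline TCO: {baseline:,.0f}")
print(f"stressed TCO: {stressed:,.0f}")
print(f"increase:     {stressed / baseline - 1:.1%}")
```

Running a few such scenarios against real quotes and internal cost estimates gives a range for the TCO rather than a single figure, which is usually more useful when hardware supply is uncertain.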

The choice of on-premise or hybrid architectures is often motivated by the pursuit of greater control, security, and data sovereignty. However, these benefits must be balanced against the risks associated with hardware procurement. Evaluating alternatives, diversifying suppliers, and designing systems with greater hardware flexibility become essential strategies. AI-RADAR offers analytical frameworks on /llm-onpremise to assess these trade-offs, helping companies make informed decisions about LLM and AI deployments in on-premise contexts, considering not only technical specifications but also market and supply constraints.

Future Outlook and Strategic Resilience

Nvidia's increased exposure to Asian supply chains underscores a broader trend in the technology sector, where the globalization of production clashes with the growing need for resilience and security. For companies investing in AI infrastructures, it is imperative to adopt a strategic vision that goes beyond mere technical specifications, including an in-depth analysis of supply chain risks. This approach allows for mitigating potential disruptions and ensuring the operational continuity of critical AI workloads.

The ability to anticipate and manage these risks will be a key differentiator for enterprises aiming to build and maintain a competitive advantage in the AI era. Planning for on-premise or edge deployments must therefore consider not only immediate performance and costs but also the robustness of the entire value chain, from chip production to the final system deployment. Supply chain resilience thus becomes a fundamental pillar for the long-term sustainability and effectiveness of AI strategies.
