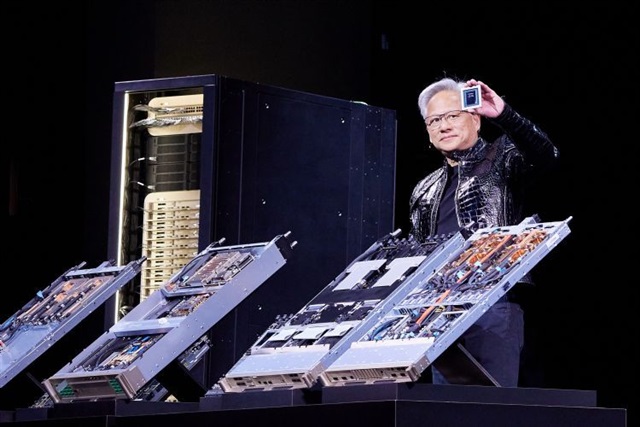

Nvidia Vera Rubin: Roadmap Clears for 2026

Nvidia, a dominant player in AI hardware, has reportedly resolved the issues affecting its upcoming platform, codenamed Vera Rubin. According to DIGITIMES, the supply chain is now targeting a ramp-up in deliveries during the third quarter of 2026. The news matters most to organizations that rely on Nvidia hardware for their AI workloads, because it clarifies when next-generation GPUs are likely to become available.

Release timing and production capacity for new GPUs are critical to strategic planning, especially for organizations evaluating on-premise deployments of Large Language Models (LLMs) and other AI applications. The availability of advanced silicon directly determines whether they can run high-performance AI workloads with good energy efficiency while keeping granular control over their data.

The Technological and Market Context

Vera Rubin is expected to succeed the Hopper (H100) and Blackwell (B100/B200) architectures as Nvidia's next generational leap in GPUs. Each new generation brings significant gains in compute, VRAM capacity, and throughput, all of which are fundamental to training and serving increasingly complex LLMs. These gains make it possible to handle models with billions of parameters and extended context windows while reducing latency and increasing the number of tokens processed per second.

For self-hosted infrastructure, adopting the latest hardware generation can lower TCO over the long run, thanks to better energy efficiency and higher compute density per rack unit. The reported resolution of the issues and the planned production ramp suggest that Nvidia is on track with its roadmap, a positive signal for the market that lets companies align their AI infrastructure investment plans accordingly.

Implications for On-Premise Deployments and Data Sovereignty

The availability of new GPU generations like Vera Rubin is a decisive factor for organizations that prioritize on-premise deployments. Running cutting-edge hardware in-house gives them complete control over data and models, which is crucial for data sovereignty, regulatory compliance (such as GDPR), and security in air-gapped environments. It also lets companies avoid dependence on cloud providers, whose services can bring variable operating costs and constraints on where data is stored.

For those evaluating on-premise deployments, a predictable hardware roadmap allows more accurate planning of capital (CapEx) and operating (OpEx) expenditure. The choice between cloud and self-hosted solutions often comes down to a detailed TCO analysis that covers not only the initial hardware cost but also energy, cooling, maintenance, and specialized staff. The better efficiency of new GPUs can tip the balance toward on-premise solutions for intensive, long-term workloads. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs and support informed decisions.
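The cost components listed above can be combined into a simple breakeven comparison. The sketch below is a minimal, hypothetical model: the `OnPremCosts` fields, the use of PUE to fold cooling overhead into the energy bill, and the flat hourly cloud rate are all assumptions for illustration, and the sample numbers are placeholders, not real Vera Rubin prices or power figures.

```python
from dataclasses import dataclass
from typing import Optional

HOURS_PER_YEAR = 24 * 365


@dataclass
class OnPremCosts:
    """Hypothetical inputs for a self-hosted GPU cluster; every figure is a placeholder."""
    hardware_capex: float        # upfront purchase price of servers and GPUs
    it_power_kw: float           # average IT power draw, in kilowatts
    pue: float                   # power usage effectiveness (adds cooling/facility overhead)
    energy_price_per_kwh: float  # electricity tariff
    maintenance_per_year: float  # support contracts, spares
    staff_per_year: float        # share of specialized personnel cost


def on_prem_tco(c: OnPremCosts, years: int) -> float:
    """CapEx plus cumulative OpEx (energy incl. cooling overhead, maintenance, staff)."""
    energy_per_year = c.it_power_kw * c.pue * HOURS_PER_YEAR * c.energy_price_per_kwh
    opex_per_year = energy_per_year + c.maintenance_per_year + c.staff_per_year
    return c.hardware_capex + opex_per_year * years


def cloud_cost(hourly_rate: float, utilization: float, years: int) -> float:
    """Pay-as-you-go cost: only the hours actually used are billed."""
    return hourly_rate * HOURS_PER_YEAR * utilization * years


def breakeven_year(c: OnPremCosts, hourly_rate: float, utilization: float,
                   max_years: int = 10) -> Optional[int]:
    """First whole year in which cumulative on-prem TCO is <= cumulative cloud cost."""
    for year in range(1, max_years + 1):
        if on_prem_tco(c, year) <= cloud_cost(hourly_rate, utilization, year):
            return year
    return None  # on-prem never breaks even within the horizon


if __name__ == "__main__":
    # Illustrative numbers only; not real Vera Rubin pricing or power figures.
    cluster = OnPremCosts(hardware_capex=300_000, it_power_kw=10, pue=1.4,
                          energy_price_per_kwh=0.20, maintenance_per_year=20_000,
                          staff_per_year=30_000)
    for util in (0.8, 0.2):
        print(util, breakeven_year(cluster, hourly_rate=40, utilization=util))
```

With these placeholder inputs, a heavily used cluster (80% utilization) breaks even in year 2, while at 20% utilization the cloud stays cheaper for the whole horizon, which mirrors the point that on-premise pays off mainly for intensive, long-term workloads.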

Future Prospects and Strategic Planning

The reported resolution of the issues and the projected production ramp for Vera Rubin in the third quarter of 2026 give companies a clearer timeline for upgrading their AI infrastructure. CTOs, DevOps leads, and infrastructure architects can start evaluating how to integrate the new platform into their existing pipelines, considering data center upgrades, power and cooling capacity, and compatibility with current software frameworks.
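Checking power and cooling capacity often starts with simple rack-level arithmetic. The sketch below is a hypothetical planning helper (`rack_plan` and its parameters are illustrative names, not part of any tool): it reserves a fraction of the rack's power budget as headroom, counts how many servers fit, and converts the resulting IT load into heat to remove, using the standard conversion of roughly 3,412 BTU/h per kW and assuming nearly all drawn power ends up as heat. The rack and server wattages are placeholders, not published Vera Rubin specifications.

```python
KW_TO_BTU_PER_HOUR = 3412.14  # 1 kW of electrical load dissipates ~3412 BTU/h of heat


def rack_plan(rack_power_kw: float, server_power_kw: float, headroom: float = 0.2):
    """Return (servers that fit, IT load in kW, heat to remove in BTU/h).

    headroom reserves a fraction of the rack's power budget for peaks;
    all inputs are hypothetical planning figures.
    """
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_kw = rack_power_kw * (1 - headroom)
    servers = int(usable_kw // server_power_kw)   # whole servers only
    it_load_kw = servers * server_power_kw
    heat_btu_per_hour = it_load_kw * KW_TO_BTU_PER_HOUR
    return servers, it_load_kw, heat_btu_per_hour


if __name__ == "__main__":
    # Hypothetical: a 40 kW rack and 14 kW GPU servers (not published Vera Rubin specs).
    print(rack_plan(rack_power_kw=40, server_power_kw=14))
```

If a new GPU generation raises per-server power, the same rack budget holds fewer servers, which is why power and cooling upgrades belong in the planning alongside the hardware purchase itself.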

Closely monitoring Nvidia's roadmap and supply chain dynamics helps companies anticipate both opportunities and constraints. Powerful, reliable hardware is the foundation for AI innovation, and deployment decisions should support long-term business objectives, from research and development to large-scale production. Clarity on the Vera Rubin timeline is an important step in this direction and provides a reference point for future AI investment strategies.
