Onsemi and the AI Data Center Push

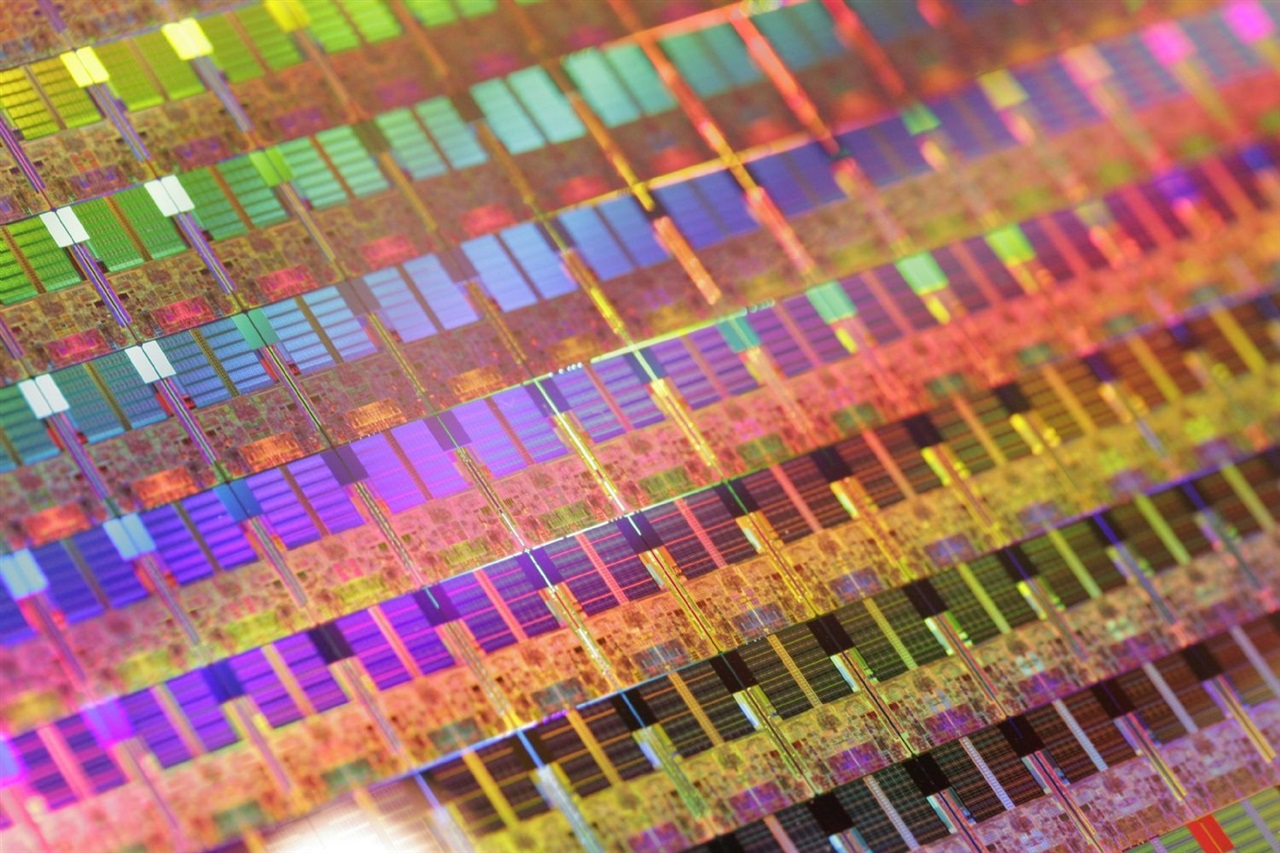

Onsemi, a prominent player in the semiconductor industry, has outlined a clear strategy for future growth built on two pillars: the artificial intelligence (AI) data center segment and Treo. The company expects these two areas to be the primary engines of revenue recovery and improved operating margins. This vision comes as demand grows for specialized infrastructure capable of supporting the intensive workloads of large language models (LLMs) and other advanced AI applications.

The focus on AI data centers reflects Onsemi's reading of how the market is evolving. The computational demands of AI workloads, particularly LLMs, are reshaping data center architectures, pushing toward designs that deliver high compute density, energy efficiency, and the ability to handle enormous volumes of data.

The Technical Context of AI Data Centers

Modern AI data centers are not simply incremental upgrades of traditional facilities; they represent a distinct design paradigm built around hardware acceleration. Demand for high-performance GPUs with large VRAM capacity and high throughput has become a critical factor. Alongside the accelerators themselves, power supply systems and thermal management solutions, areas where Onsemi has a strong presence, are essential to keeping these facilities running reliably and efficiently.

For companies developing and deploying LLMs, the choice of infrastructure is crucial. They weigh the flexibility and scalability of the cloud against the control, data sovereignty, and potentially lower long-term total cost of ownership (TCO) of an on-premise deployment. A data center's ability to sustain intensive workloads, with specific latency and parallelism requirements, directly affects the performance of AI models.

Implications for On-Premise Deployment

Onsemi's AI data center strategy has direct implications for organizations that are considering, or already running, a self-hosted approach to their AI workloads. Robust, well-optimized hardware components are fundamental to building efficient local stacks. For teams evaluating on-premise deployment, the choice of silicon suppliers and power modules can significantly affect overall TCO, energy efficiency, and the ability to maintain air-gapped environments for compliance and security.
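One way power modules show up in TCO is through conversion losses: every point of efficiency lost between the grid and the accelerators is paid for continuously, and then multiplied by facility overhead such as cooling. The sketch below is a minimal illustration under simplifying assumptions, not a vendor model. It assumes constant load around the clock, treats the whole power chain as a single efficiency figure, and models facility overhead with a power usage effectiveness (PUE) multiplier. The function name `annual_energy_cost` and every number in the usage example (100 kW load, 90% vs 94% efficiency, PUE 1.3, 0.15 per kWh) are hypothetical.

```python
"""Hypothetical sketch: how power-conversion efficiency feeds into yearly energy cost.

All inputs are illustrative assumptions, not Onsemi or vendor figures.
"""

HOURS_PER_YEAR = 24 * 365  # 8760 h, assuming continuous operation


def annual_energy_cost(it_load_kw: float, conversion_efficiency: float,
                       pue: float, price_per_kwh: float) -> float:
    """Return the yearly electricity cost of a cluster under a simple model.

    it_load_kw: power consumed by the chips/accelerators themselves (kW)
    conversion_efficiency: end-to-end efficiency of the power chain, in (0, 1]
    pue: facility power usage effectiveness (total power / IT power), >= 1.0
    price_per_kwh: electricity price in arbitrary currency units per kWh
    """
    if not 0 < conversion_efficiency <= 1:
        raise ValueError("conversion_efficiency must be in (0, 1]")
    if pue < 1:
        raise ValueError("pue must be >= 1")
    # Power drawn at the IT equipment input to deliver it_load_kw to the chips
    it_input_kw = it_load_kw / conversion_efficiency
    # Cooling and other facility overhead, modeled as a PUE multiplier
    facility_kw = it_input_kw * pue
    return facility_kw * HOURS_PER_YEAR * price_per_kwh


if __name__ == "__main__":
    # Same hypothetical cluster, two hypothetical power-chain efficiencies
    baseline = annual_energy_cost(100.0, 0.90, 1.3, 0.15)
    improved = annual_energy_cost(100.0, 0.94, 1.3, 0.15)
    print(f"baseline: {baseline:,.0f}  improved: {improved:,.0f}")
    print(f"yearly savings: {baseline - improved:,.0f}")
```

With these made-up inputs, moving from 90% to 94% efficiency cuts the yearly bill from about 189,800 to about 181,723 currency units. The exact figure matters less than the structure: the efficiency gain is amplified by PUE and by running hours, which is why component choices compound over the life of an on-premise deployment.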

Data sovereignty and stringent regulations such as the GDPR lead many companies to prefer on-premise solutions, where control over infrastructure and data is greatest. In this scenario, the reliability and performance of components from suppliers like Onsemi become a key factor in the success of complex AI projects, which need not only computing power but also advanced power delivery and thermal management.

Future Prospects and Treo's Role

The identification of Treo as an additional growth driver points to a broader strategic expansion. Treo is the name Onsemi uses for its analog and mixed-signal platform. The company has not detailed how it expects Treo to contribute to this growth, but it plausibly complements the AI data center offering by supplying enabling technologies to emerging segments.

The artificial intelligence market continues to evolve rapidly, with a constant demand for innovation at the hardware and infrastructure levels. The ability of companies like Onsemi to anticipate these needs and provide critical components will be decisive in supporting the next generation of AI applications, both in cloud environments and, increasingly, in on-premise and hybrid configurations, where control and efficiency are paramount.
