The Expansion of the Pelican Constellation

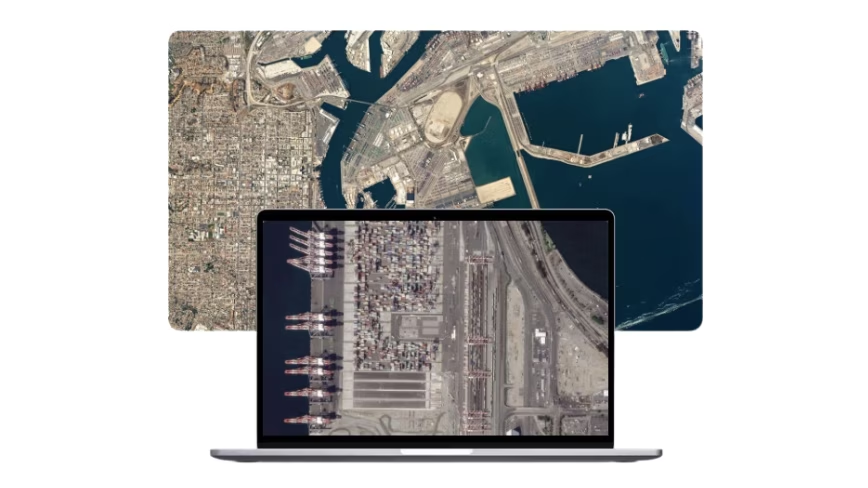

On May 3, a SpaceX Falcon 9 rocket lifted off from Vandenberg Space Force Base, carrying a payload of 45 satellites into orbit. Among these were three new Planet Labs spacecraft, named Pelicans 7, 8, and 9, marking a further expansion of the company's next-generation constellation. With this launch, the Pelican fleet now totals nine satellites dedicated to high-resolution Earth observation.

This expansion strengthens Planet Labs' ability to offer a service that goes beyond providing static satellite images. The company focuses on selling a subscription for monitoring changes across the entire planet in near real time, a proposition that changes how geospatial analysis and the detection of global-scale events are approached.

The Challenges of Large-Scale Geospatial Data Processing

The ambition to monitor the planet in near real time produces a continuous, very large stream of data. Managing, storing, and analyzing petabytes of high-resolution satellite imagery is a significant infrastructure challenge for any organization. It demands robust data pipelines and substantial compute capacity to extract useful information, such as detecting environmental changes, identifying urban patterns, or monitoring critical infrastructure.

The application of artificial intelligence models, including Large Language Models (LLMs) and computer vision models, is crucial for transforming this raw data into actionable insights. However, running inference on such data volumes, often with low-latency requirements, places stringent demands on the underlying hardware, from GPU VRAM to network and storage throughput.
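To make the hardware demands concrete, the following is a minimal back-of-the-envelope sketch in Python. It is not drawn from Planet Labs' figures: the function names and every input value (daily volume, processing window, per-GPU tile rate) are hypothetical placeholders that a reader would replace with their own measurements.

```python
import math


def required_throughput_gbps(daily_volume_tb: float, window_hours: float) -> float:
    """Sustained throughput (gigabits per second) needed to move
    `daily_volume_tb` decimal terabytes within `window_hours` hours."""
    if daily_volume_tb < 0 or window_hours <= 0:
        raise ValueError("volume must be >= 0 and window must be > 0")
    total_bits = daily_volume_tb * 1e12 * 8   # terabytes -> bits
    seconds = window_hours * 3600
    return total_bits / seconds / 1e9         # bits/s -> gigabits/s


def gpus_needed(tiles_per_day: int, tiles_per_sec_per_gpu: float,
                window_hours: float) -> int:
    """Minimum number of GPUs needed to run inference on `tiles_per_day`
    image tiles within `window_hours`, assuming perfect scaling
    (an optimistic simplification)."""
    if tiles_per_sec_per_gpu <= 0 or window_hours <= 0:
        raise ValueError("rates and window must be > 0")
    per_gpu_capacity = tiles_per_sec_per_gpu * window_hours * 3600
    return math.ceil(tiles_per_day / per_gpu_capacity)


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to illustrate the arithmetic.
    print(f"{required_throughput_gbps(45.0, 24.0):.2f} Gbit/s sustained")
    print(f"{gpus_needed(1_000_000, 50.0, 1.0)} GPUs")
```

Shortening the processing window raises both numbers in proportion, which is why low-latency requirements weigh so heavily on network, storage, and GPU sizing.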

Implications for Infrastructure Deployment and Data Sovereignty

For businesses and government agencies relying on this data for strategic decisions, the choice of infrastructure deployment becomes critical. Processing sensitive or proprietary data, such as that derived from Earth observation, often requires strict control over the computational environment. In this context, self-hosted or on-premise solutions, including bare metal or air-gapped deployments, offer advantages in terms of data sovereignty, regulatory compliance, and security.

While the cloud offers scalability and flexibility, long-term Total Cost of Ownership (TCO) and concerns regarding data residency can push towards hybrid or entirely local alternatives. Evaluating these trade-offs is complex and must consider not only direct costs but also latency requirements, the need to customize hardware for specific workloads, and the ability to maintain complete control over the data processing chain.
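The direct-cost part of this trade-off can be sketched as a simple break-even calculation. The example below is a simplified illustration, not a complete TCO model: it assumes flat monthly costs, ignores latency, staffing, hardware refresh, and data egress, and uses hypothetical function names and cost figures.

```python
from typing import Optional


def break_even_month(cloud_monthly: int, onprem_upfront: int,
                     onprem_monthly: int,
                     horizon_months: int = 120) -> Optional[int]:
    """Return the first month at which cumulative on-premise cost is no
    higher than cumulative cloud cost, or None if that never happens
    within `horizon_months`.

    All costs are in the same currency unit; monthly costs are assumed
    constant over the whole horizon (a deliberate simplification).
    """
    for month in range(1, horizon_months + 1):
        cloud_total = cloud_monthly * month
        onprem_total = onprem_upfront + onprem_monthly * month
        if onprem_total <= cloud_total:
            return month
    return None


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    month = break_even_month(cloud_monthly=10_000,
                             onprem_upfront=120_000,
                             onprem_monthly=5_000)
    print(f"Break-even after {month} months")
```

If the on-premise running cost is not lower than the cloud cost, the function returns `None`, reflecting the case where the upfront investment is never recovered on direct costs alone and the decision rests on the non-financial factors instead.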

Future Prospects and Strategic Decisions

The evolution of Earth observation services, such as those offered by Planet Labs, underscores a growing trend towards predictive analysis and proactive monitoring based on near real-time data. This scenario compels technical decision-makers, such as CTOs and infrastructure architects, to reconsider their deployment strategies for AI/LLM workloads.

The ability to effectively manage and analyze these massive data streams, while maintaining security and compliance, will be a distinguishing factor. The choice between cloud, on-premise, or hybrid infrastructure is never trivial and requires a thorough analysis of specific business constraints, performance needs, and data control objectives. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs, supporting organizations in defining the most suitable strategy.
