ServiceNow and the AI Wave in Enterprise Software

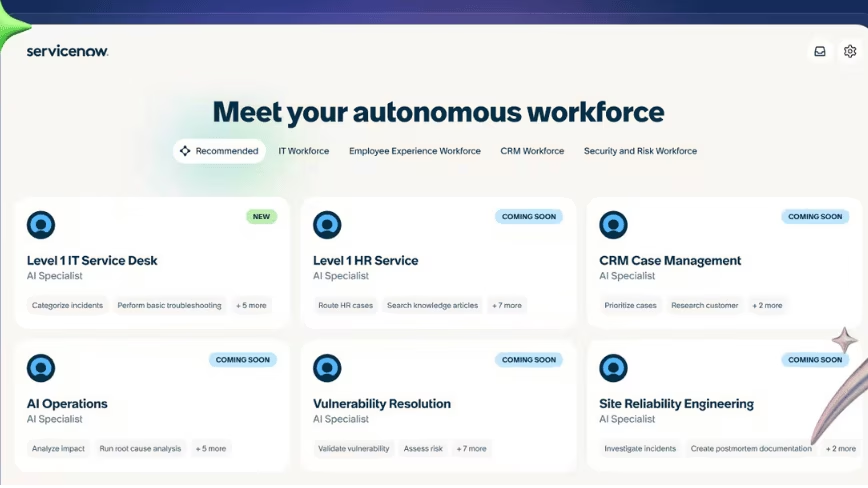

In 2026, ServiceNow is one of the most closely watched companies in enterprise software, serving as a litmus test for whether large incumbents can ride the artificial intelligence wave rather than be displaced by it. The company recently gave investors a clear view of its ambitions, outlining a strategy that places AI at the core of its growth.

Financial projections released by ServiceNow set a target of $30 billion in subscription revenue by 2030. One figure in the forecast stands out: one-third of Annual Contract Value (ACV) is expected to come directly from AI-powered solutions. The figure, reported by Bloomberg and attributed to Chief Financial Officer Gina Mastantuono, underscores the strategic importance of AI for the company's future.

AI Integration and Infrastructure Challenges

The deep integration of AI, particularly Large Language Models (LLMs), into enterprise software is a complex transformation. Companies like ServiceNow must not only develop innovative functionality but also ensure that the underlying infrastructure can support intensive computational workloads. That means managing large volumes of data, running inference on complex models, and scaling capacity as demand grows.

For organizations adopting these AI-powered solutions, deployment decisions become crucial. The choice among cloud, self-hosted on-premise, and hybrid configurations is not trivial and depends on factors such as Total Cost of Ownership (TCO), data sovereignty requirements, and compliance policies. Efficient model execution, measured in throughput and latency, is fundamental to a smooth and responsive user experience, especially in critical enterprise contexts.
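To make the throughput and latency terms concrete, here is a minimal sketch of how a team might summarize a batch of inference requests. It is not tied to any ServiceNow product or specific serving stack; the function names, the nearest-rank percentile method, and all sample measurements are illustrative assumptions.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(latencies_s, tokens_out, wall_clock_s):
    """Summarize a load test.

    latencies_s  -- per-request end-to-end latency in seconds
    tokens_out   -- tokens generated per request
    wall_clock_s -- total duration of the test window in seconds
    """
    return {
        "p50_latency_s": percentile(latencies_s, 50),
        "p95_latency_s": percentile(latencies_s, 95),
        "throughput_tokens_per_s": sum(tokens_out) / wall_clock_s,
        "requests_per_s": len(latencies_s) / wall_clock_s,
    }


# Hypothetical measurements, for illustration only.
latencies = [0.8, 1.1, 0.9, 2.4, 1.0]
tokens = [120, 200, 150, 400, 130]
report = summarize(latencies, tokens, wall_clock_s=4.0)
print(report)
```

Tail latency (p95) is reported next to the median because a single slow request, like the 2.4-second one in this made-up sample, can dominate the perceived responsiveness of an interactive enterprise workflow even when the median looks healthy.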

Data Sovereignty and TCO: The Deployment Dilemma

The adoption of AI solutions in the enterprise sector raises significant questions about data sovereignty and security. Many companies, particularly in regulated industries, require sensitive data to remain within a specific jurisdiction or on fully controlled infrastructure, often in air-gapped environments. This drives interest in on-premise or hybrid deployment options, where control over hardware and data is maximized.

However, deploying LLMs and other AI capabilities on-premise involves significant initial investments in specialized hardware, such as high-performance GPUs with adequate VRAM, and technical expertise for infrastructure management. TCO analysis therefore becomes essential to compare operational and capital costs across different strategies. For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess these trade-offs, considering aspects such as energy efficiency, maintenance, and technological obsolescence.
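The capital-versus-operational comparison described above can be sketched as a simple cumulative cost model. This is a rough illustration under stated assumptions, not AI-RADAR's framework or any vendor's pricing: the class names, cost categories, hardware refresh cycle, and every dollar figure below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OnPremPlan:
    hardware_capex: float        # upfront GPU servers, networking, storage
    annual_power_cooling: float  # energy costs
    annual_maintenance: float    # support contracts, spare parts
    annual_staff: float          # infrastructure expertise
    refresh_years: int           # hardware replaced after this many years

    def tco(self, years: int) -> float:
        # Ceiling division: number of hardware purchases within the horizon,
        # which models technological obsolescence.
        purchases = -(-years // self.refresh_years)
        opex = (self.annual_power_cooling
                + self.annual_maintenance
                + self.annual_staff) * years
        return self.hardware_capex * purchases + opex


@dataclass
class CloudPlan:
    hourly_gpu_rate: float
    gpus: int
    hours_per_year: float
    annual_egress_and_storage: float

    def tco(self, years: int) -> float:
        compute = self.hourly_gpu_rate * self.gpus * self.hours_per_year
        return (compute + self.annual_egress_and_storage) * years


def break_even_year(on_prem, cloud, max_years=10):
    """First year in which cumulative on-prem cost is at or below cloud cost."""
    for year in range(1, max_years + 1):
        if on_prem.tco(year) <= cloud.tco(year):
            return year
    return None


# Hypothetical figures, for illustration only.
on_prem = OnPremPlan(400_000, 30_000, 20_000, 100_000, refresh_years=4)
cloud = CloudPlan(hourly_gpu_rate=4.0, gpus=8, hours_per_year=8760,
                  annual_egress_and_storage=20_000)

for year in range(1, 6):
    print(year, on_prem.tco(year), cloud.tco(year))
print("break-even year:", break_even_year(on_prem, cloud))
```

With these made-up inputs, on-premise becomes cheaper in year three but falls behind again in year five, when the assumed four-year refresh cycle triggers a second hardware purchase. That sensitivity to the refresh assumption is why obsolescence belongs in the analysis alongside energy and maintenance.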

Future Prospects and the Strategic Role of AI

ServiceNow's vision reflects a broader trend in the technology sector: AI is no longer an add-on feature but a fundamental growth engine and a competitive differentiator. Companies that successfully integrate AI into their products and services, while managing infrastructure complexity and compliance requirements, are the ones best positioned to thrive.

The success of these strategies will depend not only on the ability to innovate at the software level but also on the robustness and flexibility of deployment architectures. Offering solutions that respect data sovereignty and optimize TCO will be critical to winning and retaining the trust of enterprise customers. ServiceNow's trajectory, with its ambitious projections, will be a key indicator for the entire industry of how AI is reshaping enterprise software.
