The New ZAM Memory: A Low-Power Alternative for AI

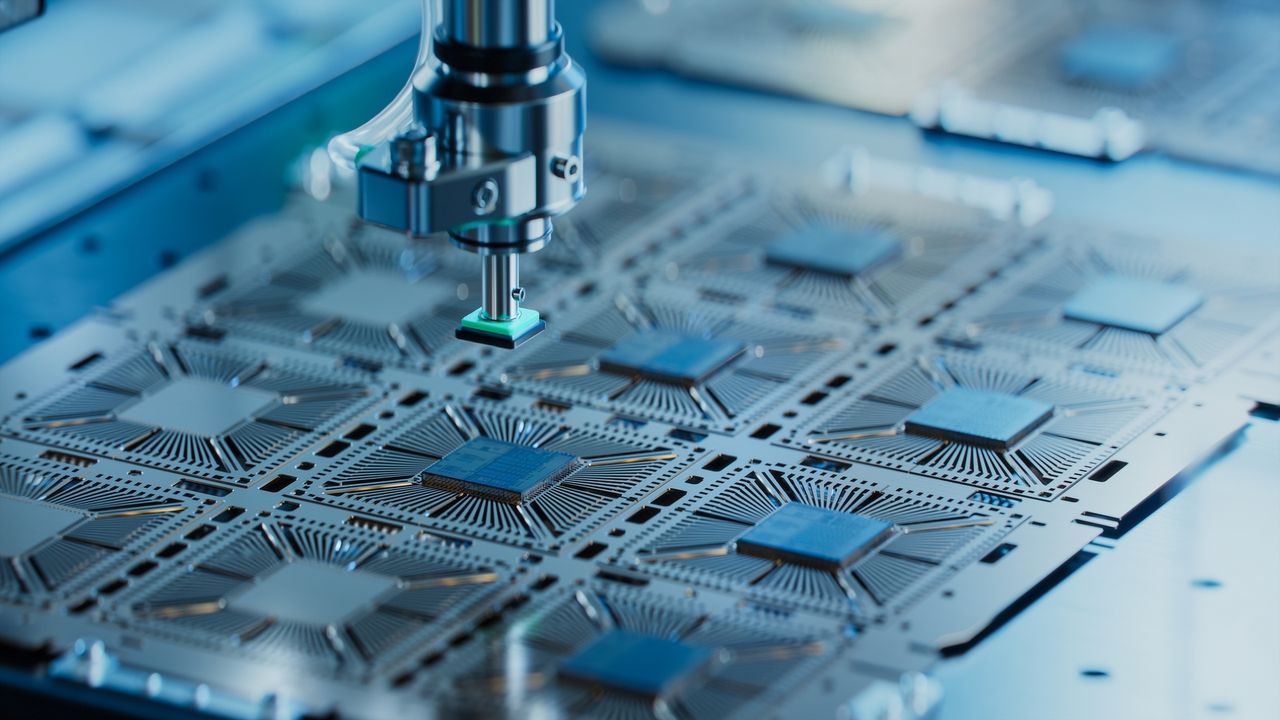

A SoftBank subsidiary, in collaboration with Intel, is developing a new memory technology called ZAM. The project aims to bring to market an innovative solution designed specifically for the growing demands of artificial intelligence workloads. The initiative underscores the two companies' joint commitment to tackling the current and future challenges posed by developing and deploying increasingly complex AI models.

The primary goal of ZAM is to offer a lower-power alternative to today's HBM (High Bandwidth Memory). HBM has become the de facto standard for GPUs dedicated to AI acceleration because its high bandwidth keeps the compute cores rapidly supplied with the data needed to train and run inference on Large Language Models (LLMs) and other complex models. However, its power consumption and cost are significant contributors to the overall TCO (total cost of ownership) of AI infrastructure.

Technical Details and Implications for AI Infrastructure

The search for more efficient memory is crucial to the evolution of AI. AI workloads, particularly those involving LLMs with billions of parameters, require massive amounts of VRAM and very high bandwidth to minimize latency and maximize throughput. HBM excels on these metrics, but it accounts for a significant share of an AI server's total power consumption.
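To make the link between memory capacity, bandwidth and throughput more concrete, here is a minimal back-of-the-envelope sketch. It assumes that single-stream LLM decoding is memory-bandwidth bound, i.e. that every weight is read from memory once per generated token, and it ignores the KV cache, activations and batching. The model size, precision and bandwidth values are hypothetical placeholders, not specifications of HBM, ZAM or any specific product.

```python
def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def max_decode_tokens_per_s(num_params: float, bytes_per_param: float,
                            bandwidth_gb_s: float) -> float:
    """Rough upper bound on single-stream decode speed.

    Assumption: generating one token requires streaming all weights from
    memory once, so the token rate is capped by bandwidth / weight size.
    """
    return bandwidth_gb_s / weight_footprint_gb(num_params, bytes_per_param)


# Hypothetical inputs: 70B parameters stored in FP16 (2 bytes each),
# served from memory with 3000 GB/s of aggregate bandwidth.
footprint = weight_footprint_gb(70e9, 2)
tps = max_decode_tokens_per_s(70e9, 2, 3000)

print(f"Weights: {footprint:.0f} GB, decode ceiling: {tps:.1f} tokens/s")
```

Under this simplified model, doubling memory bandwidth roughly doubles the ceiling on tokens per second, which is why both the bandwidth of the memory and the power spent delivering it weigh so heavily on inference deployments.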

The arrival of a memory like ZAM, which promises lower power consumption, could have a notable impact on on-premise deployments. For companies that keep their AI workloads in self-hosted or air-gapped environments for data-sovereignty or compliance reasons, lower power consumption translates directly into lower TCO: lower operating costs (energy and cooling) and, potentially, less demanding infrastructure requirements. This is a key factor for CTOs and system architects assessing the economic and operational feasibility of dedicated AI infrastructure.
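The operating-cost side of this argument can be illustrated with simple arithmetic. The sketch below assumes that facility overhead such as cooling is captured by a single PUE (Power Usage Effectiveness) factor, that the hardware runs continuously, and that electricity is billed at a flat rate. The rack power, PUE, price and the 1 kW saving attributed to lower-power memory are all hypothetical values chosen for illustration, not measured figures for ZAM or HBM.

```python
HOURS_PER_YEAR = 24 * 365  # assumes continuous operation with no downtime


def annual_energy_cost(it_power_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost of an IT load, including facility overhead.

    PUE = total facility power / IT power, so the facility draws
    it_power_kw * pue, which covers cooling and other overhead.
    """
    return it_power_kw * pue * HOURS_PER_YEAR * price_per_kwh


def annual_savings(baseline_kw: float, reduced_kw: float,
                   pue: float, price_per_kwh: float) -> float:
    """Yearly cost difference between a baseline and a lower-power configuration."""
    return (annual_energy_cost(baseline_kw, pue, price_per_kwh)
            - annual_energy_cost(reduced_kw, pue, price_per_kwh))


# Hypothetical inputs: a 10 kW AI rack, PUE of 1.5, 0.20 currency units per kWh,
# and a (purely illustrative) 1 kW reduction from a lower-power memory subsystem.
savings = annual_savings(10.0, 9.0, pue=1.5, price_per_kwh=0.20)

print(f"Estimated yearly energy savings: {savings:.2f} currency units")
```

Because the saving is multiplied by the PUE, every watt removed from the IT load also removes the cooling overhead needed to dissipate it, which is the mechanism behind the "energy and cooling" reduction described above.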

Government Support and Industry Competition

The ZAM project has received significant financial backing in the form of subsidies from the Japanese government. This kind of public investment reflects growing national awareness of the strategic importance of developing proprietary, cutting-edge hardware technologies for artificial intelligence. Government support can accelerate research and development, allowing companies to take on complex technical challenges that would otherwise require prohibitive private investment or longer development times.

Competition in AI memory is intense, with the major players investing heavily in new architectures and manufacturing processes. Alternatives such as ZAM could diversify the landscape, giving decision-makers more options for optimizing their infrastructure around specific cost, power and performance constraints. For those evaluating on-premise deployments, analyzing these trade-offs is essential, and AI-RADAR offers analytical frameworks at /llm-onpremise to support these decisions.

Future Outlook for AI Infrastructure

The development of memories like ZAM is a step forward in the search for more sustainable and efficient AI hardware. Although specific performance figures and commercial availability have yet to be announced, the SoftBank and Intel initiative signals a clear direction: improving energy efficiency without sacrificing the capabilities that the most demanding AI workloads require.

This innovation could not only shrink the energy footprint of data centers but also enable new forms of AI deployment, for example closer to the edge or in settings with tighter power constraints. Running LLMs and other complex models at lower power is a key enabler for broader, more decentralized adoption of artificial intelligence, giving organizations greater flexibility and control.
