Spotify and the New Era of Artist Verification

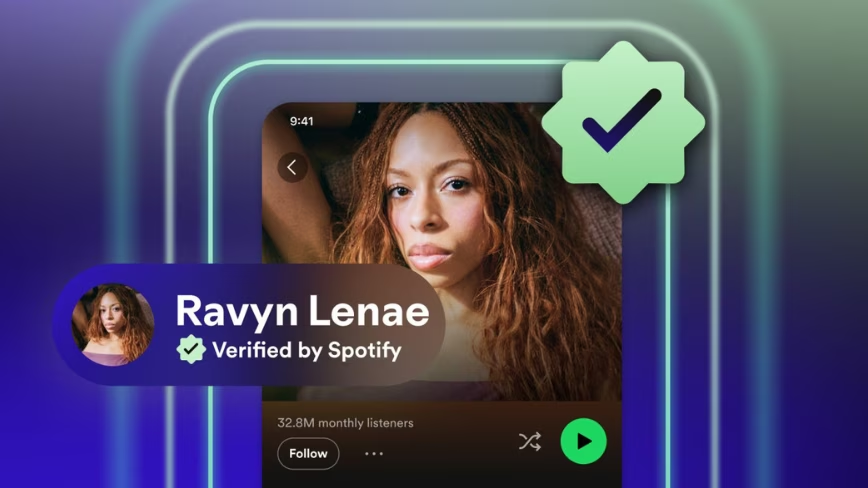

Spotify has announced the introduction of a verified badge for artists, an initiative aimed at strengthening authenticity and trust within its vast music streaming platform. The badge, represented by a green checkmark, will appear on artist profiles and next to their names, signaling their verified identity to users. This rollout will occur in the coming weeks, marking a significant step in managing digital identity within the music streaming industry.

To obtain this recognition, artists will need to meet specific criteria. These include consistent engagement with their listener base, full compliance with platform policies, and, crucially, an identifiable real-world presence. These requirements underscore Spotify's intent to ensure the badge is a true indicator of authenticity and not merely an aesthetic symbol.

The Exclusion of Generative AI and Its Implications

A particularly relevant aspect of this initiative is the explicit exclusion of content farms and artist profiles generated entirely by artificial intelligence. Spotify's decision highlights a growing concern in the digital sector about content provenance and authenticity in the era of generative AI. As large language models (LLMs) and other AI models become more capable of creating music, lyrics, and even entire artistic identities, the line between human and artificial work becomes harder to draw.

For businesses and IT operators managing large volumes of data, this trend raises fundamental questions about data governance and sovereignty. The ability to verify the origin of content, whether musical, textual, or otherwise, becomes essential for compliance, security, and building trust. Spotify's exclusion of AI-generated profiles reflects a broader need to establish clear boundaries and verification mechanisms in a digital ecosystem increasingly permeated by AI.

Data Governance and Sovereignty in the AI Era

Spotify's decision, although specific to the music industry, resonates with the challenges many organizations face in managing AI-generated or AI-influenced data. For CTOs, DevOps leads, and infrastructure architects, the issue of data provenance is critical. In environments with stringent compliance requirements or in air-gapped contexts, the ability to track and verify the origin of every piece of information is fundamental to maintaining data sovereignty and regulatory compliance.

Implementing robust data governance frameworks, which include authenticity verification and provenance management, is a strategic investment. This may involve developing self-hosted data pipelines, adopting on-premise solutions for processing and storage, or integrating advanced auditing systems. The choice between cloud and on-premise deployment, in this context, is often driven by the need for granular control over data and verification processes, especially when dealing with sensitive content or intellectual property.
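
As a rough illustration of what provenance management can look like inside a self-hosted pipeline, the sketch below records a content hash alongside origin metadata and later checks whether a piece of content still matches its record. It is a minimal sketch under stated assumptions, not a description of Spotify's system or of any particular product: the `ProvenanceRecord` fields, the function names, and the example source label are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical provenance entry; the field names are illustrative only."""
    content_sha256: str    # hex digest of the exact bytes that were ingested
    source: str            # free-form origin label chosen by the operator
    generated_by_ai: bool  # declared by the submitter, not detected
    recorded_at: str       # ISO 8601 timestamp supplied by the caller


def make_record(content: bytes, source: str, generated_by_ai: bool,
                recorded_at: str) -> ProvenanceRecord:
    """Build a record that binds the metadata to the content's SHA-256 digest."""
    digest = hashlib.sha256(content).hexdigest()
    return ProvenanceRecord(digest, source, generated_by_ai, recorded_at)


def verify(content: bytes, record: ProvenanceRecord) -> bool:
    """Return True only if the content is byte-identical to what was recorded."""
    return hashlib.sha256(content).hexdigest() == record.content_sha256


def to_json_line(record: ProvenanceRecord) -> str:
    """Serialize a record as one JSON line, suitable for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    rec = make_record(b"example audio bytes", "upload:artist-123", False,
                      "2024-01-01T00:00:00Z")
    print(to_json_line(rec))
    print(verify(b"example audio bytes", rec))  # content unchanged
    print(verify(b"modified bytes", rec))       # content altered
```

A hash of this kind only shows that content has not changed since it was recorded. It says nothing about whether the declared origin was truthful, which is why such records are usually paired with identity checks and audit processes.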

Future Perspectives and the Trade-offs of Digital Verification

Spotify's initiative is a clear signal of how platforms are reacting to the proliferation of AI-generated content. However, the challenge of distinguishing the authentic from the artificial is constantly evolving. As AI models improve, verification methods will also need to adapt, requiring continuous innovation both at the platform level and in the underlying infrastructure.

For organizations running LLMs and other AI workloads, the trade-off between cloud and on-premise deployment is central. On the one hand, the efficiency and scalability of cloud solutions can be attractive. On the other hand, the control, security, and data sovereignty that an on-premise or hybrid deployment can offer become paramount when content provenance and authenticity are at stake. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs, supporting strategic decisions on where and how to manage AI workloads securely and compliantly.
