Voluntary AI Model Evaluation in the US: A First Step Towards Governance

The US Department of Commerce recently announced a significant initiative in the landscape of artificial intelligence governance. Five major AI research labs, including giants like Google, Microsoft, and xAI, have agreed to submit their most advanced models for government evaluation prior to their public release. This collaboration, while voluntary in nature and lacking a formal legal basis, represents the United States' most concrete attempt to establish a form of oversight over AI systems.

The agreement stems from a growing awareness of the potential risks that extremely powerful AI models can pose to national security. The so-called "Mythos crisis" – an unspecified event that evidently raised concrete concerns – forced the administration to confront a crucial question: how to effectively evaluate an AI model before it becomes publicly accessible, especially when its capabilities could have strategic implications? The lack of a formal evaluation mechanism has led to this pragmatic, albeit informal, solution.

The Challenges of Evaluating Large Language Models

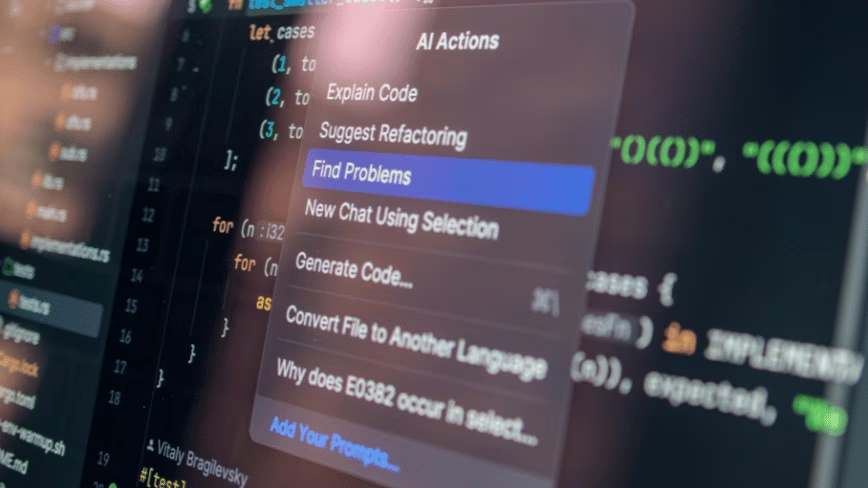

Evaluating Large Language Models (LLMs) and other advanced AI systems presents inherent complexities that go beyond traditional performance benchmarks. These models, especially the latest generation, can exhibit unpredictable emergent behaviors, generate potentially harmful or manipulative content, or be susceptible to vulnerabilities that could be exploited. For organizations considering the deployment of LLMs in self-hosted or air-gapped environments, the ability to conduct rigorous internal evaluation is fundamental to ensuring compliance, data security, and information sovereignty.

The challenge lies not only in identifying the model's capabilities but also in understanding its limitations and potential biases. This requires sophisticated testing pipelines, which often simulate real-world usage scenarios and stress-test the model under extreme conditions. The focus shifts from mere accuracy to robustness, security, and ethics – crucial aspects for any enterprise deployment, whether on-premise or in the cloud. The need to thoroughly understand how a model behaves before its release is a shared requirement for both governments and companies operating in regulated sectors.

Implications for Data Sovereignty and On-Premise Deployment

The US initiative, while a government agreement, raises relevant questions for companies managing AI workloads. The possibility of an external entity evaluating a model before release highlights the increasing importance of trust and transparency. For organizations prioritizing data sovereignty and complete control over their infrastructure, such as those opting for self-hosted or bare metal solutions, the ability to replicate and surpass such evaluation processes internally becomes a critical factor.

The decision to deploy an LLM on-premise is often driven by the need to keep sensitive data within one's own infrastructural boundaries, complying with regulations like GDPR or specific requirements of sectors such as finance or healthcare. In this context, pre-deployment evaluation is not just about functional security but also regulatory compliance and resilience against potential attacks or manipulations. Understanding the trade-offs between rapid adoption of innovative models and the need for thorough evaluation is essential for CTOs and infrastructure architects. AI-RADAR, for example, offers analytical frameworks on /llm-onpremise to support the evaluation of these trade-offs in on-premise deployment contexts.

Future Prospects of AI Governance

This move by the Department of Commerce, though not legally binding, sets an important precedent. It demonstrates the willingness of major tech companies to collaborate with institutions to address AI-related concerns, even in the absence of specific legislation. This "soft law" approach could serve as a bridge towards more structured future regulations as the understanding of AI's capabilities and risks evolves.

The discussion on AI governance is global and complex, with debates ranging from ethical regulation to national security. The US initiative underscores how public-private collaboration can be a mechanism for mitigating risks while maintaining a degree of flexibility. For businesses, this means that the ability to demonstrate the security and reliability of their AI systems, whether developed internally or adopted from third parties, will increasingly become a fundamental requirement, regardless of the deployment context.

💬 Comments (0)

🔒 Log in or register to comment on articles.

No comments yet. Be the first to comment!