Vercel, the company best known for developing the popular open-source framework Next.js, recently disclosed a data leak that led to the compromise of login credentials belonging to some of its customers. The incident, which has raised concern across the web development community, was attributed to Context.ai, a third party involved in Vercel's operations.

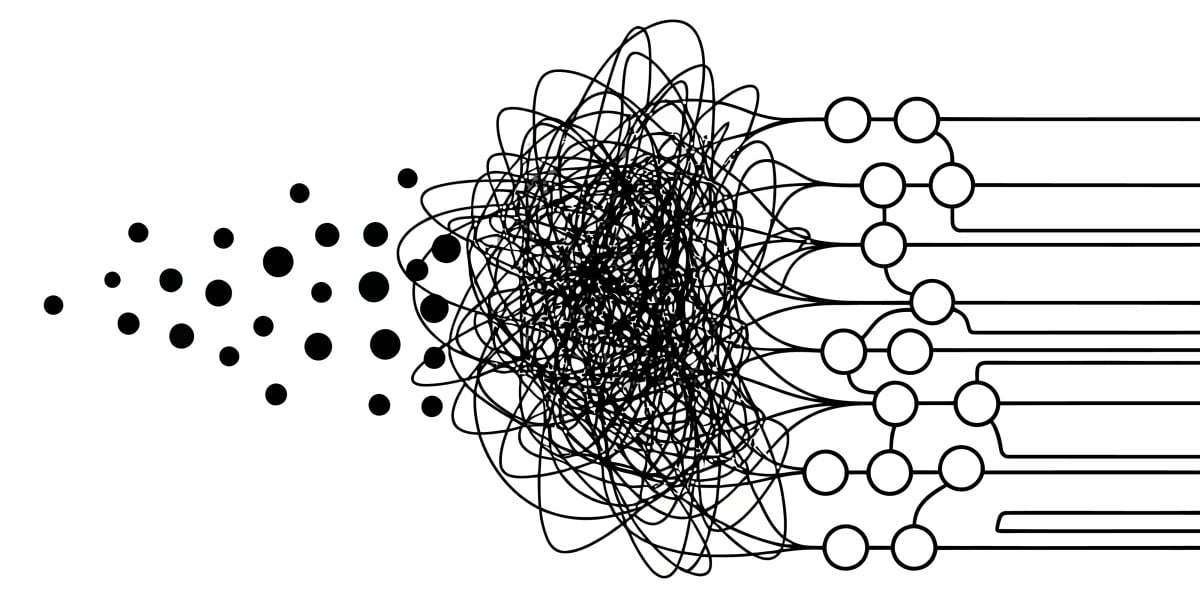

According to reports, the specific cause was an "agentic OAuth tangle". Problems of this kind expose the complexity and potential weak points of modern software architectures, especially those that integrate external services and agents handling authentication and authorization.

Technical Details and the Nature of the Incident

According to Vercel's communications, the compromise was triggered by an issue tied to an "agentic OAuth tangle" attributed to Context.ai. The term "OAuth tangle" suggests a complex and possibly incorrect configuration or interaction within OAuth, the protocol widely used to let third-party services access user resources without directly exposing credentials. An "agentic" context implies the involvement of software agents or automated systems acting on behalf of a user or a service.

A scenario like this can arise when the permissions granted through OAuth are too broad or misconfigured, or when an automated agent exploits a weakness in the authentication flow to gain unauthorized access. Integrations that span several services, each with its own authentication and authorization mechanisms, can create blind spots or suboptimal configurations that malicious actors then target.
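The risk of overly broad grants can be made concrete with a small audit. The following is a minimal sketch, not a description of how Vercel or Context.ai handle OAuth: the scope names, the `Grant` type, and the `audit` helper are all hypothetical and exist only to illustrate comparing what an integration was granted against what it actually needs.

```python
from dataclasses import dataclass

# Hypothetical scope names, invented for illustration only.
BROAD_SCOPES = {"admin", "read:all", "write:all", "offline_access"}


@dataclass(frozen=True)
class Grant:
    client: str              # third-party app or agent holding the grant
    scopes: frozenset[str]   # scopes that were approved
    needed: frozenset[str]   # scopes the integration actually requires


def audit(grant: Grant) -> dict[str, list[str]]:
    """Return the granted scopes that are unnecessary and those that are broad."""
    excess = grant.scopes - grant.needed
    broad = grant.scopes & BROAD_SCOPES
    return {"excess": sorted(excess), "broad": sorted(broad)}


grant = Grant(
    client="example-agent",
    scopes=frozenset({"read:projects", "write:all", "offline_access"}),
    needed=frozenset({"read:projects"}),
)
report = audit(grant)
print(report)
```

Any scope that appears under `excess` is a candidate for removal, and any scope under `broad` deserves a closer look even when the integration claims to need it.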

Implications for Data Sovereignty and On-Premise Deployments

The incident involving Vercel and Context.ai underscores a crucial point for companies handling sensitive data, particularly in the context of Large Language Models (LLMs) and AI workloads: the security of third-party dependencies. Even when an organization implements robust internal security measures, the chain of trust can be broken by vulnerabilities in the external services it interacts with.

For CTOs, DevOps leads, and infrastructure architects evaluating self-hosted or on-premise alternatives for their AI/LLM stacks, this episode reinforces the importance of direct control over the entire pipeline. Data sovereignty, regulatory compliance, and the ability to operate in air-gapped environments become top priorities. An on-premise deployment requires an upfront CapEx investment and more complex management, but it can offer a degree of control and isolation that reduces exposure to third-party risk. For those weighing these trade-offs, AI-RADAR offers analytical frameworks at /llm-onpremise that explore the TCO and security implications in more depth.

Future Outlook and Risk Mitigation

Incidents like the one at Vercel highlight the need to carefully assess the risks associated with third-party integrations and with identity and access management (IAM). Companies should apply rigorous due diligence to external vendors, verifying that vendor security standards match their own and that contracts include clear clauses on liability in the event of a breach.

In addition, least-privilege principles, network segmentation, and continuous monitoring are essential for detecting and responding quickly to potential compromises. For organizations that choose a hybrid or on-premise approach, managing these aspects internally offers greater control, but it also requires dedicated skills and resources to maintain a high level of security and compliance. In this context, the choice between cloud and on-premise is not only a matter of cost or performance, but also of risk tolerance and of how much control an organization wants over its own data and infrastructure.
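Continuous monitoring of third-party access can start from something as simple as comparing observed activity with an expected baseline. The sketch below assumes a hypothetical access log and a hand-written `EXPECTED` mapping; the client names, resource names, and the `unexpected_access` helper are illustrative and do not reflect any real product's log format.

```python
from collections import Counter
from typing import NamedTuple


class AccessEvent(NamedTuple):
    client: str     # OAuth client or agent that presented the token
    resource: str   # resource it touched


# Hypothetical baseline: what each integration is expected to access.
EXPECTED = {
    "example-agent": {"projects"},
    "ci-bot": {"deployments", "projects"},
}


def unexpected_access(events: list[AccessEvent]) -> Counter:
    """Count events where a client touched a resource outside its baseline."""
    alerts: Counter = Counter()
    for event in events:
        # Clients missing from the baseline are allowed nothing.
        allowed = EXPECTED.get(event.client, set())
        if event.resource not in allowed:
            alerts[(event.client, event.resource)] += 1
    return alerts


events = [
    AccessEvent("example-agent", "projects"),
    AccessEvent("example-agent", "env-secrets"),
    AccessEvent("example-agent", "env-secrets"),
    AccessEvent("unknown-app", "projects"),
]
alerts = unexpected_access(events)
for (client, resource), count in alerts.items():
    print(f"ALERT {client} -> {resource}: {count} event(s)")
```

Treating unknown clients as having no allowed resources is a deliberate deny-by-default choice, so a newly connected integration shows up in the alerts until someone reviews it and adds it to the baseline.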
