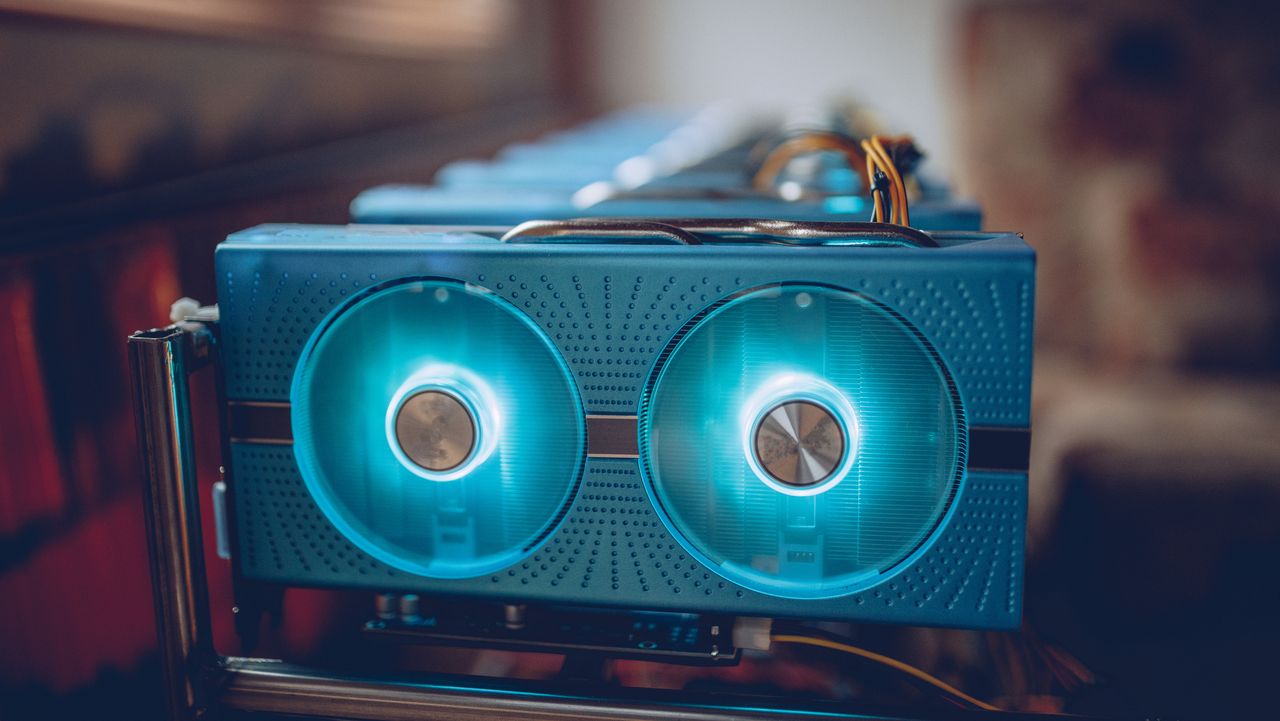

AMD and the Potential of Local AI: A "Computer" for Home Inference

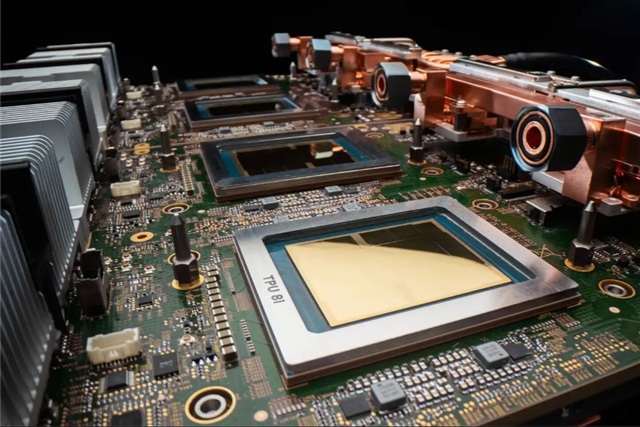

The growing capability of consumer hardware from vendors such as AMD is making it increasingly practical to run AI workloads, including Large Language Models, directly on local systems. This development opens new possibilities for on-premise ...