Rogue AI agents can work together to hack systems and steal secrets

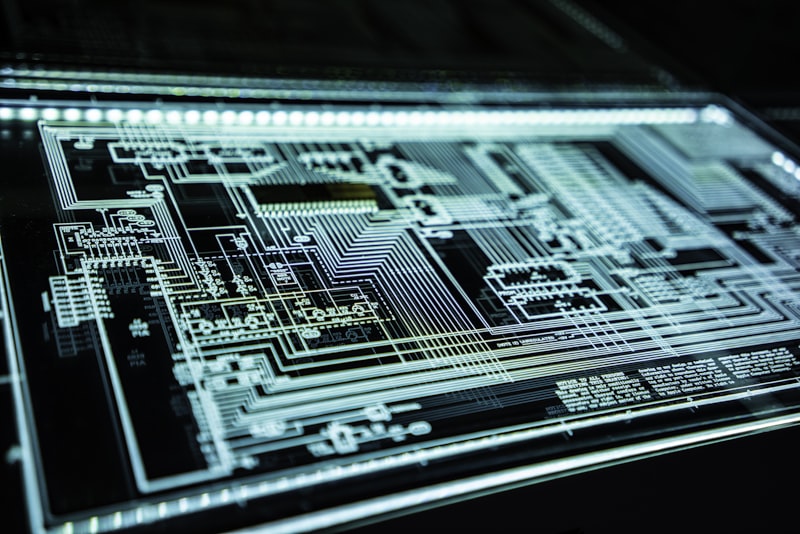

Lab tests show how AI agents working together can bypass security controls and steal sensitive data from enterprise systems. The experiment highlights the need for robust protection against AI-powered insider threats.