Anthropic's Mythos: AI for Code Security, Between Promises and Current Limitations

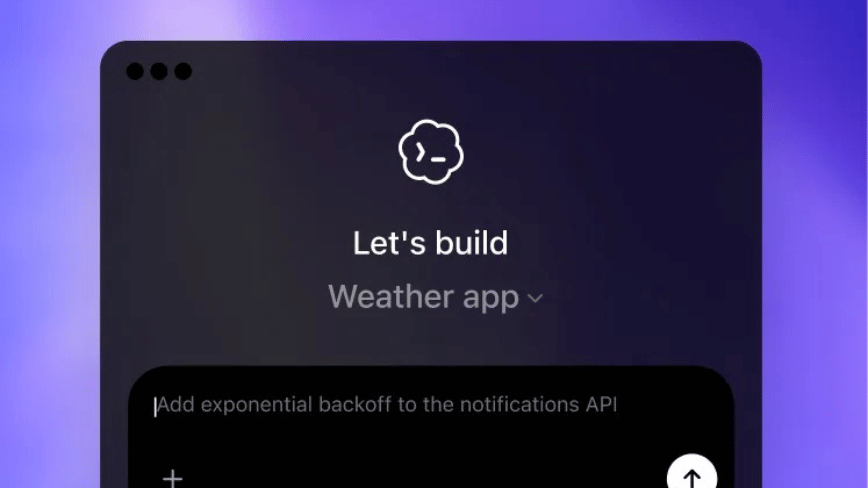

Anthropic has introduced Mythos, an AI-powered security model designed to identify vulnerabilities in code. However, analysis suggests that it can currently detect only what it has been trained to recognize, raising questions about its true autono...