AMD Strix Halo: 192GB Memory for On-Premise LLMs, a New Horizon?

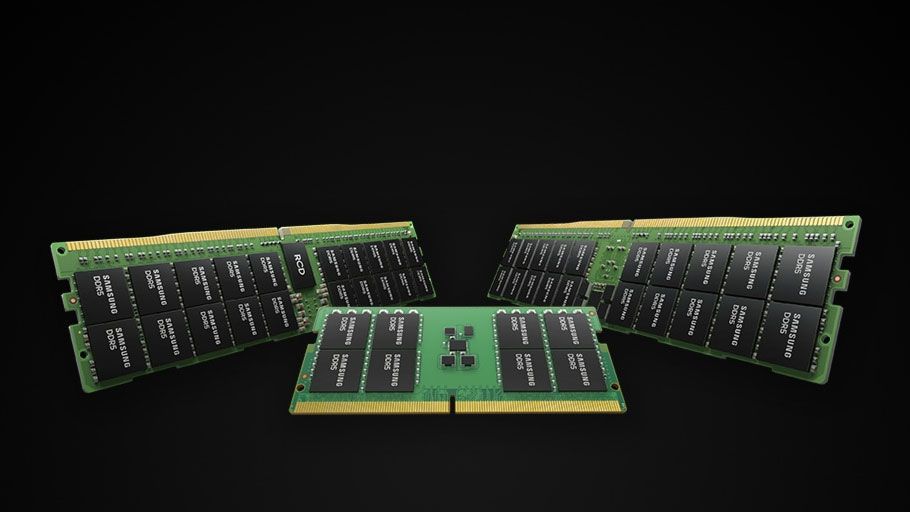

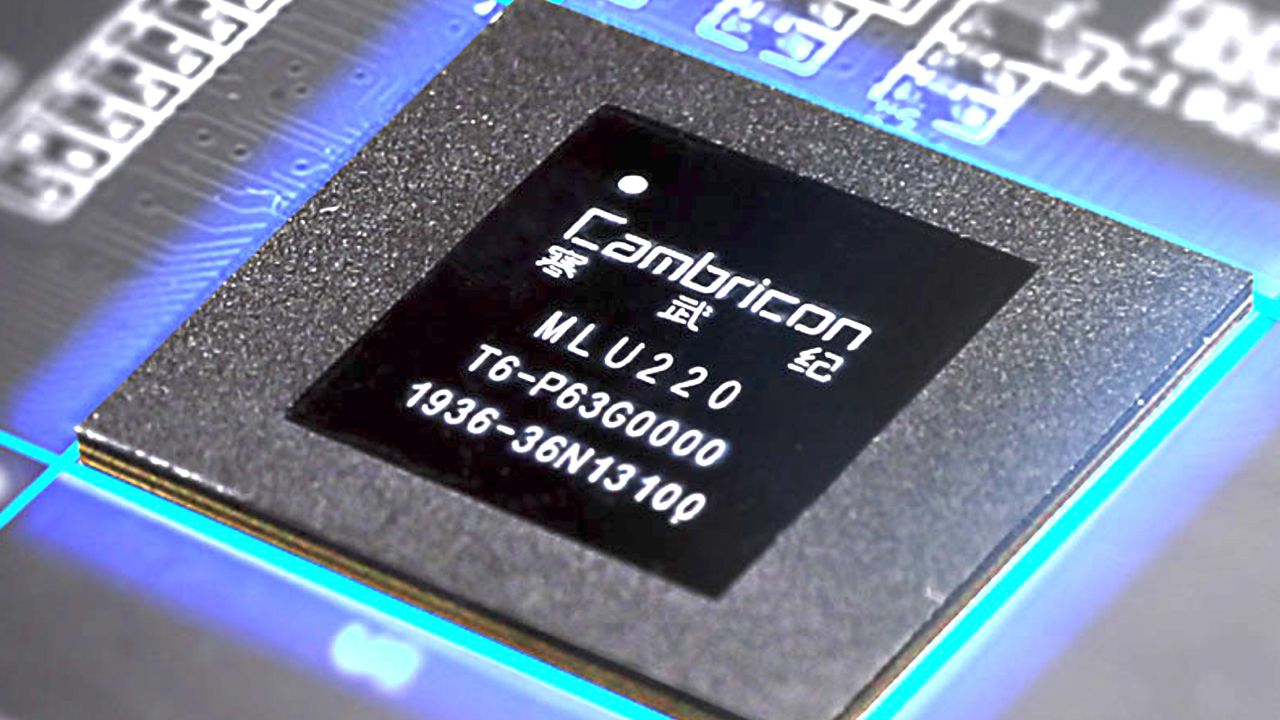

Recent rumors suggest that AMD's upcoming Strix Halo APU, potentially named "Gorgon Halo 495 Max" or "Ryzen AI Max Pro 495," could support up to 192GB of memory. This capacity, paired with a Radeon 8065S iGPU, would mark a significant advancement for ru...