The HBM Competition: Samsung, Nvidia, and TSMC Vie for the Future of AI

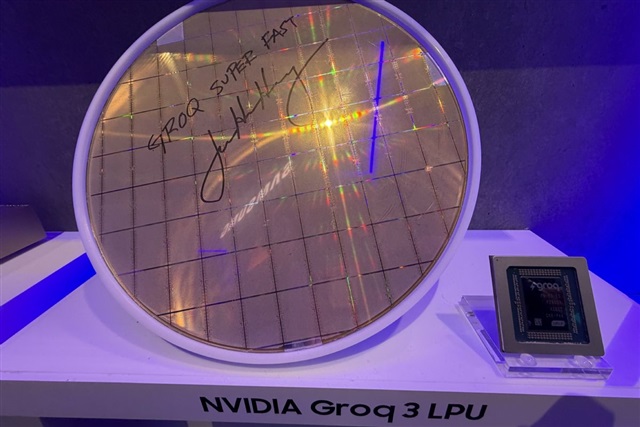

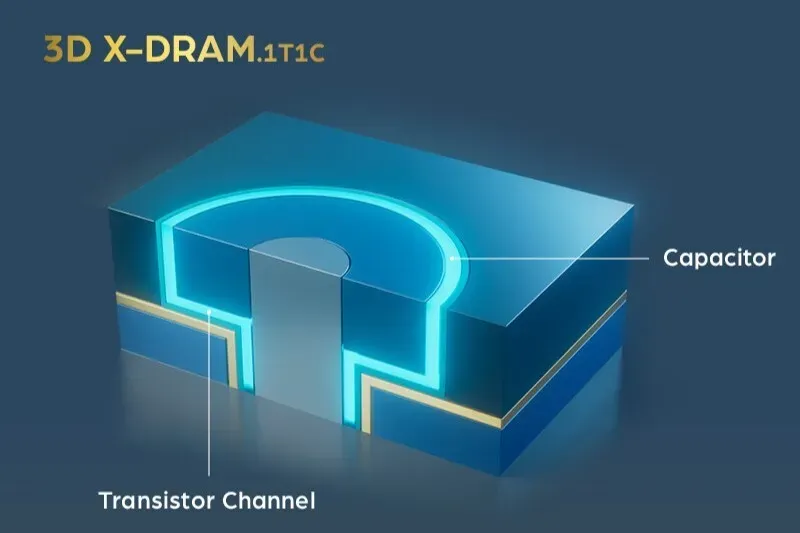

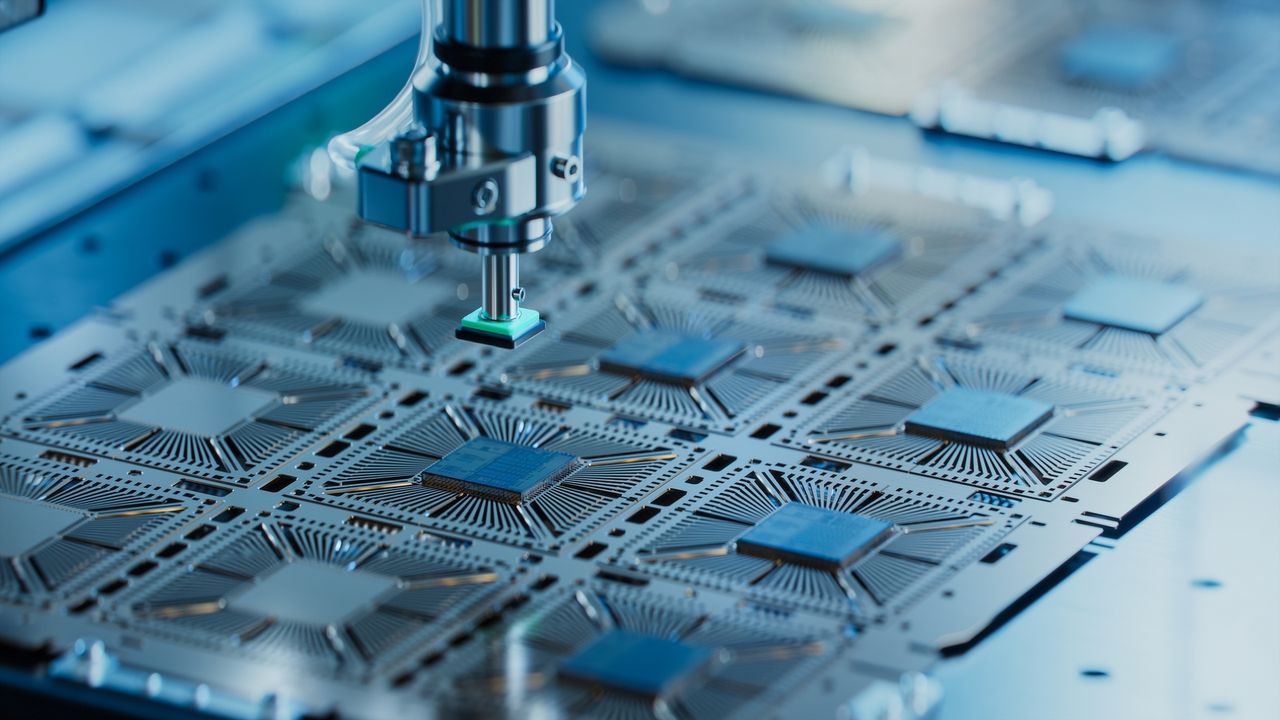

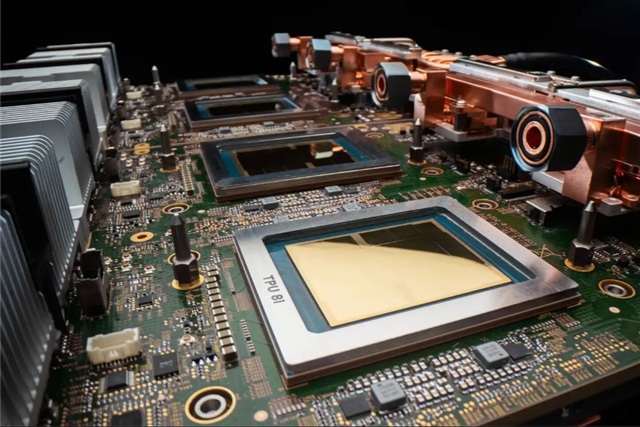

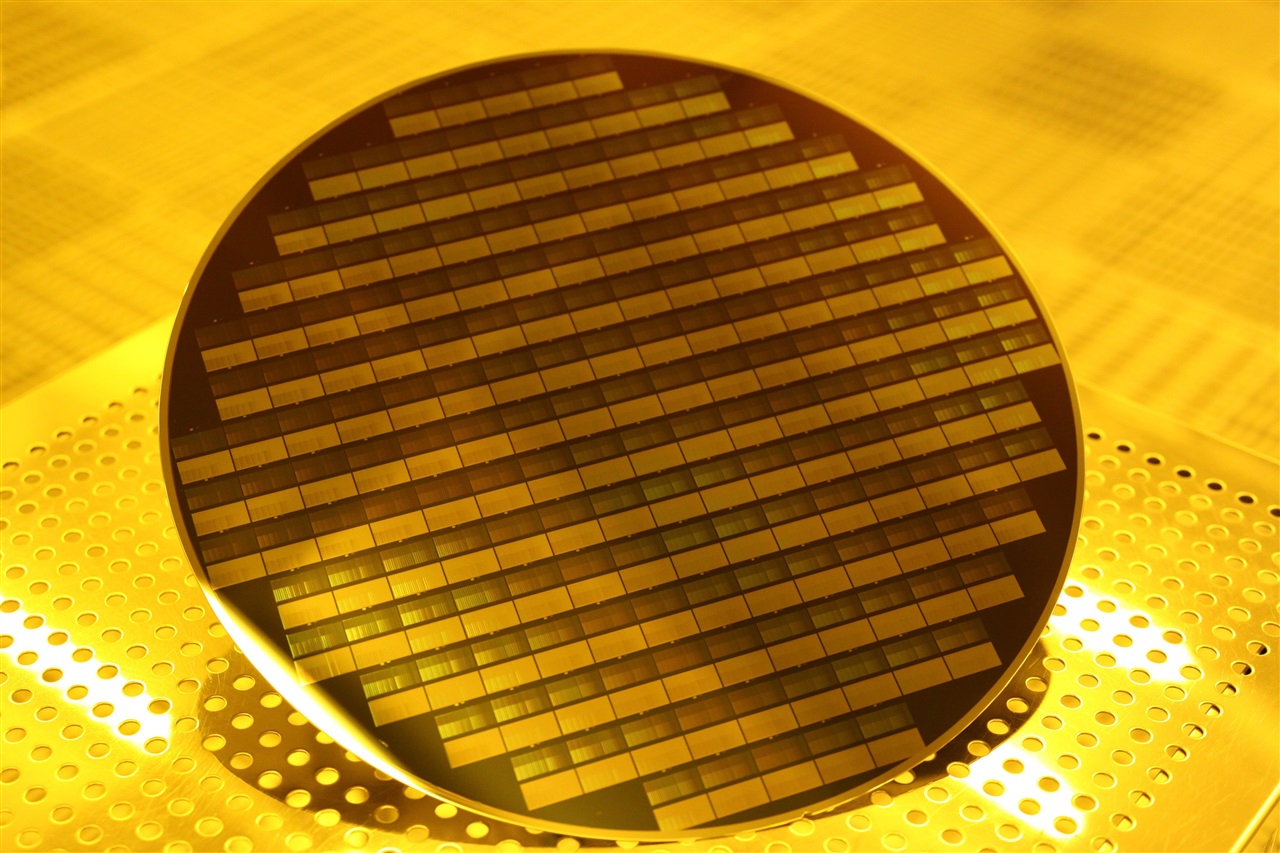

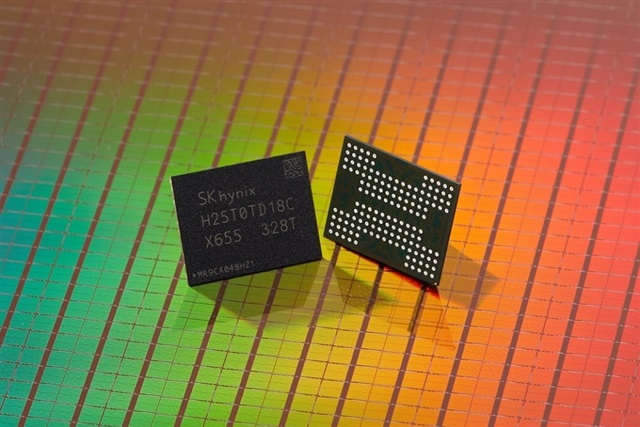

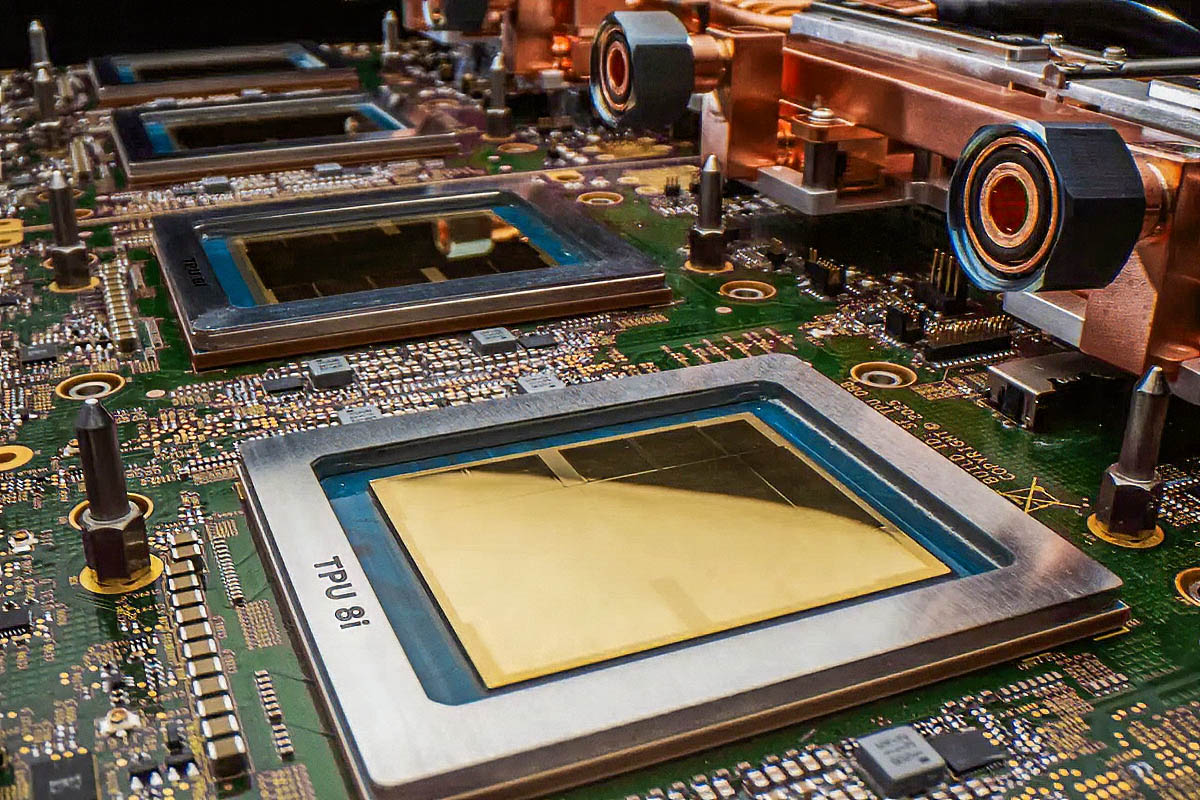

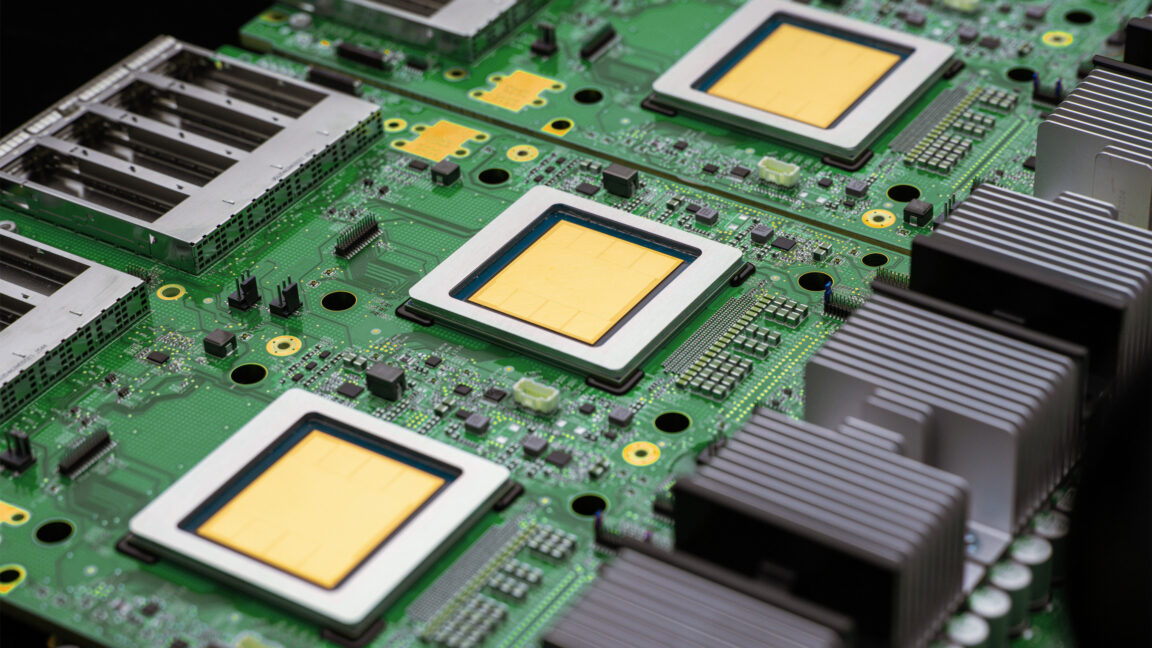

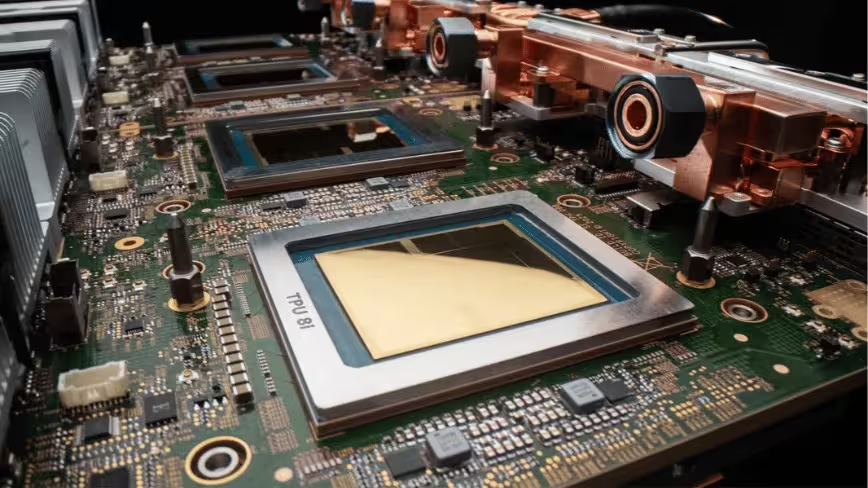

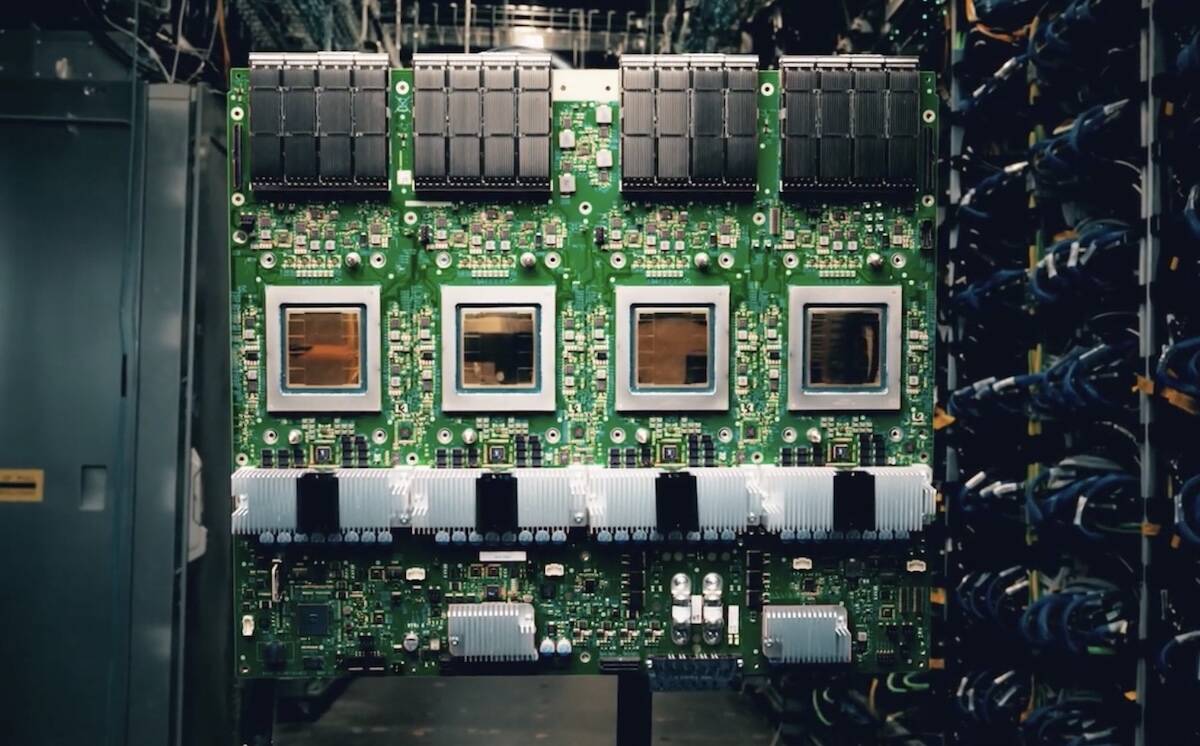

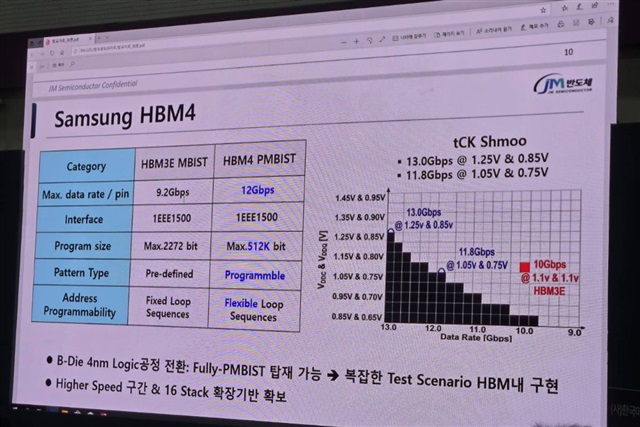

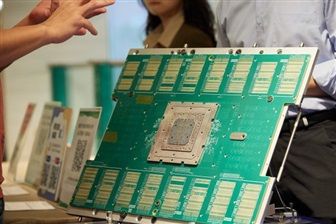

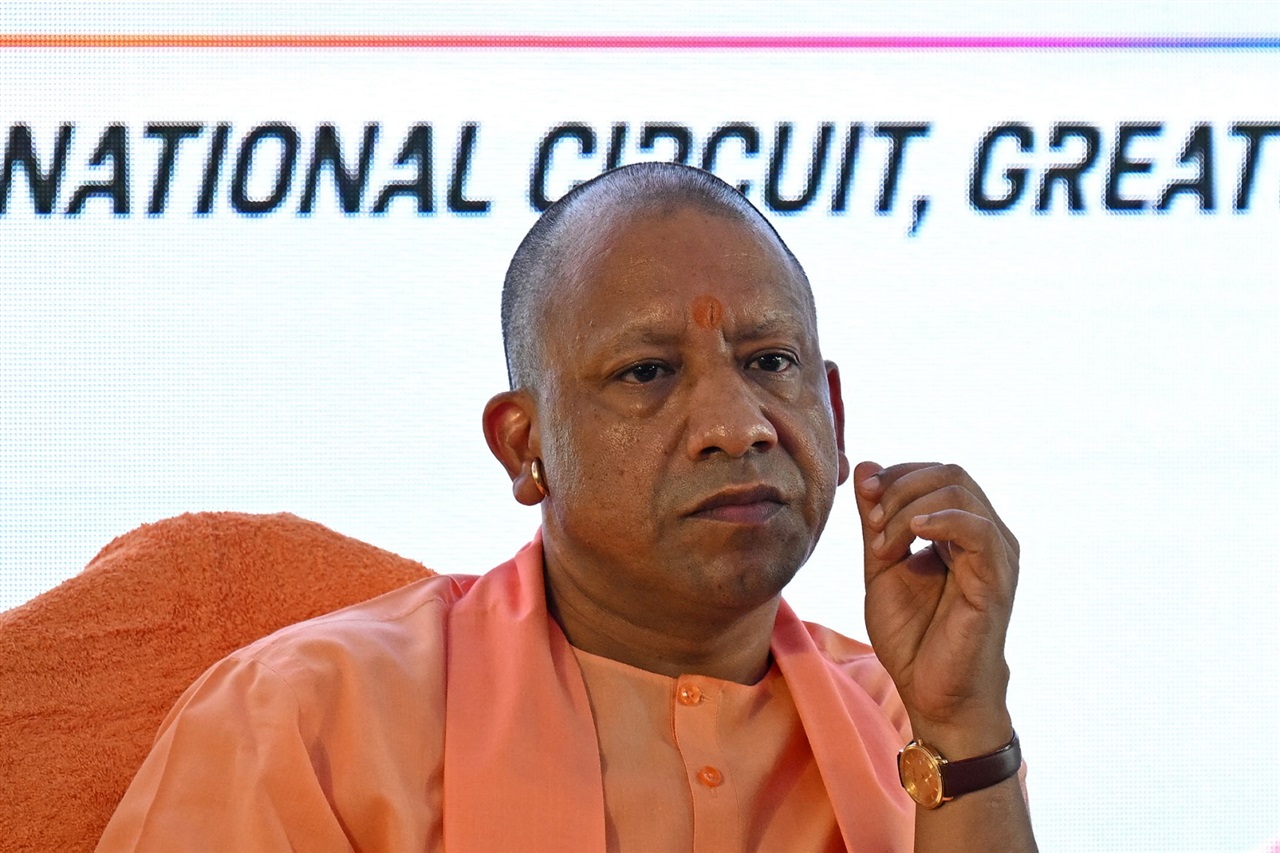

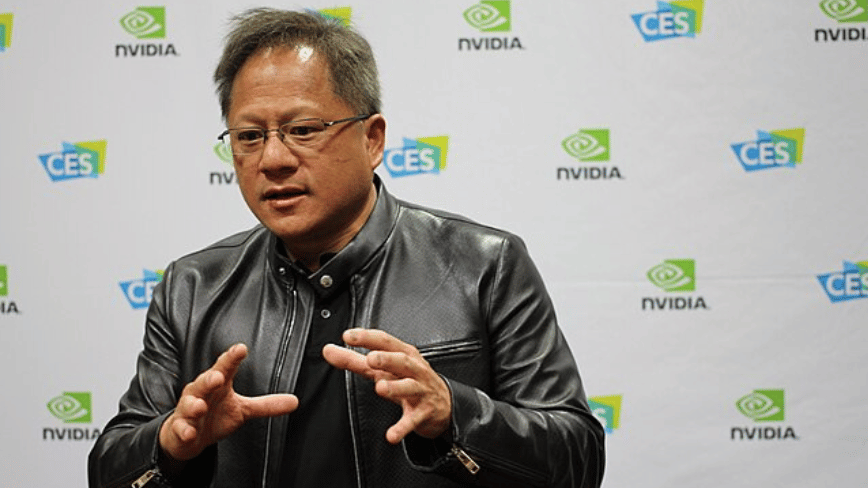

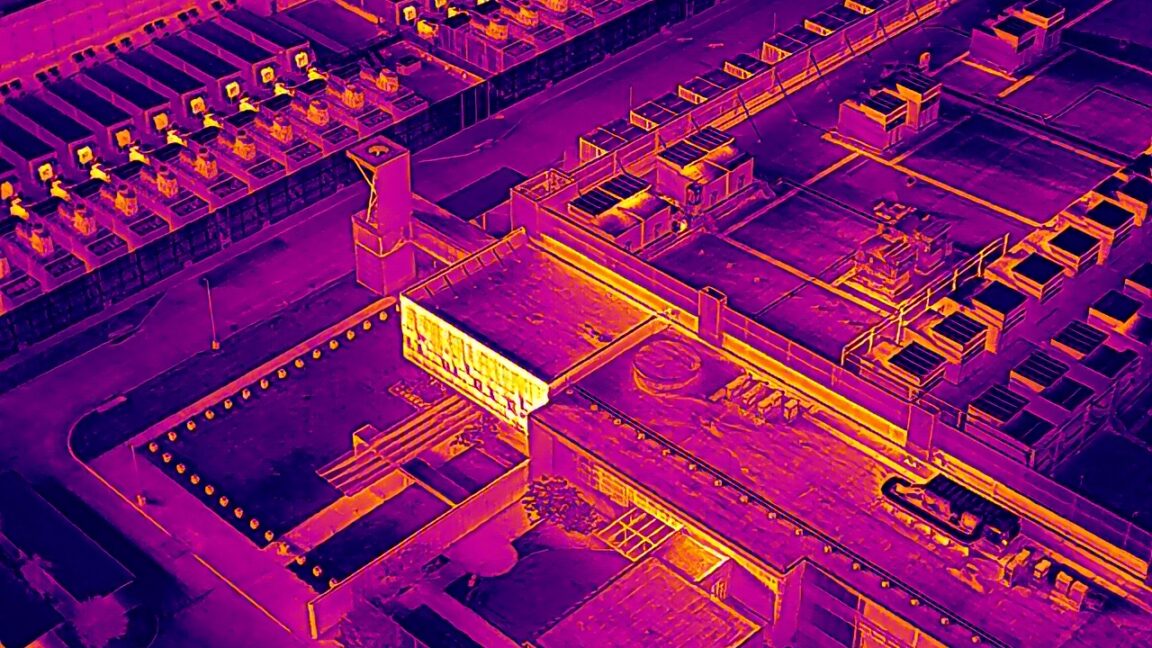

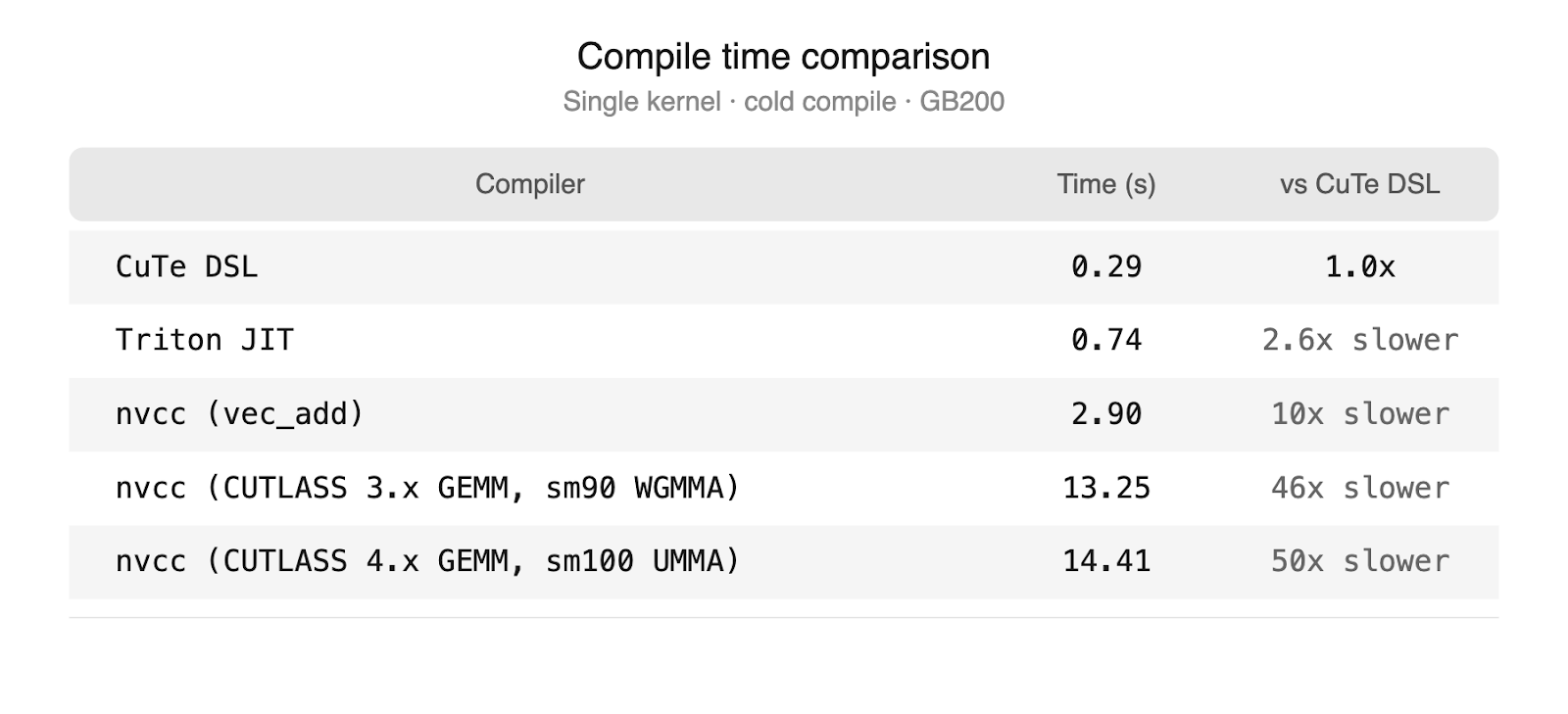

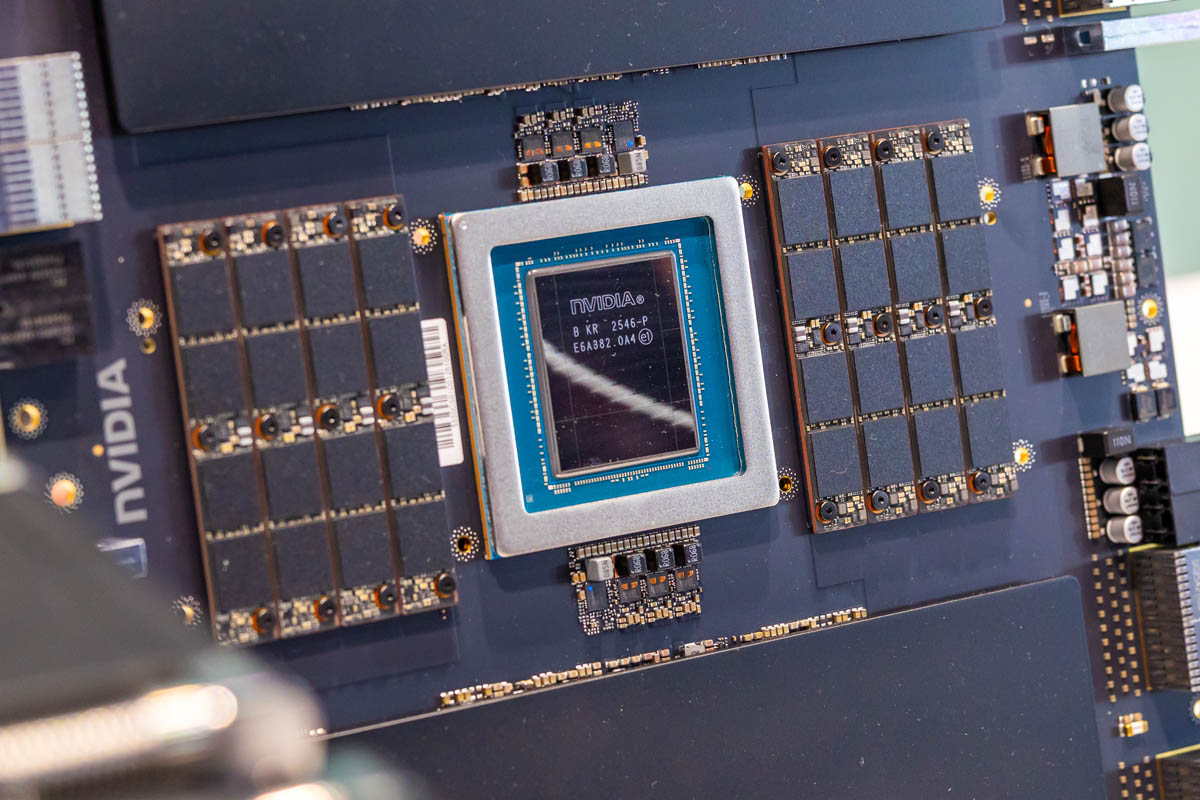

The High Bandwidth Memory (HBM) market is at the heart of growing competition among tech giants. Samsung is leveraging its production capacity to secure crucial orders from Nvidia for its AI accelerators, while TSMC intensifies its pushback. This mar...