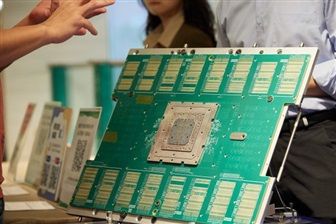

OpenAI Reportedly Taps Apple Suppliers for Hardware Expansion

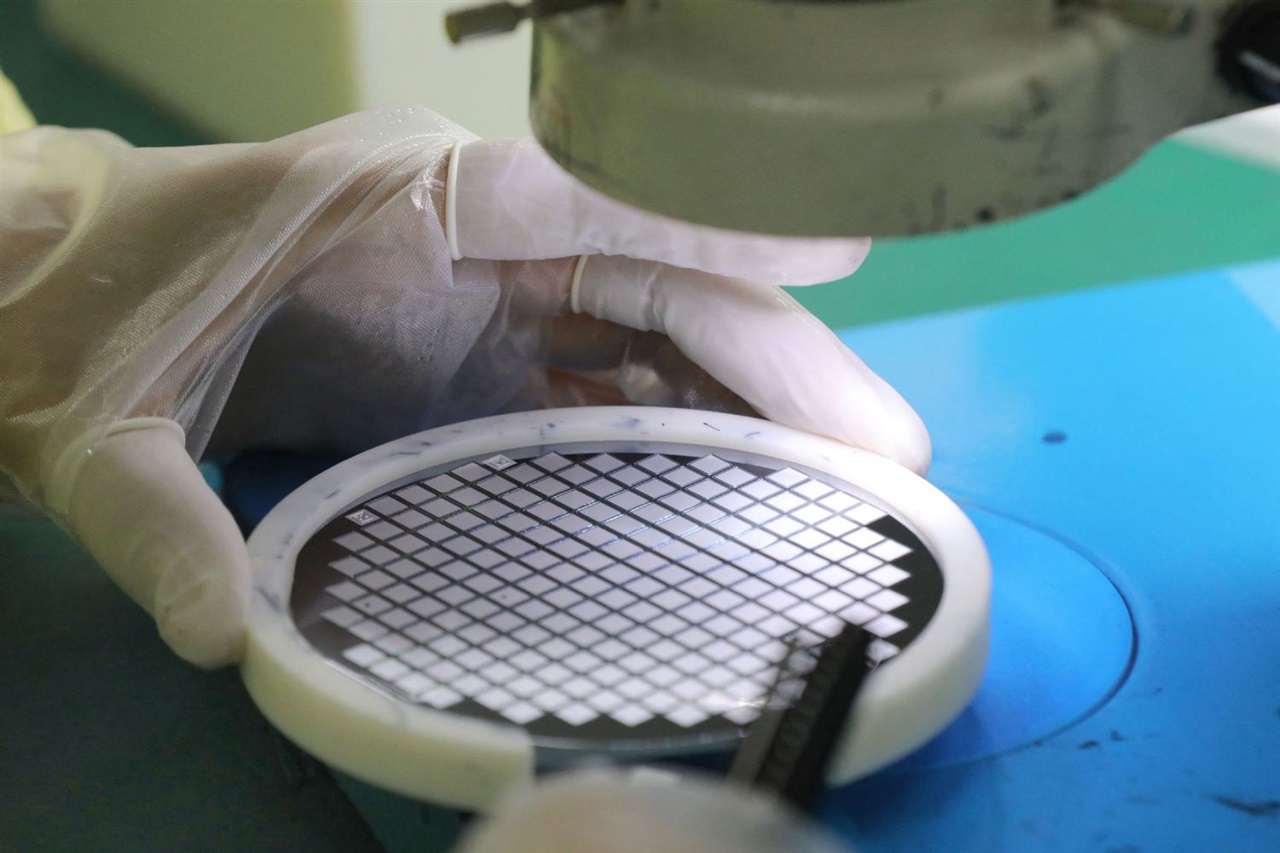

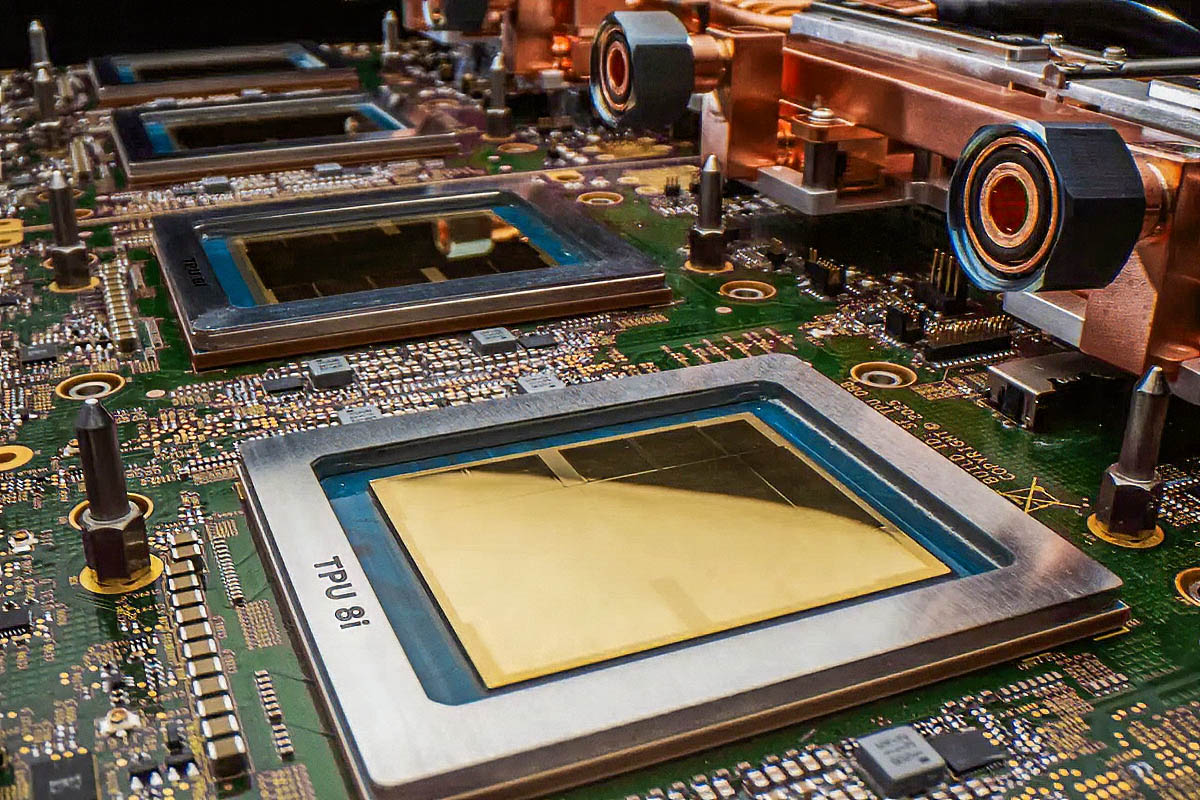

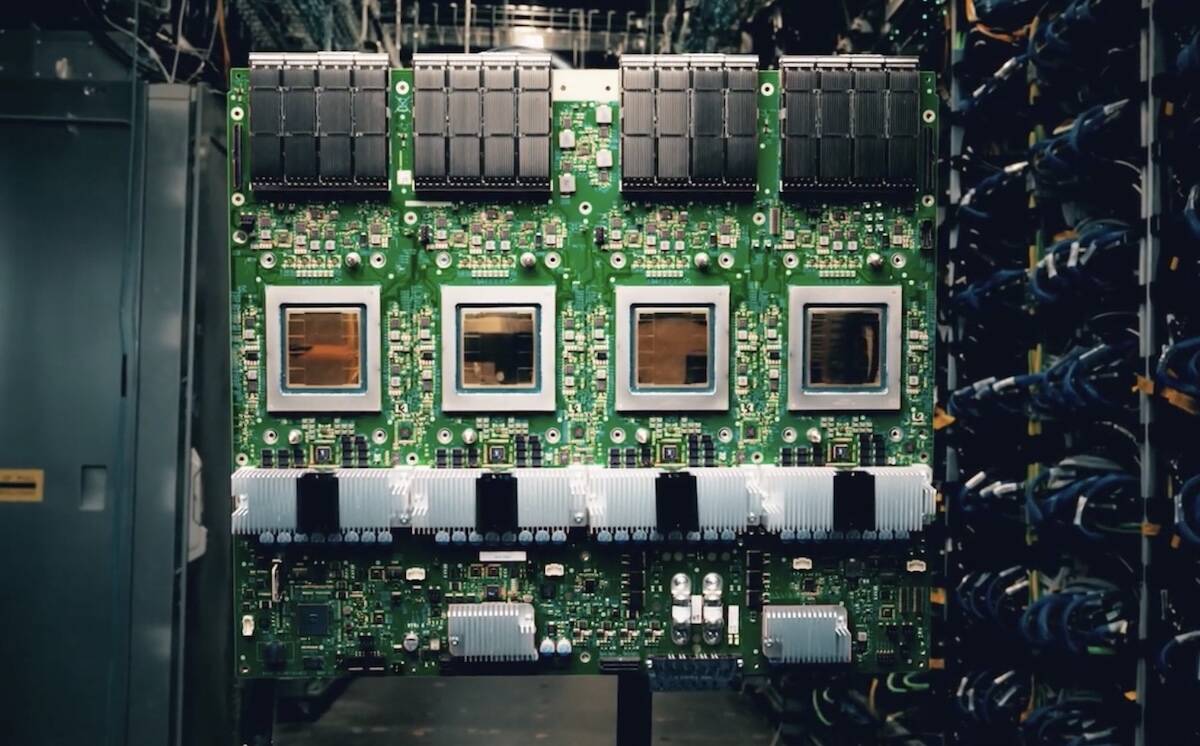

OpenAI is reportedly exploring collaborations with key Apple suppliers, including MediaTek, Qualcomm, and Luxshare, to bolster its hardware initiatives. The rumor, reported by analyst Ming-Chi Kuo, suggests a strategic expansion in the AI infrastruct...