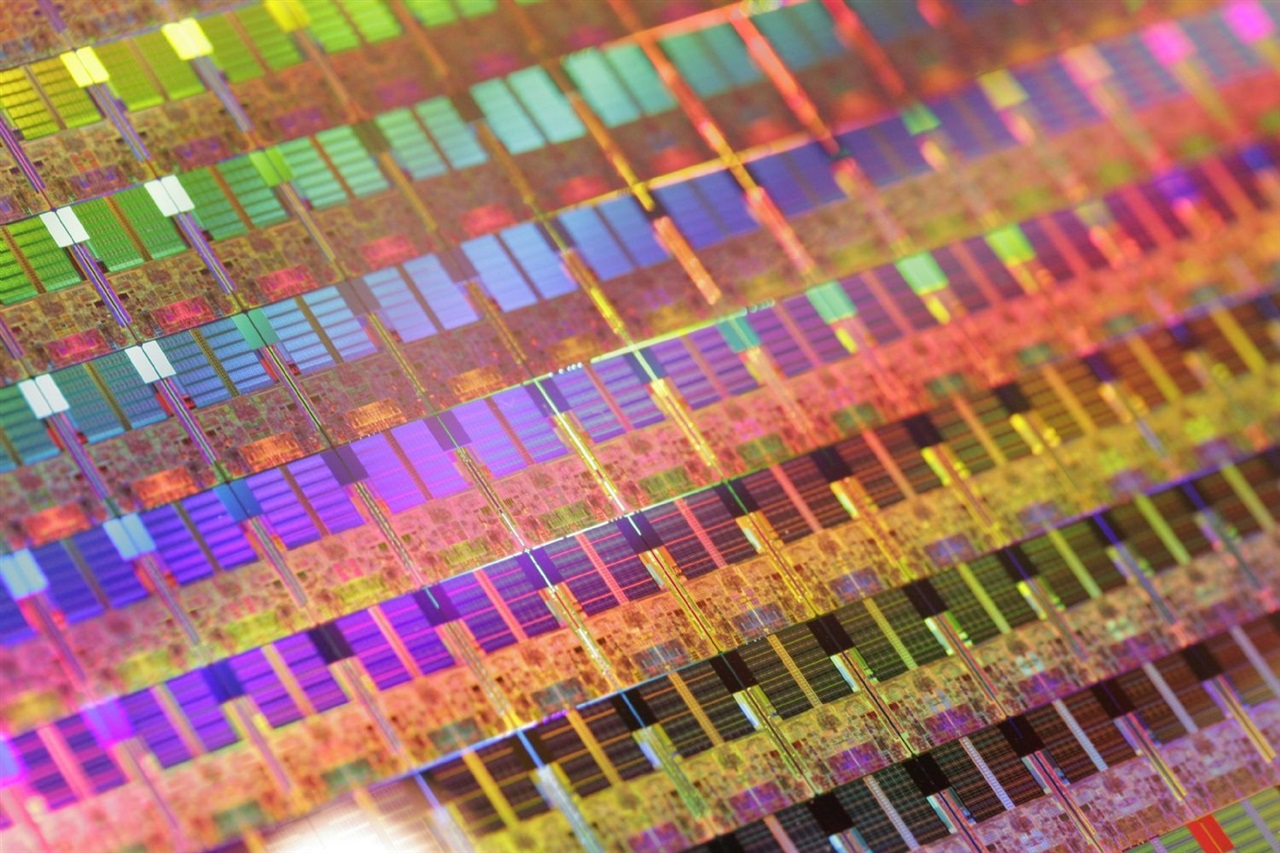

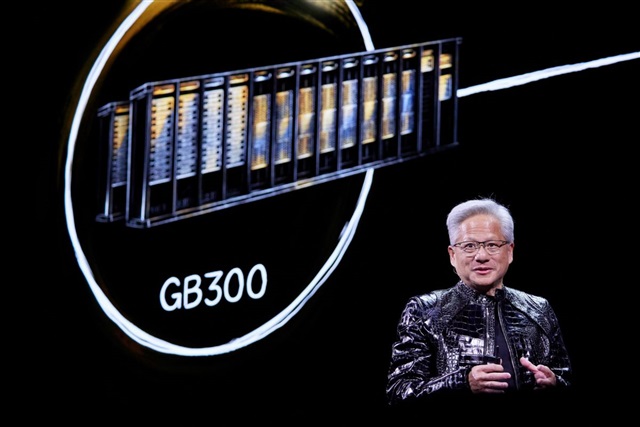

AI Revolutionizes Semiconductor Testing: AEM CEO's Vision

AEM's CEO says artificial intelligence is radically transforming semiconductor testing. The shift brings new challenges and opportunities for the industry and is driving the adoption of more efficient and automated solution...