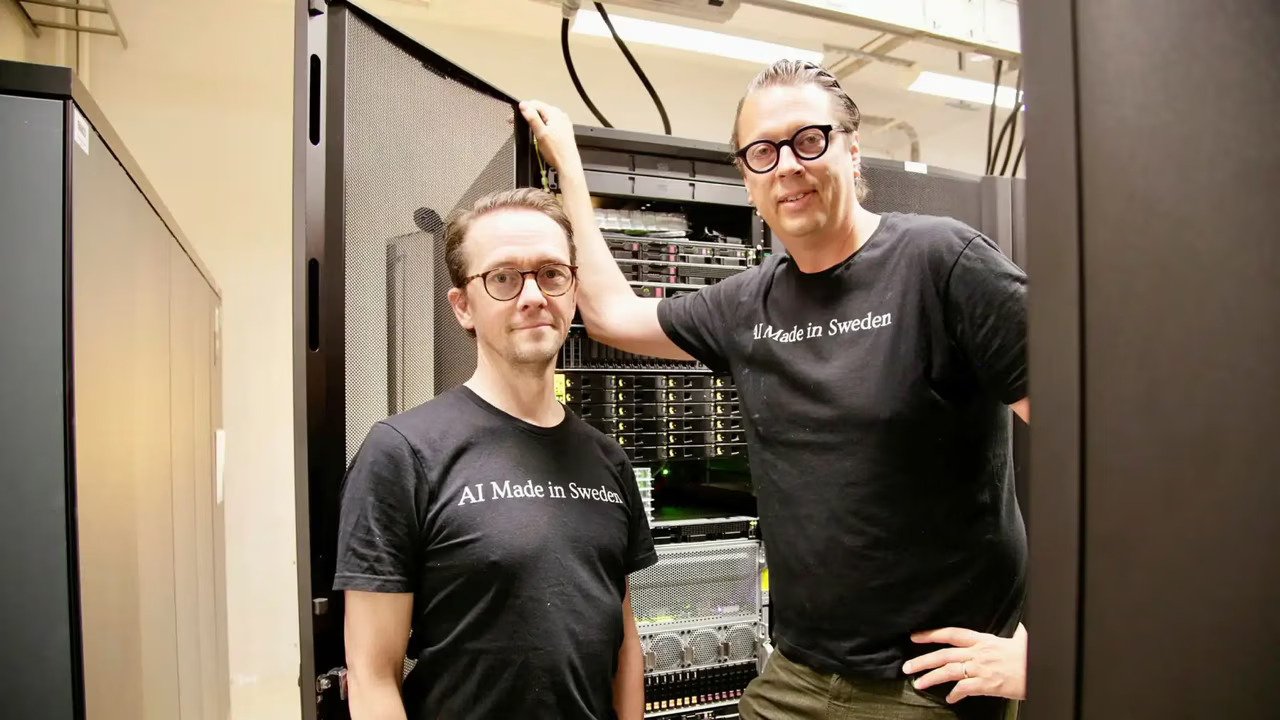

Google's AI efficiency shows search thriving, not dying

According to Digitimes, Google's recent progress in integrating artificial intelligence into its search engine shows that AI is enhancing, not replacing, existing search functionality. The company is achieving significant efficiency gains,...