Taiwan Semiconductor Materials: Competitive Scenarios and Impact on On-Premise AI

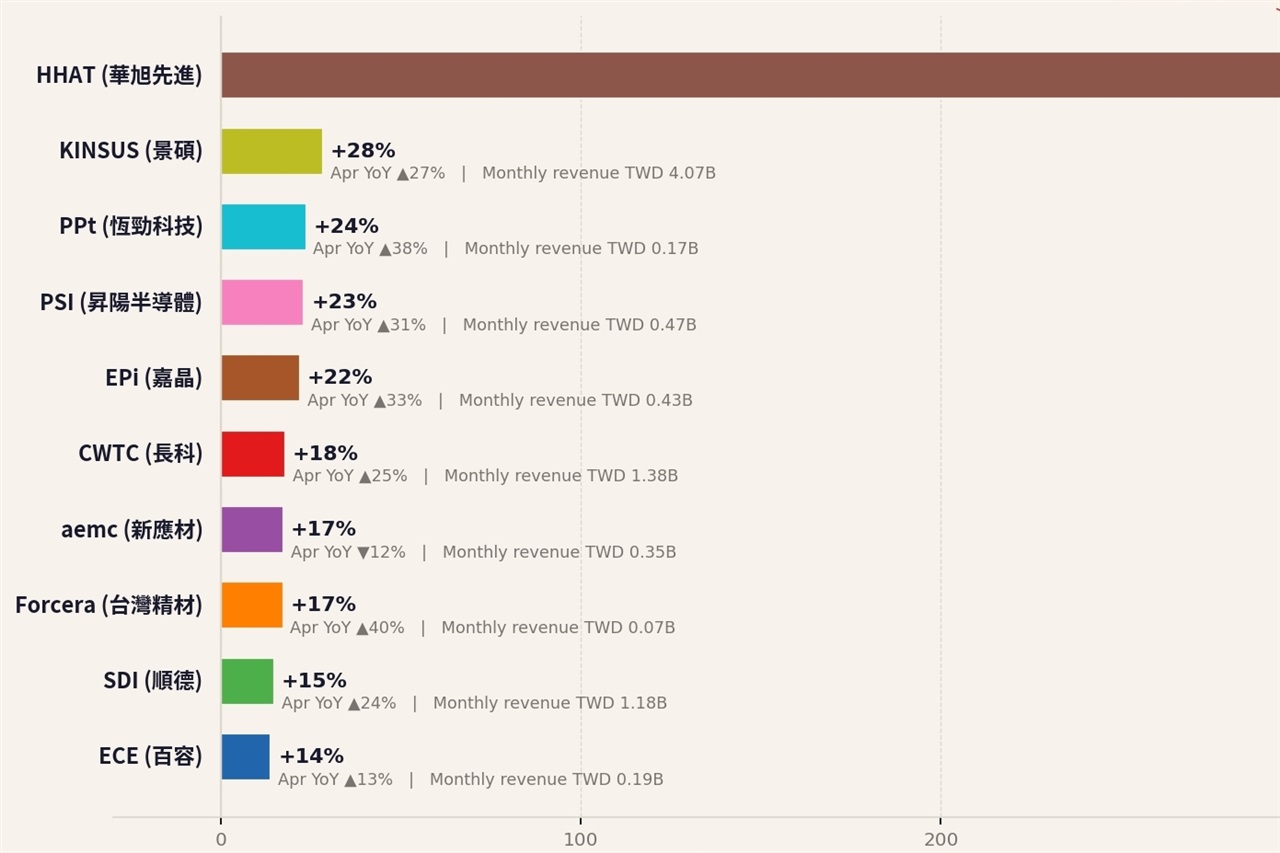

A Digitimes analysis for April 2026 highlights increasing polarization in Taiwan's semiconductor materials sector. This dynamic, characterized by two distinct 'races,' could significantly influence the global supply chain and, consequently, the costs...