Phison aiDAPTIV and Dimensity 9500: Boosting AI at the Edge

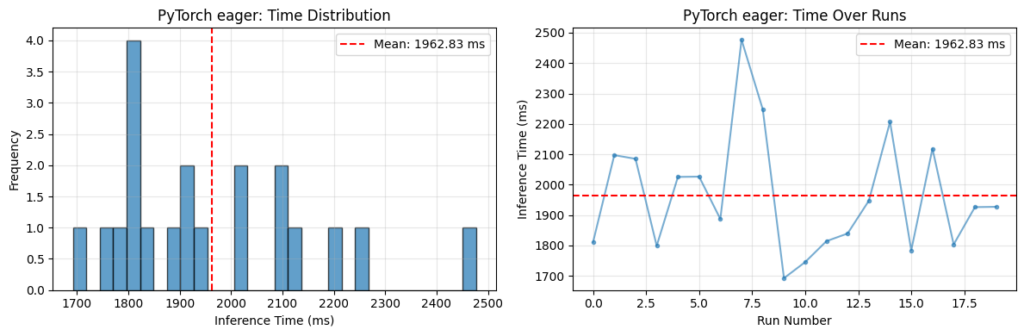

Phison has introduced aiDAPTIV, a solution designed to accelerate the deployment of AI workloads directly at the edge. Its integration with MediaTek's Dimensity 9500 processor highlights a focus on optimizing performance and energy efficiency for art...