Glassworm attack: Malicious code targets 151 GitHub repos and VS Code

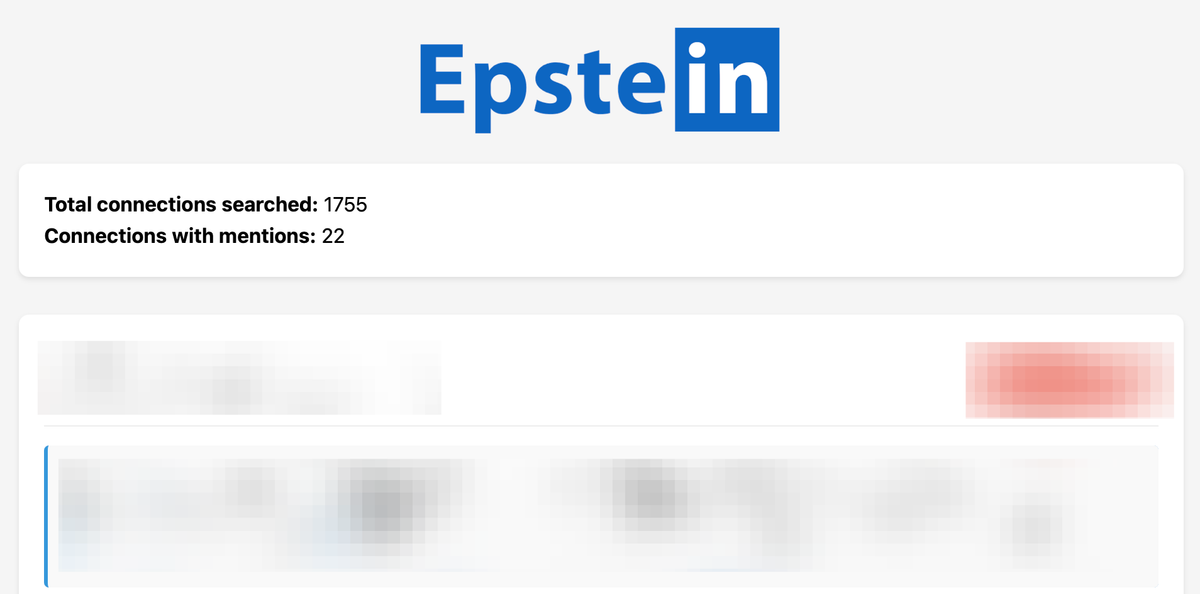

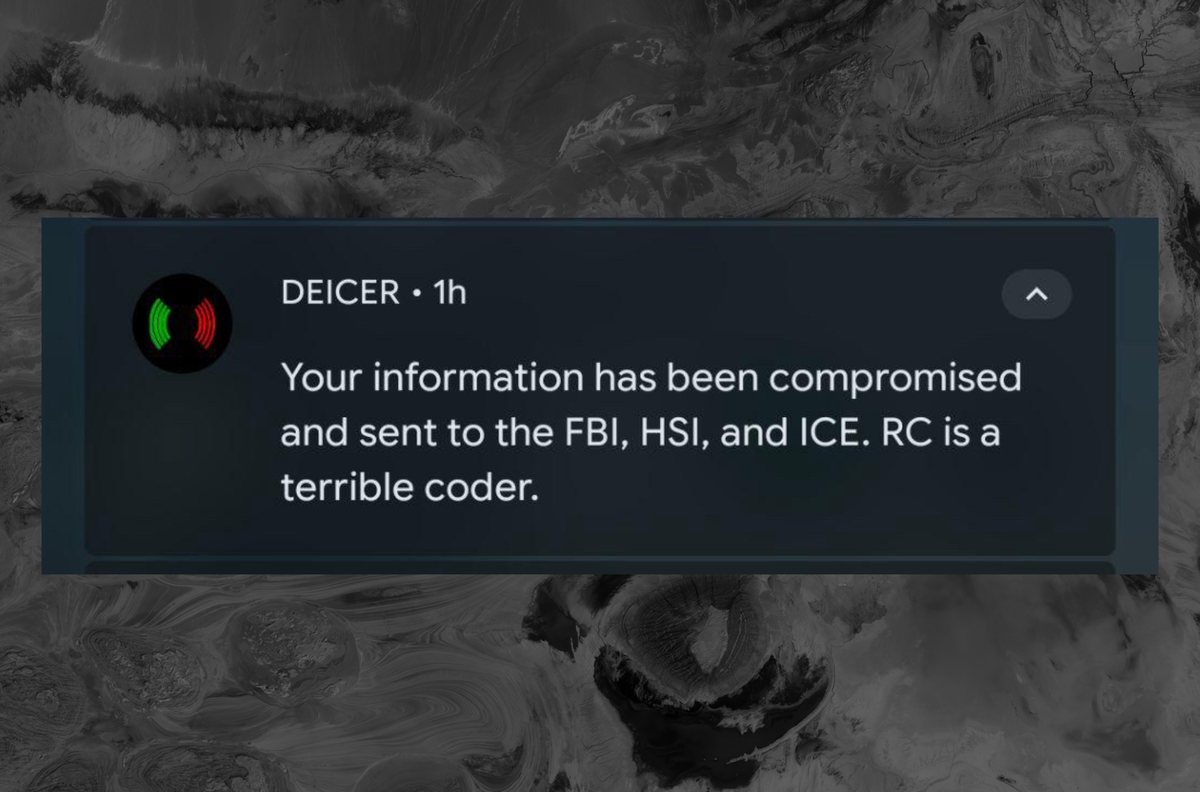

An attack named Glassworm has compromised 151 GitHub repositories, along with VS Code instances, and uses the blockchain to steal tokens, credentials, and secrets. The attack highlights the growing security risks in the open-source software supply chain.