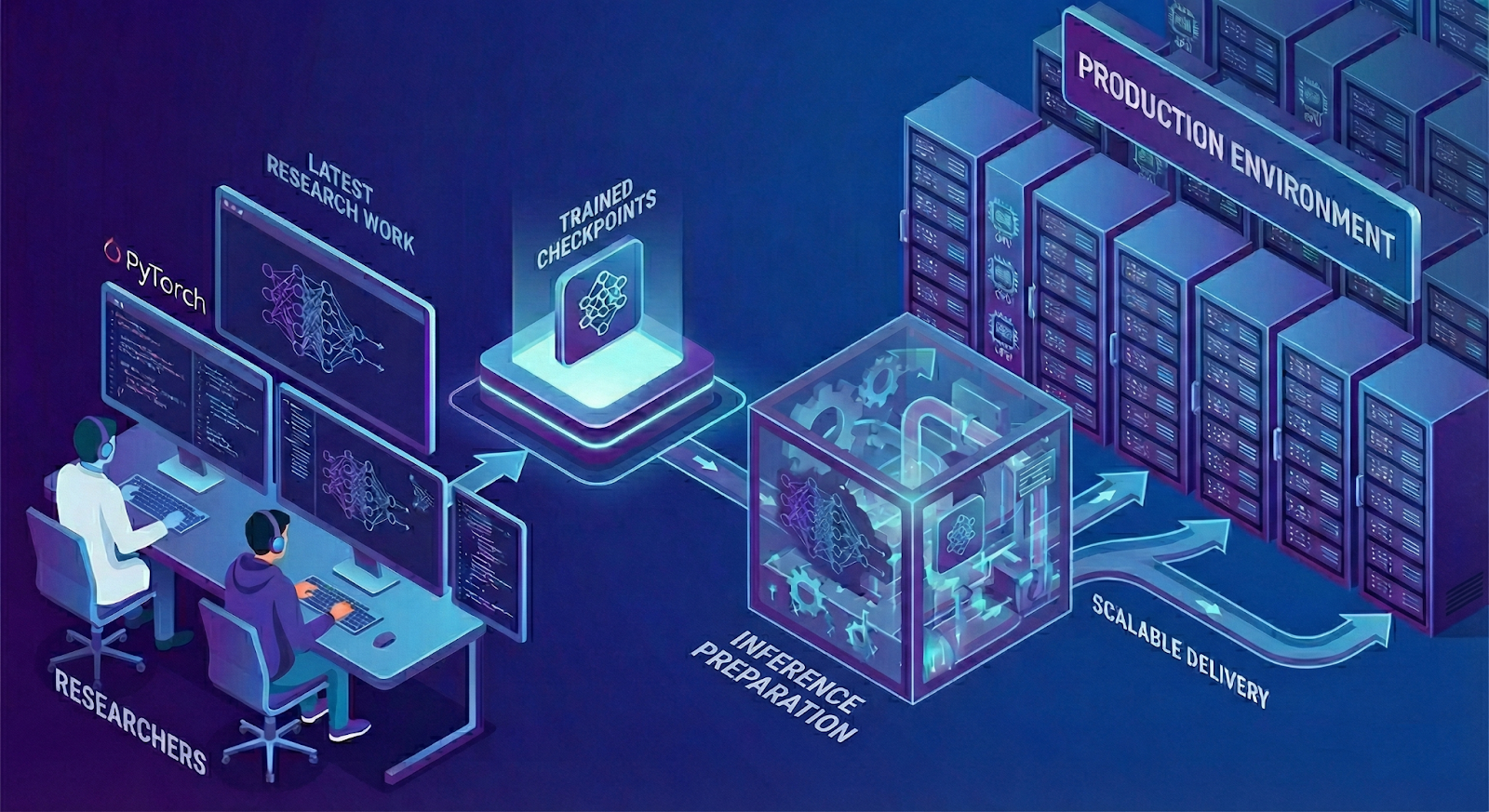

Scout AI: Artificial Intelligence for Advanced Weapon Systems

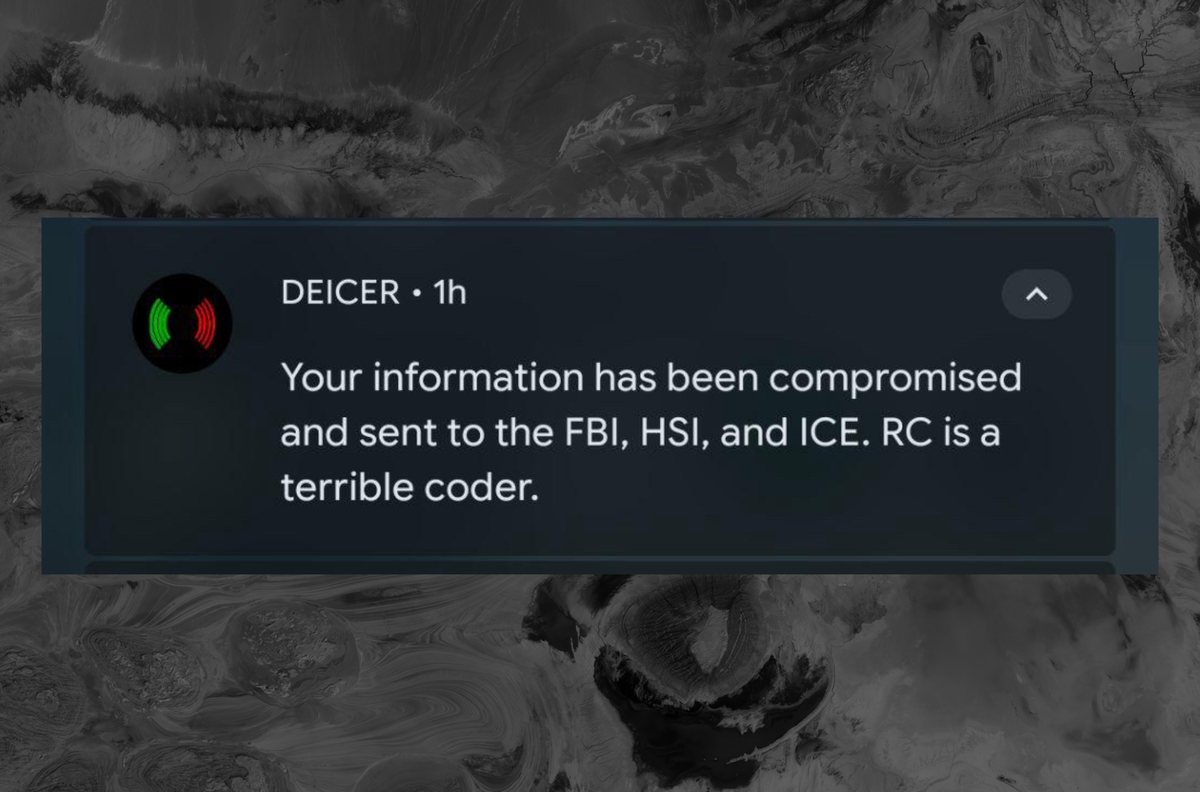

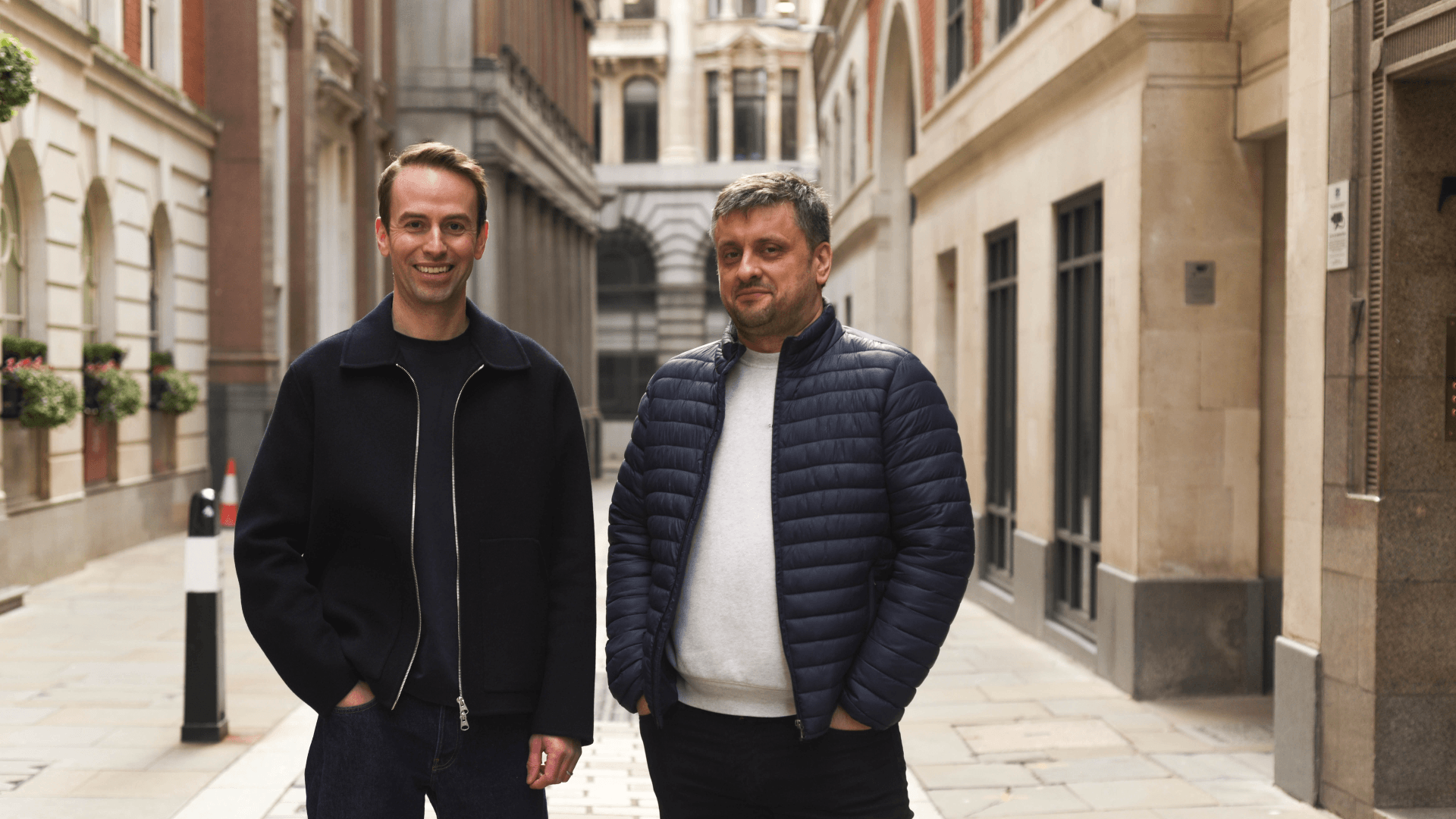

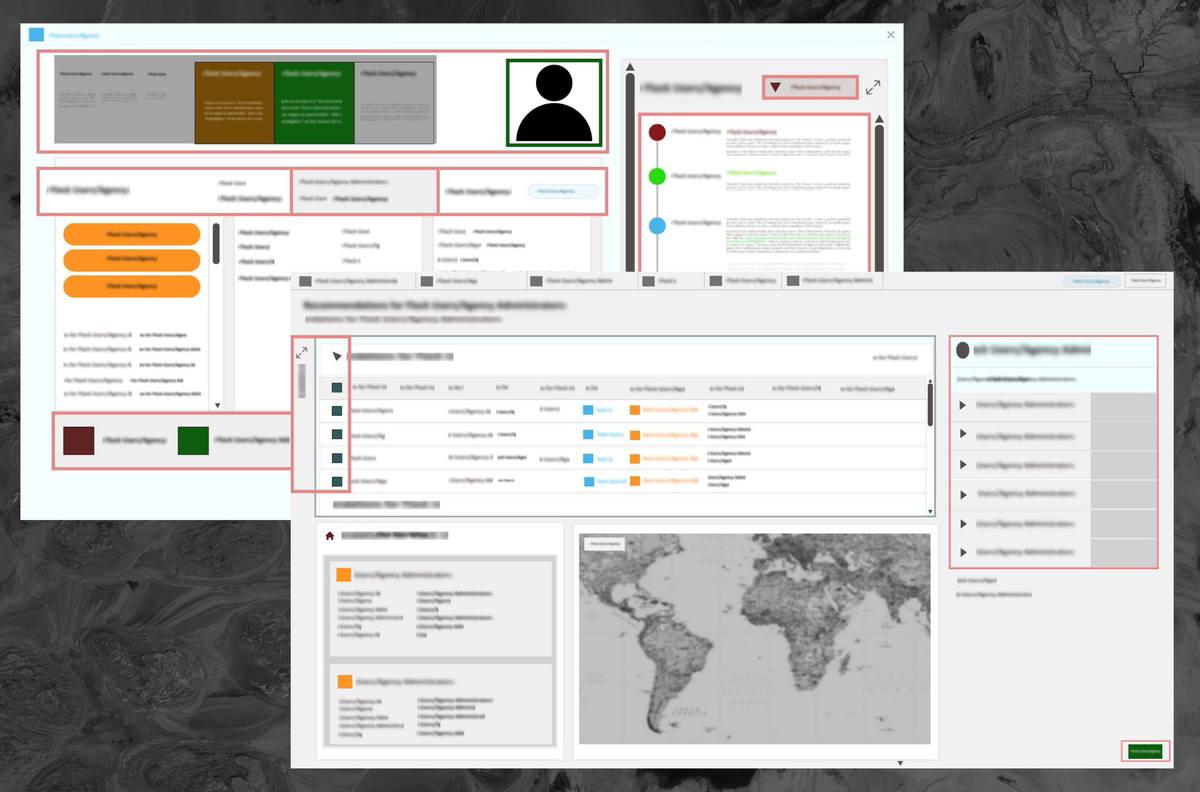

Defense company Scout AI is applying technologies derived from the AI industry to enhance lethal weapon systems, and it recently demonstrated the operational capabilities of those systems.