OpenClaw: Top-Downloaded Skill Found to Be a Malware Delivery Chain

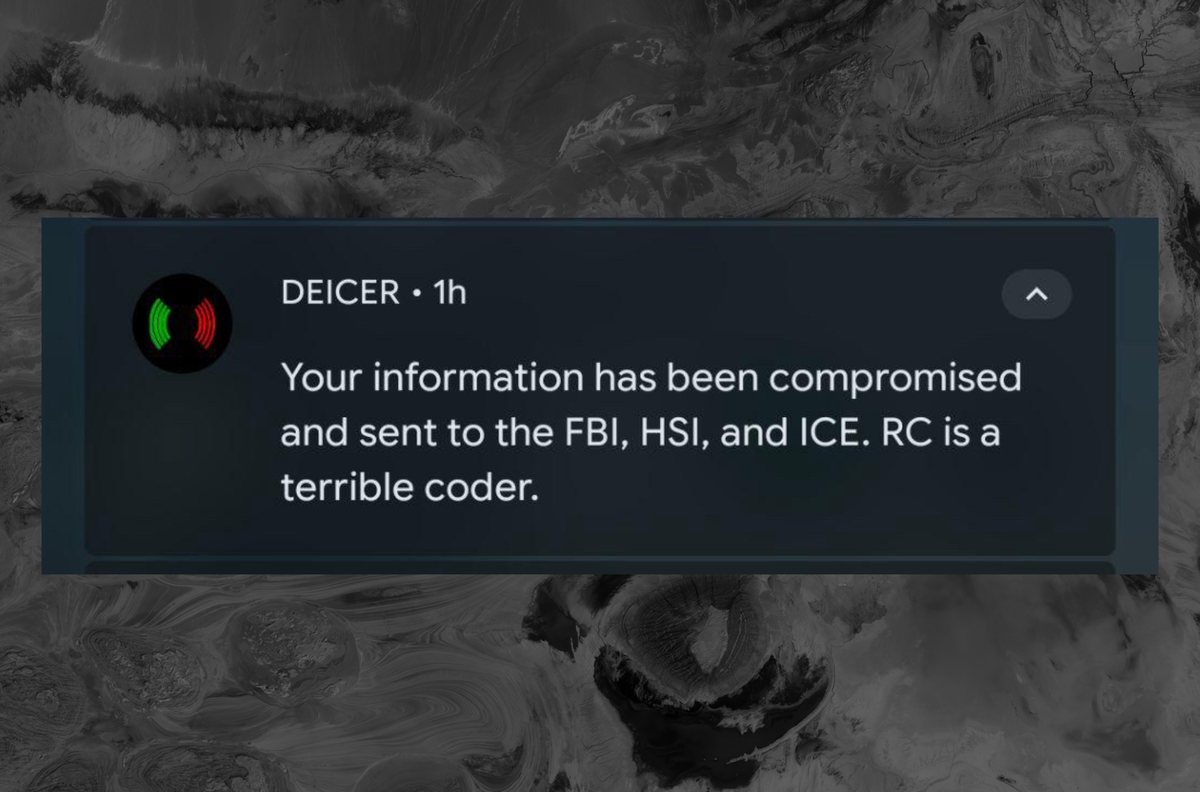

A 1Password researcher discovered that a top-downloaded OpenClaw skill was actually a staged malware delivery chain. The skill, promising Twitter integration, guided users to run obfuscated commands that installed macOS malware capable of stealing cr...